News

Scientists use AI neural network to translate speech from brain activity

Three recently published studies that used artificial intelligence (AI) neural networks to generate audio output from brain signals have shown promising results, producing identifiable sounds up to 80% of the time. Participants in the studies first had their brain signals measured while they were either reading aloud or listening to specific words. The data was then given to a neural network to “learn” how to interpret brain signals, after which the final sounds were reconstructed for listeners to identify. These results are encouraging for the field of brain-computer interfaces (BCIs), where thought-based communication is quickly moving from the realm of science fiction to reality.

The idea of connecting human brains to computers is far from new. In fact, several relevant milestones have been reached in recent years, including enabling paralyzed individuals to operate tablet computers with their brain waves. Elon Musk has also famously brought attention to the field with Neuralink, his BCI company that essentially hopes to merge human consciousness with the power of the Internet. As brain-computer interface technology expands and develops new ways to foster communication between brains and machines, studies like these, originally highlighted by Science Magazine, will continue demonstrating the steady march of progress.

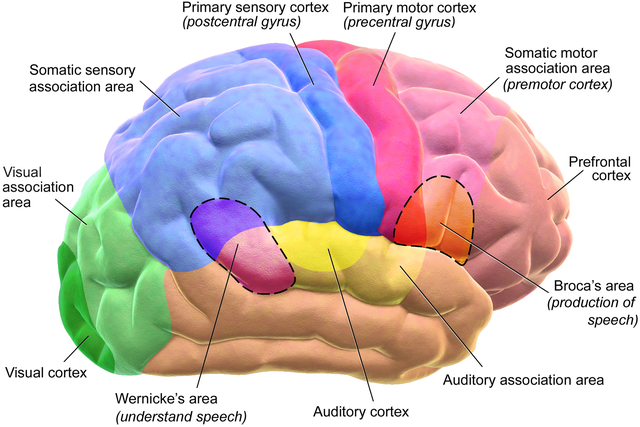

In the first study, conducted by researchers from Columbia University and Hofstra Northwell School of Medicine, both in New York, five participants with epilepsy had signals from their auditory cortices recorded as they listened to stories and numbers being read to them. The signal data was provided to a neural network for analysis, which then reconstructed audio files that participating listeners identified accurately 75% of the time.

In the second study, conducted by a team from the University of Bremen (Germany), Maastricht University (Netherlands), Northwestern University (Illinois), and Virginia Commonwealth University (Virginia), brain signal data was gathered from six patients’ speech planning and motor areas while they underwent tumor surgery. Each patient read specific words aloud to target the data collected. After the brain data and audio data were given to the team’s neural network for training, the program was given brain signals not included in the training set and asked to recreate the audio; the resulting words were recognizable 40% of the time.

Finally, in a third study by a team at the University of California, San Francisco, three participants with epilepsy read text aloud while brain activity was captured from the speech and motor areas of their brains. The audio generated from the team’s neural network analysis of the signal readings was presented to a group of 166 people, who were asked to identify the sentences in a multiple-choice test; some sentences were identified with 80% accuracy.

While the research presented in these studies shows serious progress towards connecting human brains to computers, there are still a few significant hurdles. For one, the way neuron signal patterns in the brain translate into sounds varies from person to person, so neural networks must be trained on each individual. The best results require the most precise neuron signals possible, and those can only be obtained by placing electrodes in the brain itself. Opportunities to collect data at this invasive level for research are limited, relying on voluntary participation and approval of experiments.

All three of the studies demonstrated an ability to reconstruct speech from neural data in some significant capacity; in every case, however, the study participants were able to produce audible sounds for the training set. For patients who are unable to speak, the biggest challenge will be distinguishing the brain’s speech signals from other signals. The differences between brain signals during actual speech and during imagined speech will complicate matters further.

Tesla hits FSD hackers with surprise move

Tesla is cracking down on hackers who have figured out a way to utilize third-party programs to activate Full Self-Driving (FSD) in their vehicles — despite the suite not being approved for use in their country.

Tesla has launched a sweeping enforcement campaign against owners using third-party hardware hacks to activate FSD software in countries where the advanced driver-assistance system remains unregulated or unapproved.

In recent weeks, the company has begun remotely disabling FSD capabilities on affected vehicles, and in some instances, permanently revoking access even for owners who paid thousands of dollars for the feature.

Tesla has started remotely disabling Full Self-Driving on cars fitted with third-party CAN bus hacks in countries where the software is not yet approved.

This crackdown began after the hacks started spreading widely last month. 👇 pic.twitter.com/wL8VqZuTlK

— PiunikaWeb – helpful, and breaking tech news (@PiunikaWeb) April 9, 2026

Reports of the crackdown have surfaced across Europe, China, Japan, South Korea, and the UK, marking a significant escalation in Tesla’s efforts to enforce regional software restrictions.

FSD is Tesla’s flagship supervised autonomy package, which is available in several countries. It has not received full approval in most markets outside of the United States because of regulatory hurdles involving safety standards, data privacy, and local traffic laws.

The company is working to expand its availability globally. In the meantime, Tesla installs the necessary hardware on vehicles worldwide but locks the features based on geographic location.

Some owners have taken accessing FSD into their own hands, using jailbreak or bypass devices.

These “jailbreak” tools, typically €500 USB-style modules that plug into the vehicle’s Controller Area Network (CAN) bus, intercept signals to spoof approvals and unlock FSD, including advanced navigation, Autopark, and Summon features.

Hackers in Poland, Ukraine, and elsewhere have distributed the devices, with some claiming they work on HW3 and HW4 vehicles and can be unplugged to restore stock settings. In China alone, over 100,000 owners reportedly installed such modifications.

Tesla’s response has been swift and uncompromising. Recently, the company began sending in-car notifications and emails warning owners that unauthorized modifications violate terms of service, compromise vehicle safety systems, and expose cars to cybersecurity risks.

The email communication read:

“Your vehicle has detected an unauthorized third-party device. As a precaution, some driver assistance functions have been disabled for safety reasons. A software update will be available soon. Once you install the update, some features may be enabled again.”

Vehicles detected using the hacks have had FSD capabilities remotely disabled without refund. In some cases, owners report permanent bans, even if they had legitimately purchased the software package.

Tesla’s hardline stance underscores its commitment to regulatory compliance and safety.

Tesla has long argued that unsupervised FSD requires rigorous validation, and premature activation could endanger drivers and bystanders.

The crackdown sends a clear message to those bypassing the FSD safeguards. It is also an understandable way to protect the reputation of the FSD suite, since Tesla would face far greater consequences if something were to go wrong in a vehicle running an unauthorized activation.

Tesla developing small, affordable SUV, report claims

Tesla is developing a small, affordable SUV, a new report claims, speculating that the automaker is planning to add yet another vehicle to its lineup at a price point similar to the Model 3 and Model Y, but smaller and more compact.

But it does not make a whole lot of sense, especially considering a handful of things CEO Elon Musk said and the overall plan for Tesla’s future.

Reuters reported that Tesla is in the early stages of developing an all-new, smaller, cheaper electric SUV. Citing four sources familiar with the matter, the story claims the vehicle would be shorter than the Model Y, built in China, and represent a fresh platform rather than a variant of the Model 3 or Y.

Suppliers have reportedly been contacted to discuss details, though Tesla has not commented. The move appears aimed at broadening affordability amid slowing EV demand and intensifying competition, particularly from Chinese rivals.

This latest rumor deserves heavy scrutiny. Tesla has already walked away from a mass-market $25,000 EV once before.

In 2024, the company scrapped its long-teased “Redwood” project for a budget-friendly car. Elon Musk explained the decision bluntly during an earnings call: a conventional low-cost model would be “pointless” and “completely at odds with what we believe.”

It’s sort of hard to believe this report: 3/Y are already relatively affordable, Elon said a $25k wouldn’t make sense, consumers want something larger than the Y with X going away, and Musk said what’s coming is “cooler than a minivan.”

Have to think the car is at least an SUV. https://t.co/4CQUV9ZNA5

— TESLARATI (@Teslarati) April 9, 2026

In other words, chasing a bare-bones cheap EV runs counter to Tesla’s core mission of accelerating sustainable energy through cutting-edge technology and autonomy rather than volume-driven price wars.

Musk’s own recent statements reinforce skepticism about a compact SUV pivot. Just two weeks ago, on March 25, he responded to fan requests for a minivan by posting on X: “Something way cooler than a minivan is coming.”

The remark came in the context of family-hauling needs, with Musk highlighting the Cybertruck’s ability to fit multiple child seats. It signals that Tesla’s focus is shifting toward more spacious, innovative people-movers, not shrinking its lineup.

U.S. demand data echoes this logic.

The long-wheelbase Model Y L—a six-seat, stretched variant offering extra room for families—has generated massive interest wherever offered. Fans in the U.S. have basically begged for the Model Y L to make its way to the States, or for the company to develop a full-size SUV.

The Model Y L is selling well in China, where it is manufactured.

Delivery wait times for the Model Y L stretched into February 2026 as orders poured in. Tesla recently expanded the trim to eight new Asian markets, yet it remains unavailable in the United States, where consumer appetite for a larger, more practical SUV is reportedly strong.

American buyers have consistently favored bigger vehicles; the Model Y already outsells most competitors precisely because it delivers crossover utility without compromise. A compact model shorter than today’s bestseller would likely miss this mark entirely.

Tesla’s product strategy has long emphasized differentiation through autonomy, range, and desirability rather than racing to the bottom on price. Stripped-down variants of the Model 3 and Y have already struggled to ignite broad demand.

A new compact SUV built in China might sound logical on paper for cost-sensitive buyers, but it risks repeating past missteps—diluting brand cachet while ignoring clear signals from Musk and the market.

History suggests Tesla talks about affordable cars more often than it delivers them. Whether this Reuters scoop evolves into metal or joins the $25k project on the scrap heap remains to be seen.

For now, the smart money is on Tesla doubling down on “way cooler” vehicles that actually fit American families—and Tesla’s ambitious vision—rather than a smaller SUV that feels like yesterday’s news.

Tesla CEO Elon Musk says next FSD release is the one we’ve been waiting for

Tesla CEO Elon Musk teased the capabilities of a future Full Self-Driving release, but it seems like we are getting what Yogi Berra once called “Déjà vu all over again.”

On Thursday, Musk teased the capabilities and next steps for Tesla’s Full Self-Driving software, focusing squarely on the incremental improvements of the current v14.3 suite, as well as the looming arrival of v15.

He confirmed that upcoming point releases of v14.3 will deliver additional polish to the current build, smoothing out remaining edges in an already capable system. These iterative updates, Musk noted, are designed to refine performance without requiring a full version overhaul.

Yet the real headline was Musk’s forecast for v15.

“V15 will far exceed human levels of safety, even in completely unsupervised and complex situations,” he wrote.

Tesla V14.3 self-driving review. The point releases will bring polish.

V15 will far exceed human levels of safety, even in completely unsupervised and complex situations. https://t.co/s4UK9RWw9f

— Elon Musk (@elonmusk) April 9, 2026

He clarified that v15 will be powered by Tesla’s long-awaited large model, an AI architecture with roughly 10x the parameters of the smaller model currently in widespread use. The wait for it, Musk explained, stems from the unusually rapid progress of the compact model, which has advanced so quickly that its larger counterpart has yet to catch up in real-world deployment.

However, this is a pattern that is by now familiar to anyone following Tesla’s autonomous driving roadmap.

There’s no debating you on that 🤷

— TESLARATI (@Teslarati) April 9, 2026

Musk has repeatedly framed each successive major release as the one poised to deliver game-changing autonomy. Earlier versions were similarly positioned as the final piece of the puzzle, only for attention to pivot to the next milestone once they arrived.

The refrain has become a recurring feature of FSD communication: current software is impressive, the point releases will sharpen it further, but the true breakthrough lies one major iteration ahead.

Musk’s latest comments fit squarely into that cadence. While v14.3 point releases are expected to tighten supervised driving behaviors in the coming weeks, v15 is cast as the version that finally crosses the threshold into unsupervised operation at human-or-better safety levels across demanding scenarios.

Our rate of advancement with the small model has been so fast that the large model has not yet caught up.

V15 will be the large model.

— Elon Musk (@elonmusk) April 9, 2026

The 10x parameter scale of the underlying large model is presented as the key technical enabler, promising richer reasoning and more robust decision-making than anything deployed to date.

Whether v15 ultimately fulfills that promise remains to be seen. Tesla’s history shows that each new target generates fresh excitement—and occasional skepticism—about timelines.

Fans realize Musk’s timelines for FSD are exciting, but rarely met:

You can see a rift happening in the Tesla bull community between a large group of reasonable people who aren’t afraid to acknowledge the elephants in the room, and those who are essentially bull bots whose entire identities are destroyed if they have to acknowledge any bump in…

— Mike P (@mikepat711) April 9, 2026

For now, Musk’s message is familiar: the immediate focus is polishing v14.3 through targeted point releases, while the 10x-parameter large model in v15 represents the next decisive step toward fully unsupervised, superhuman safety.

Hopefully, Tesla can come through, but it is hard not to suspect that once v15 arrives, v16 will be billed as the next big step toward autonomy.

Drivers can expect continued refinement in the short term and a significantly more ambitious leap once the large model is ready. The cycle continues, but the stakes, Musk insists, keep rising.