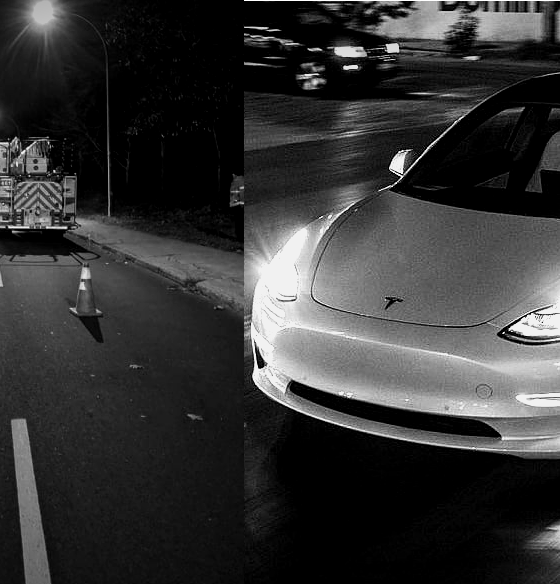

Tesla is currently being investigated by the National Highway Traffic Safety Administration (NHTSA) after several of its electric cars crashed into stationary emergency vehicles while Autopilot was engaged. The premise alone is enough to whet the appetite of every Tesla skeptic, since the idea of Autopilot repeatedly crashing into parked emergency vehicles makes for a compelling narrative. Tesla later released an update enabling Autopilot to detect and slow down for stationary emergency vehicles. The NHTSA responded by calling out the company for not issuing a recall alongside that proactive over-the-air software update.

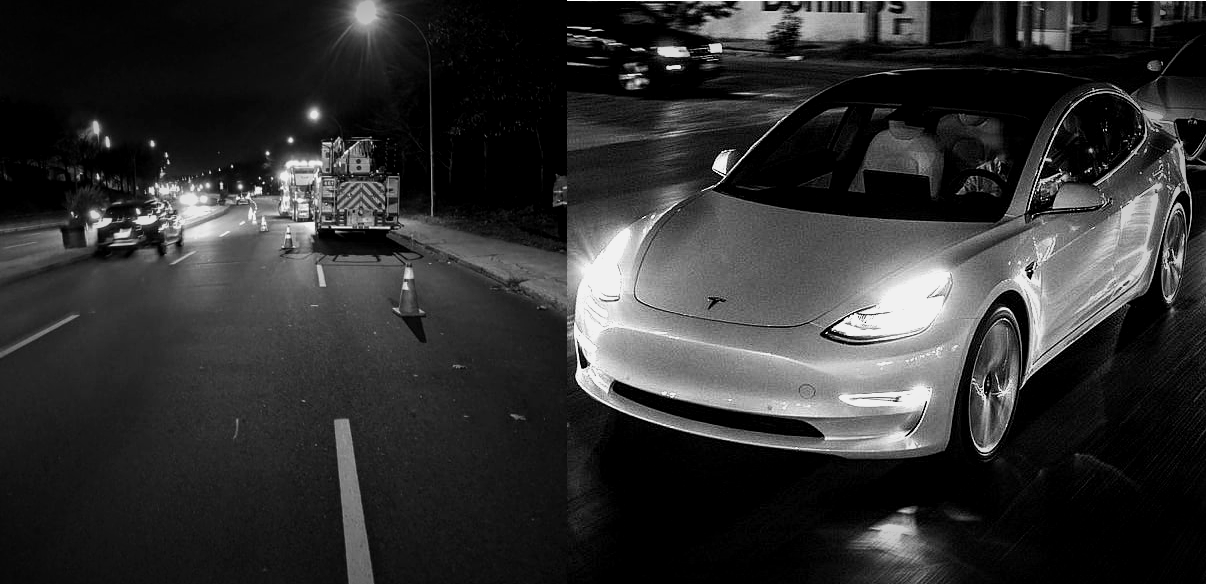

What was lost amid the coverage of the NHTSA investigation was the fact that this relatively minor Autopilot update, which simply allows vehicles to slow down when they detect a police car or fire truck parked on the side of the road, is already saving lives. There is a deadly problem on America’s roads, and very few seem to be acknowledging it. Emergency personnel are dying on the job at a frighteningly frequent rate. They are dying because cars crash into them while they’re parked on the side of the road. And, disturbingly, very little is being done about it.

The Flaws of HumanPilot

*Author’s Note and Trigger Warning: The following sections of this article contain links to footage and other online references that may cause distress to readers. Discretion is advised.

One thing that truly stood out while writing this piece was the sheer frequency of accidents involving emergency personnel while they are responding to someone in need. This is despite the fact that all 50 US states have a “Slow Down Move Over” (SDMO) law in place. The premise of the SDMO law is simple: upon noticing an emergency vehicle’s sirens or flashing lights on the side of the road, drivers are required to move away from it by changing into the next lane. If that is not possible, drivers must slow down to reduce the chances of an accident. The rule could hardly be simpler, yet it is violated on a consistent basis.

This is partly because states interpret the law differently, with some adopting a “Slow Down and Move Over” model while others follow a “Slow Down or Move Over” system. Ultimately, however, there have been zero fatalities involving a vehicle that actually slowed down and moved over when its driver spotted a stationary emergency vehicle. This suggests that the law works, provided it is followed.

But when the Move Over Law is violated, the human toll becomes disturbingly real. A report from the Government Accountability Office (GAO) indicates that about 8,000 injuries involving a stationary emergency vehicle were reported in a single year. This year alone, 57 emergency responders have been killed while addressing a roadside issue. Posts from the National Struck-By Heroes Facebook group, which highlight the aftermath of struck-by injuries (SBIs), are heartbreaking, and videos and posts shared by companies whose staff were killed on the job are harrowing. James D. Garcia, the creator of the Move Over Law and an SBI survivor, highlighted this when he shared some of his insights with Teslarati.

“This year is the 25th anniversary of the first Slow Down Move Over Law, passed in South Carolina in 1996. Every state in the US has had an SDMO Law since 2012, and yet this year, we have already reached a record 56 responder deaths (This number has since risen to 57 as of this writing). Since 2018, there have been over 45,000 collisions with stationary roadside objects. Every seven seconds, an object is struck. Every other day, a responder is struck and injured. Every five days, a responder is killed.”

“If you ask the general public the most dangerous risk to a police officer, most would say the chance of being shot in pursuit. If you ask the biggest danger to a firefighter, most envision being trapped in a burning or collapsing building. But statistics prove the real story. Across all agencies, responders are twice more likely to die in an SBI than any other category of work-related injury. It is by far the most dangerous aspect of our job,” Garcia noted.

A DIY Solution

Perhaps the most heart-wrenching aspect of the whole situation is that SBI data are not formally collected and analyzed by any official government agency, even though SBIs are the leading cause of death and permanent injury for public safety and roadway responders. The problem is so prevalent that James W. Law, a 32-year veteran of the emergency roadside response industry and a researcher specializing in the Move Over Law, developed a light sequence he fondly dubs “E-Modes” to alert other drivers that a parked emergency vehicle is nearby. Simply put, the problem of drivers ignoring SDMO laws is so real and deadly that emergency responders are building DIY solutions themselves, because they cannot count on anyone else.

Law has spent 32 years responding to roadside problems on America’s roads, and over that time he has encountered some of the worst drivers imaginable. He told Teslarati that he has personally been involved in four accidents over the course of his career, the first of which happened when he was just 18 years old. In one incident that seems to prove the point that humans are bad drivers, a driver intentionally crashed into Law because he was upset that an incident had disrupted traffic. Law’s legs broke the irate driver’s headlights in the crash, and the driver then tried to accuse the roadside responder of damaging his car. Fortunately, the police were reasonable, and Law was not charged. The irate driver, on the other hand, received a $500 ticket for using his vehicle as a weapon.

Speaking with Teslarati, Law admitted that he is a fairly well-known Tesla supporter, and he tried his best to emulate CEO Elon Musk’s first-principles thinking when he developed the custom E-Modes light sequence. He aims to donate the light sequence protocols he developed to Tesla, partly because, in his view, the company is the only carmaker that seems to be actively addressing the deadly issue plaguing emergency roadside personnel today. This became evident when the company updated its vehicles to detect and respond to traffic cones on the road. This small update, Law noted, may seem minor, even marginal, to the layman, but for roadside personnel it was a godsend.

“Tesla’s traffic cone recognition is a crucial safety feature that I take full advantage of on any and all incidents. Properly setting up cones to define the ‘Kill Zone’ offers a quick way to communicate directly to any Tesla vehicle. Unlike humans, Tesla Vision is always aware. It’s one of the ways I communicate with oncoming Teslas. If Elon adopts E-Modes, a Tesla could communicate back to me that it is situation-aware. As a safety advocate, I strongly insist that every emergency responder use cones on every scene every time because it’s the right thing to do to protect everyone,” Law said.

The Lone Problem Solver

Although mainstream media coverage of the NHTSA’s probe into Autopilot incidents involving emergency vehicles has been substantial, Tesla accounted for only nine crash injuries involving first responder vehicles in the past 12 months. That is a tiny fraction of the roughly 8,000 injuries cited in the GAO report. The company has also steadily rolled out features to make its vehicles safer. With every update of Autopilot and FSD, features like traffic cone recognition become more refined, and the more refined they become, the more emergency responders they protect. Tesla’s recent Autopilot update, which allows vehicles to slow down when they detect a parked emergency vehicle, is further proof of this.

Law noted that he has been involved in thousands of close calls in his 32-year career, but the one that stands out most involved a Tesla driver in late 2019, just after the company rolled out Autopilot’s capability to recognize and avoid traffic cones. While he was defining a “Kill Zone” on the road after responding to an incident, he saw an approaching Tesla whose driver appeared to be looking down rather than at the road. Law was unsure whether the Tesla was on Autopilot, but the vehicle moved into the other lane seemingly as soon as it detected the cones he had set up. The veteran emergency responder noted that the Tesla driver seemed surprised as the electric vehicle avoided the cones on its own.

Such an incident, ultimately, is what makes Tesla stand apart, at least for now. It may be an inconvenient truth, especially to those who salivate at the thought of FSD or Autopilot going berserk and hunting down emergency responders, but the fact remains that Tesla is doing far more to protect both its drivers and other people on the road than any other carmaker out there. Emergency responder deaths are preventable, and as the creator of the Move Over Law noted, the lion’s share of these incidents is due to human error. It is this human error that technologies such as Autopilot and FSD are trying to solve, NHTSA probe notwithstanding.

“Ninety percent of all struck-by deaths are a direct result of poor driver behavior. That means that nine out of ten responder deaths could have been prevented if the driver had maintained control of their vehicle at a reasonable speed and reacted in a considerate and attentive manner. Twenty-three percent of lethal struck-by violators were impaired. Five percent were distracted, and another three percent were drowsy. It is important we continue to support efforts to reduce drunk driving and speak out about the rapid rise of distracted driving resulting in responder deaths. Multiple agencies have ongoing PR campaigns to address these aspects, but none are taking on the most dominant category — angry, aggressive, entitled, and selfish drivers.

“The remaining 69% of drivers that crashed into and killed a responder were completely sober. They saw the lights, they recognized the situation, yet they still felt the need to speed up and pass just a few more cars before they moved over. They were in too big of a hurry to slow down to a controllable speed and killed a responder. These drivers consciously made an intentional personal decision to carelessly disregard the life of a responder. Self-absorbed drivers have become the norm. Stronger laws, higher fines, bigger signs, and brighter lights have no effect once they get behind the wheel. We need to face this reality and develop a strategy that confronts this disregard. We must reinforce the value of a responder’s life over whatever current personal priorities are influencing these drivers’ behavior,” Garcia noted.

A (Potentially) Safer Future

One can only hope that agencies such as the NHTSA will see the bigger picture with regard to vehicle safety and the advantages of technologies such as Autopilot and Full Self-Driving. It takes an immense amount of short-sightedness, after all, to remain fixated on whether a recall was filed for a proactive Autopilot update, or on 11 incidents in which a Tesla crashed into a stationary emergency vehicle, all while an emergency responder is killed every five days. Focusing on Tesla while ignoring the larger problem seems counterproductive at best.

In an ideal scenario, capabilities like Autopilot’s ability to identify, slow down for, and potentially even move away from a detected emergency vehicle would become mandatory for all cars on the road. As esteemed auto teardown expert Sandy Munro has noted, advanced driver-assist systems such as Autopilot and FSD have the potential to save lives on the same level as seatbelts, perhaps even more. In this light, John Gardella, a shareholder at CMBG3 Law in Boston, MA, told Teslarati that if the NHTSA truly wishes to help roll out new safety features, doing so would be far easier than one might expect.

“Implementing the safety feature in Tesla’s vehicles will be easier than one might imagine. The National Highway Traffic Safety Administration (NHTSA) showed earlier in 2021 through its final rule for safety features for automated driving systems that it does not wish to set onerous standards prior to many features for automated driving system (ADS) vehicles coming to market. In fact, the desire of the NHTSA was to reduce barriers to having ADS safety features come to market more rapidly, and thereby accelerate autonomous vehicles coming to mass markets. The NHTSA received some criticism for its approach. However, the NHTSA does still have the authority to interpret the Federal Motor Vehicle Safety Standards (FMVSS), investigate perceived defects or unreasonably unsafe vehicle features, and carry out its enforcement authority, including recall power,” Gardella said.

SpaceX to become America’s military data backbone for missiles, drones, and warfighters

The Space Force just handed SpaceX $2.29 billion to build the military’s space internet backbone.

The U.S. Space Force awarded SpaceX a $2.29 billion contract on May 26, 2026, to build the backbone of its Space Data Network, a satellite-based communications system designed to keep American military forces connected anywhere on Earth in real time. The contract is firm-fixed-price and requires SpaceX to deliver a fully operational prototype by the end of 2027.

In plain terms, the SDN Backbone is the plumbing behind the military’s space-based internet. It functions as a low Earth orbit satellite constellation providing robust, high-capacity, and low-latency data transport for the Joint Force, connecting sensors and weapons systems continuously, globally, and securely. Think of it as a private, hardened version of Starlink built specifically for battlefield communications, one that soldiers, ships, and aircraft can rely on even in contested environments where ground-based networks have been disrupted.

The Space Force was direct about why SpaceX was selected. “The SDN Backbone leverages the best of commercial innovation and delivers a strong foundation for the SDN mission set — a huge benefit and enabler for our warfighters,” said USSF Col. Ryan Frazier.

“We aren’t trading speed for scale; we are demanding both. By using rapid prototyping and Other Transaction Authorities, we are ensuring our advanced solutions are integrated and delivered to the warfighter as fast as possible,” added USSF Lt. Col. Fry, SDN Backbone system program manager.

The SDN Backbone will work alongside the Space Development Agency’s Transport Layer, with the two systems forming a unified open architecture to provide critical data transport for current and future Department of War missions.

As Teslarati has reported, this is not SpaceX’s first Space Force contract of 2026. In April, the Space Force awarded SpaceX $178.5 million to launch missile tracking satellites, and SpaceX is already embedded in the Golden Dome missile defense software group. The $2.29 billion SDN Backbone award puts SpaceX at the center of how the American military communicates in space, a position with direct implications for its reported $1.75 trillion IPO valuation as the company heads toward a public offering as early as June 2026.

Tesla’s dedicated Optimus factory construction officially underway at Giga Texas

Tesla’s dedicated factory for building up to ten million Optimus units is officially under construction at Gigafactory Texas.

Drone footage released on May 27 by Giga Texas observer Joe Tegtmeyer captures a significant milestone: the first steel structure standing at Tesla’s new Optimus factory on the North Campus of the facility.

Phase two of land reclamation is advancing steadily, and the progress will let the new building extend nearly the full length of the main Giga Texas factory, potentially exceeding 4,000 feet, while measuring somewhere between 50 and 70 meters narrower. Extensive foundation work is proceeding as well.

Big news at the new Optimus 10m/y factory construction site today! The 1st steel structure has been erected & as expected the second phase of land reclamation is underway.

This will allow this new factory to grow to nearly the same length as the main Giga Texas factory,… pic.twitter.com/FidRLV6XpU

— Joe Tegtmeyer 🚀 🤠🛸😎 (@JoeTegtmeyer) May 27, 2026

This facility forms a central element of Tesla’s broader North Campus expansion at Giga Texas. The project will add more than 5.2 million square feet of new industrial space. It sits alongside other advanced developments, including a Terafab for next-gen AI chips. The scale reflects Tesla’s commitment to transforming humanoid robotics into a core pillar of the company’s future.

Musk has said on several occasions that Optimus will be the biggest product in the world, and he believes it will be Tesla’s biggest contributor to valuation.

Tesla plans to build about 10 million robots at the site annually once it is completed, which would be about 27,000 units each day.

The Optimus plant at Giga Texas is part of Tesla’s phased strategy for Optimus manufacturing. To start production of the robot well before the Giga Texas plant is complete, Tesla ended production of the Model S and Model X, which were built in Fremont, California, to make way for initial Optimus manufacturing there.

Production in Fremont is slated to start in July or August of this year, and early units will support internal factory tasks while the team gathers real-world data to refine processes. The Giga Texas facility will house a second-generation production line targeting high-volume output starting in summer 2027.

Musk has repeatedly described Optimus as potentially more valuable than Tesla’s entire vehicle business. Current versions are already completing minor tasks around various facilities, while Tesla continues to refine its abilities and add new features.

Tesla’s total investment could reach several billion dollars. Significant challenges lie ahead, including the creation of an entirely new manufacturing ecosystem, the refinement of AI systems for dependable autonomy, and the development of reliable supply chains for actuators, sensors, and other components.

Nevertheless, the visible progress at Giga Texas highlights Tesla’s capacity to translate ambitious concepts into physical reality.

Tesla’s Optimus factory is much more than a simple expansion project; it is the second phase of what could become the biggest product ever. With construction underway, 2027 is poised to be a transformative year for Tesla as it evolves from an electric vehicle leader into a pioneer of intelligent, general-purpose machines.

Tesla teases going Plaid Mode with the Model 3

Tesla Vice President of Vehicle Engineering Lars Moravy recently revealed that the company has considered introducing a Plaid powertrain on the Model 3, though doing so could involve some challenges.

On the Ride the Lightning podcast, Moravy said that he thinks about a Plaid Model 3 “all the time,” suggesting it could have a place in Tesla’s potential lineup of future vehicles.

Now that the Plaid powertrain is effectively retired following the discontinuation of the Model S and Model X, Tesla could find a way to reintroduce the lightning-quick trim level on its mass-market vehicles.

There would be challenges, however. Moravy said that a Model 3 Plaid would likely adopt the carbon-sleeved motors used in the Model S Plaid, but packaging would be a major hurdle; as he put it on the podcast, it would be a “tight engineering squeeze.”

There are no active production plans for a Model 3 Plaid at this point. Still, with the Model S and Model X Plaid no longer available, Tesla may be willing to introduce something even more white-knuckle than the Model 3 Performance, which already boasts a 2.9-second 0-60 MPH time and a top speed of 163 MPH.

Of course, there is the Roadster, but it remains unclear exactly when it will reach the market, and it will certainly not be accessible to many buyers.

Tesla has prided itself on building some of the best cars out there, but it is also interested in building cars that are simply fun to be in.

A Plaid Model 3 could truly push the limits and end up being one of the best cars Tesla ever builds, especially if it can shave at least half a second off the Model 3 Performance’s 0-60 MPH time and slightly increase its top speed.

More than anything, the real changes would come in ride quality and aerodynamics. Improvements to suspension, handling, and downforce would be the true trademarks of a Plaid Model 3, and bringing the Plaid powertrain to the Model 3 could be a great move for the company and for customers interested in high-end performance.