News

NASA’s InSight hopes to detect “marsquakes”, deploys seismometer on Mars

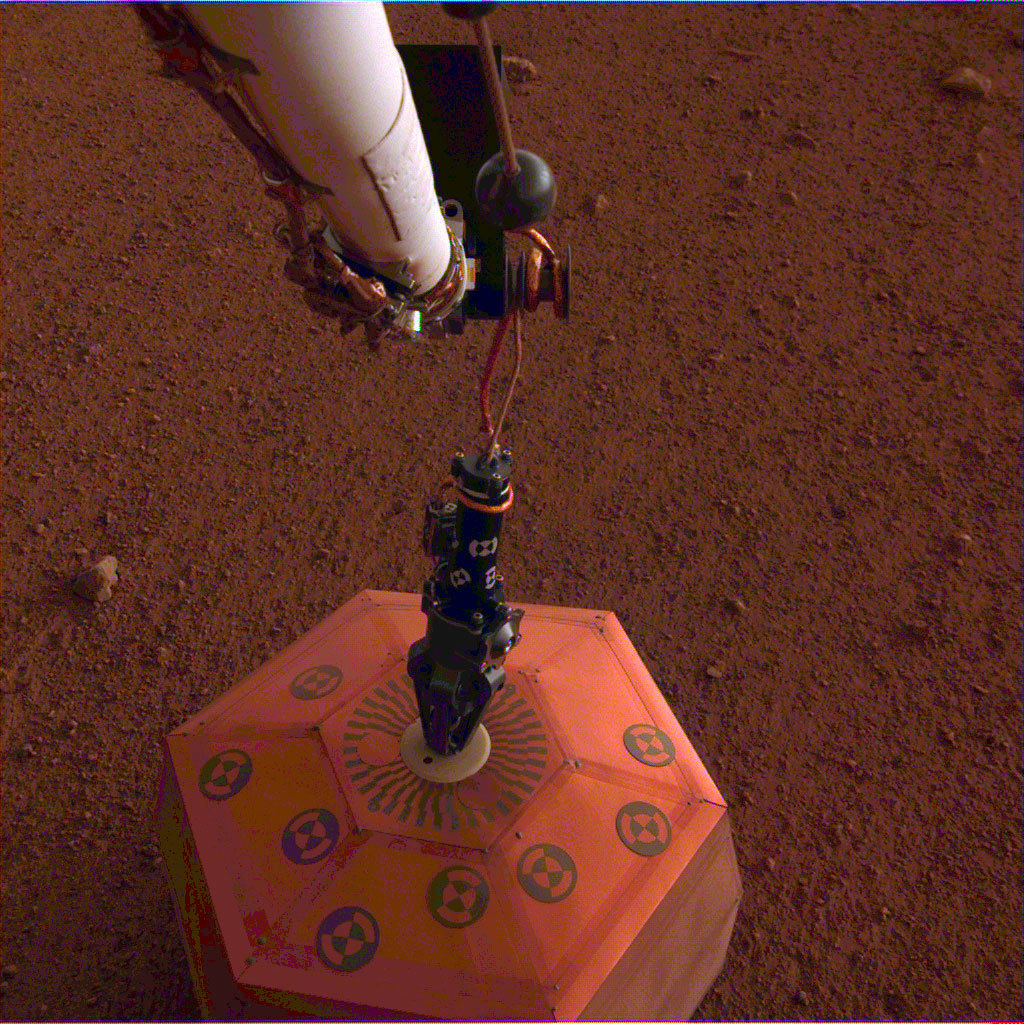

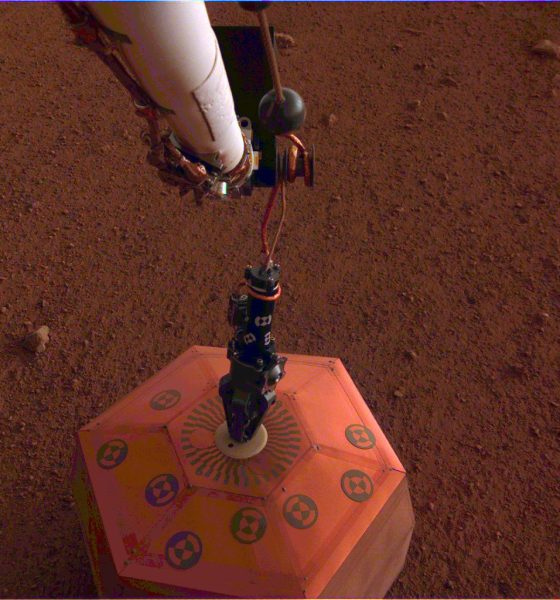

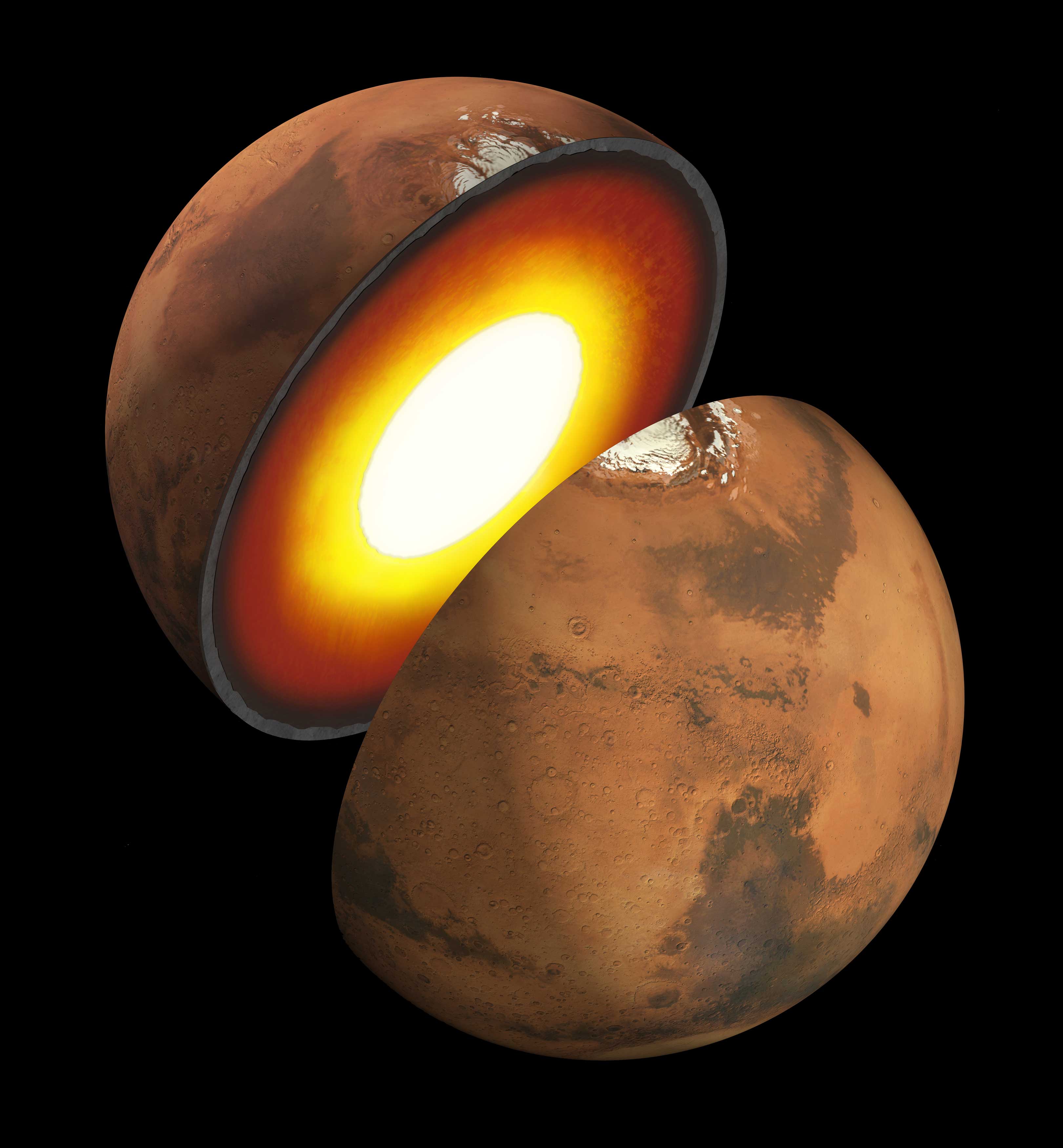

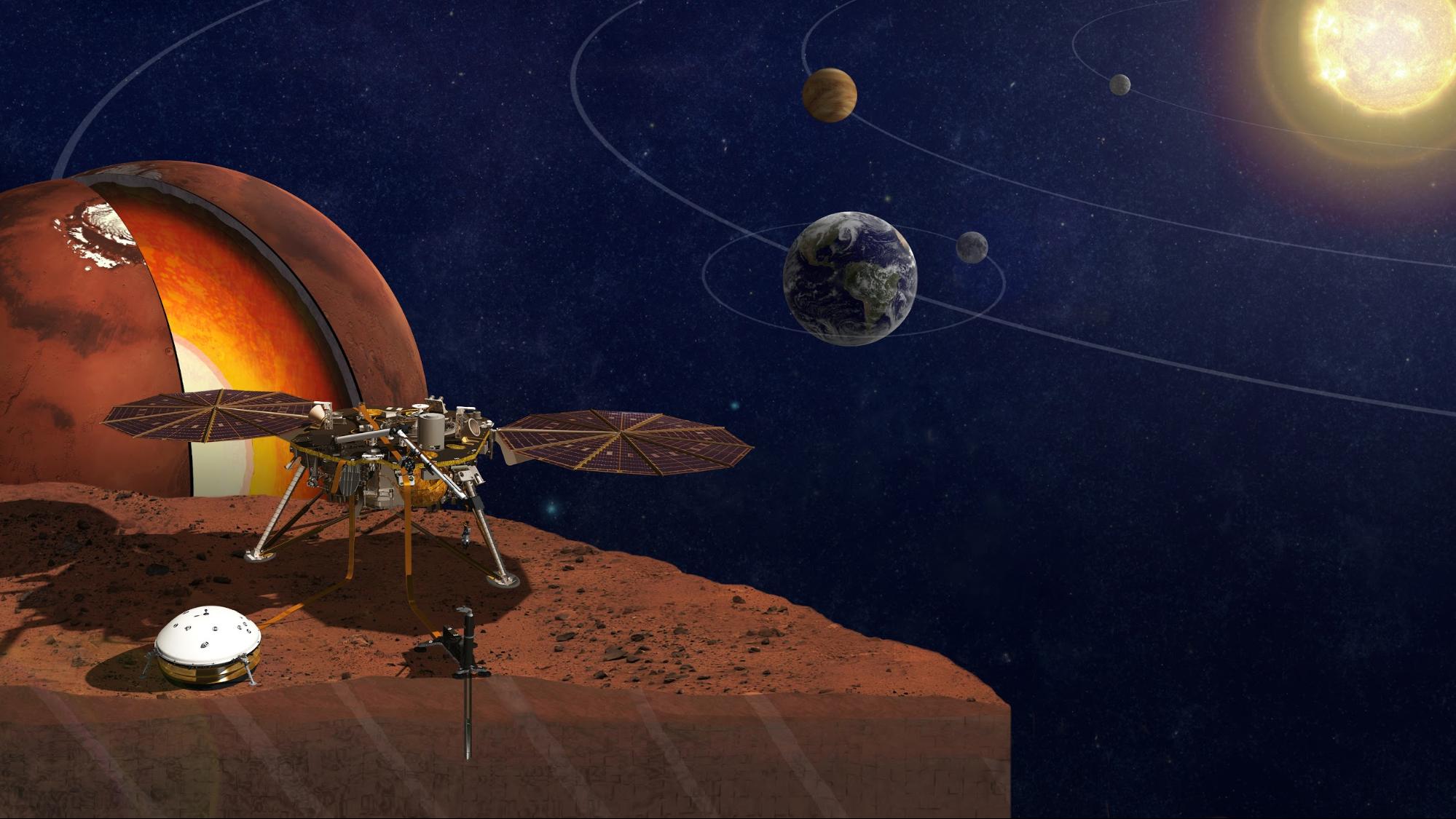

In another historic feat for NASA’s InSight lander, a seismometer has now been deployed on Mars, marking the first time a seismometer has been placed directly onto the surface of another planet. Once the craft’s team has things set up for readings, the instrument will begin measuring the internal vibrations of the red planet, with the ultimate goal of learning about the activity and composition of its core and crust. InSight’s instruments will also study how powerful and frequent seismic activity is on Mars, along with how often the surface is hit by meteorites. If we hope to explore and possibly live there one day, this is all very important information to have.

After launching on May 5, 2018, aboard an Atlas V rocket from California, InSight and its twin MarCO CubeSat companions traveled through deep space for around 6 months before landing on the Martian surface at 11:52 a.m. PST on November 26, 2018, an event watched live around the world, including a broadcast in Times Square, New York City. The planned mission for the craft is a little over 1 Martian year, i.e., about 2 Earth years, during which time it will aim to provide scientific data useful for understanding the processes that have shaped the rocky planets of our solar system. In other words, the things InSight learns about Mars will be directly relevant to our own planet as well.
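The mission-length comparison above can be checked with a quick conversion. This is only a rough sketch: the figure of about 687 Earth days per Martian year is an assumption brought in from general reference values, not something stated in the article, and the function name is made up for illustration.

```python
# Rough conversion of mission length from Martian years to Earth years.
# Assumption: one Martian year lasts about 687 Earth days (not from the article).
MARS_YEAR_EARTH_DAYS = 687.0
EARTH_YEAR_DAYS = 365.25


def martian_to_earth_years(martian_years: float) -> float:
    """Return the equivalent duration in Earth years (hypothetical helper)."""
    return martian_years * MARS_YEAR_EARTH_DAYS / EARTH_YEAR_DAYS


one_mars_year = martian_to_earth_years(1.0)
print(f"1 Martian year is roughly {one_mars_year:.2f} Earth years")
```

Under that assumption, one Martian year comes out a little under two Earth years, which is consistent with a mission of "a little over 1 Martian year" lasting about 2 Earth years.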

InSight’s name is actually an acronym for “Interior Exploration using Seismic Investigations, Geodesy and Heat Transport”, each part being a reference to the specific science it will be conducting. Three primary scientific instruments on the craft will carry out those objectives, assisted by several auxiliary instruments on board the lander.

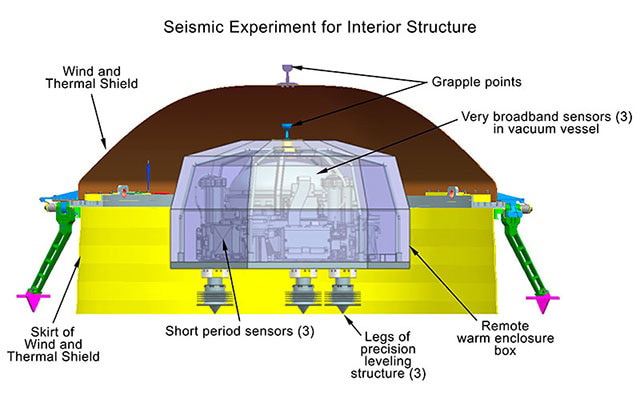

First, a seismometer named the Seismic Experiment for Interior Structure (SEIS) will measure seismic waves from the Martian surface to study the planet’s crust. When magma moves or meteorites hit, the instrument will detect the motion and gather information that will tell scientists about Mars’ temperature, pressure, and composition. This is the instrument featured in the lander’s recent photo.

Second, a heat flow probe named the Heat Flow and Physical Properties Probe (HP3) will burrow more than 10 feet into the surface to measure the heat still flowing out of Mars, giving clues about how it evolved and whether Earth and Mars are made of the same materials. Finally, a radio science instrument named the Rotation and Interior Structure Experiment (RISE) will measure tiny changes in the location of InSight to track Mars’ “wobbles” on its axis. This movement data will provide information about the planet’s core.

InSight is conducting its experiments on the western side of the Elysium Planitia of Mars, a smooth, flat region near the planet’s equator. The location was chosen from a pool of 22 candidate landing sites, all within Elysium, evaluated during several workshops from 2013 to 2015. The decision was based on Elysium’s proximity to the equator (maximum sun for InSight’s solar arrays), low elevation (plenty of atmospheric space for its landing), lack of rocks and slopes (flat enough for the instruments to deploy and work properly), and subsurface structure (so the digging instruments could burrow easily).

Next, InSight will finish setting up its remaining instruments and begin its full science mission. We can expect to continue receiving image updates from the lander as more milestones are reached. Here’s a bonus if you want to feel like you’re “there” with InSight: NASA’s “Experience InSight” interactive web page lets you control a virtual version of the lander in a Martian environment. You can deploy its solar panels, move around a few of its instruments, or just learn about the various parts that make up the mission. There are also two virtual cameras, just like the ones onboard the actual craft, that let you watch the movements you’re making, just as InSight’s team sees them from their control center.


Energy

Zuckerberg’s Meta taps Musk’s Tesla for massive clean energy project

In a notable intersection of Big Tech powerhouses, Meta, led by Mark Zuckerberg, has partnered with Canadian energy infrastructure giant Enbridge on a significant renewable energy initiative that will rely on battery technology from Elon Musk’s Tesla.

The project, which was announced this week, marks another step in Meta’s aggressive push to power its expanding data center operations with clean energy, answering a common complaint about how much electricity such facilities consume.

This new development is located near Cheyenne, Wyoming, and will feature a 365-megawatt (MW) solar farm paired with a 200 MW/1,600 megawatt-hour (MWh) battery energy storage system, also known as BESS. Tesla is providing the batteries for the project, valued at roughly $200 million.
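The two battery ratings above describe different things: megawatts are the maximum output power, and megawatt-hours are the total stored energy. Dividing one by the other gives the time the system could run at full output. A minimal sketch, using only the figures quoted in the article and a hypothetical helper name:

```python
def storage_duration_hours(energy_mwh: float, power_mw: float) -> float:
    """Hours a battery can discharge at full rated power (hypothetical helper)."""
    if power_mw <= 0:
        raise ValueError("power_mw must be positive")
    return energy_mwh / power_mw


# Figures quoted for the Wyoming battery energy storage system.
duration = storage_duration_hours(energy_mwh=1600, power_mw=200)
print(f"Full-power discharge duration: {duration:.0f} hours")
```

By this arithmetic, the system would be an 8-hour battery at full rated output. Real-world duration depends on operating limits and efficiency losses that the article does not describe.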

The story was originally reported by Utility Dive.

This Wyoming project represents the first phase of Enbridge and Meta’s joint “Cowboy Project.” Once operational, it will deliver power to Meta’s regional data centers through Cheyenne Light, Fuel, and Power under Wyoming’s Large Power Contract Service tariff.

This tariff, originally developed in collaboration with Microsoft and Black Hills Energy, is designed specifically for large loads like data centers. It ensures that the renewable supply serves hyperscale customers without impacting retail electricity rates for other users.

The battery system will operate under a long-term tolling agreement, providing dispatchable capacity that enhances grid reliability. During periods of high demand, the utility can draw on the stored energy, addressing one of the key challenges of integrating large-scale renewables with the explosive growth of data center electricity demand driven by artificial intelligence.

This latest collaboration builds on prior joint efforts between Enbridge and Meta in Texas, including the 600 MW Clear Fork Solar, 152 MW Easter Wind, and 300 MW Cone Wind projects. Together with the Wyoming initiative, the companies have now partnered on roughly 1.6 gigawatts (GW) of combined solar, wind, and storage capacity.
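The "roughly 1.6 GW" figure can be reproduced from the capacities quoted in the article. The sketch below assumes the total counts the Wyoming battery by its 200 MW power rating alongside the generation projects; the article does not say exactly how the sum was formed.

```python
# Capacities in megawatts, as quoted in the article.
# Assumption: the battery is counted by its 200 MW power rating.
projects_mw = {
    "Clear Fork Solar (Texas)": 600,
    "Easter Wind (Texas)": 152,
    "Cone Wind (Texas)": 300,
    "Wyoming solar farm": 365,
    "Wyoming battery storage": 200,
}

total_mw = sum(projects_mw.values())
total_gw = total_mw / 1000  # 1 GW = 1,000 MW

print(f"Combined capacity: {total_mw} MW, about {total_gw:.1f} GW")
```

Under that assumption the projects add up to 1,617 MW, which rounds to the 1.6 GW cited.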

The deal highlights the intensifying demand for reliable, low-carbon power from technology giants. Meta has committed to supporting its data center growth with renewable energy, joining peers like Microsoft and Google in seeking large-scale solutions. Enbridge’s Allen Capps described the project as “one of the larger utility-scale battery installations supporting U.S. data center operations and growth.”

The involvement of Tesla’s battery technology adds an intriguing layer, linking two of the world’s most prominent tech leaders—Zuckerberg and Musk—in the clean energy transition.

As data centers continue to drive unprecedented electricity load growth across the United States, projects like this one illustrate how hyperscalers are turning to strategic partnerships with traditional energy players and innovative storage solutions to meet both sustainability goals and reliability needs.

Elon Musk

SpaceX reveals reason for Starship V3 stand down, announces next launch date

SpaceX has stood down from what was supposed to be the first test launch of its Starship V3 rocket tonight after a minor issue with a hydraulic pin delayed the flight once more.

The company scrubbed its first test flight of the upgraded Starship V3 on May 21 in the final minutes of the countdown. SpaceX CEO Elon Musk quickly took to social media platform X, explaining that a hydraulic pin on the launch tower’s “chopsticks” arm failed to retract properly.

Musk added that the company would try to fix the issue overnight. If the repair succeeds, SpaceX will attempt another launch tomorrow at 5:30 p.m. CT (6:30 p.m. ET, 3:30 p.m. PT).

The hydraulic pin holding the tower arm in place did not retract.

If that can be fixed tonight, there will be another launch attempt tomorrow at 5:30 CT. https://t.co/DJAdvDYQpH

— Elon Musk (@elonmusk) May 21, 2026
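For readers outside the Central time zone, the quoted launch time can be converted with Python's standard library. This is a sketch under two assumptions that do not come from the article: that "tomorrow" means May 22, 2026, and that U.S. daylight time offsets apply, as they normally do in late May. Fixed offsets are used here instead of a time zone database to keep the example self-contained.

```python
from datetime import datetime, timedelta, timezone

# Assumption: fixed daylight-time offsets, valid for the U.S. in late May.
CENTRAL = timezone(timedelta(hours=-5), "CT")
EASTERN = timezone(timedelta(hours=-4), "ET")
PACIFIC = timezone(timedelta(hours=-7), "PT")

# Assumption: "tomorrow" relative to the May 21, 2026 post is May 22, 2026.
launch_central = datetime(2026, 5, 22, 17, 30, tzinfo=CENTRAL)


def describe(moment: datetime, zone: timezone) -> str:
    """Format the same instant as a 24-hour clock time in another zone."""
    local = moment.astimezone(zone)
    return f"{local:%H:%M} {local.tzname()}"


for zone in (CENTRAL, EASTERN, PACIFIC):
    print(describe(launch_central, zone))
```

The three lines printed correspond to 5:30 p.m. CT, 6:30 p.m. ET, and 3:30 p.m. PT, matching the times given above.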

The countdown for Starship Flight 12 — featuring the taller and more capable V3 stack with Booster 19 and Ship 39 — had been progressing smoothly until the late-stage issue surfaced. The Mechazilla tower arm, designed to secure the vehicle on the pad and eventually catch returning boosters, could not complete its retraction sequence.

SpaceX teams immediately began troubleshooting the hydraulic system for an overnight repair.

Starship V3 introduces several significant upgrades over earlier versions. These include greater propellant capacity, more powerful Raptor 3 engines, larger grid fins, enhanced heat shielding, and an improved fuel transfer system.

We covered the changes that were announced just days ago by SpaceX:

SpaceX unveils sweeping Starship V3 upgrades ahead of May 19 launch

The changes are intended to increase payload performance, support higher flight rates, and advance the vehicle toward operational missions, including Starlink deployments, NASA Artemis lunar landings, and future crewed Mars flights. The debut flight from Starbase’s new Launch Pad 2 will mark an important milestone in scaling up the fully reusable Starship system.

This stand-down highlights the intricate challenges of preparing the world’s most powerful rocket for flight. Despite extensive pre-launch checks, a single component in the ground support equipment can force a scrub.

The incident aligns with Starship’s proven iterative development approach. Previous test flights have encountered both successes and setbacks, each providing critical data that refines hardware and procedures. Some outlets may call some of these flights “failures,” when in reality, they are all opportunities for SpaceX to learn for the next attempt.

With V3, SpaceX aims to reduce ground-system dependencies and increase launch cadence to meet ambitious long-term goals.

News

Tesla Model Y becomes first-ever car to reach legendary milestone

The Tesla Model Y became the first-ever car to reach a legendary Norwegian milestone, surpassing 100,000 new registrations after gaining a reputation as one of the most popular vehicles in the country and the world.

As of May 20, Norwegian authorities have registered 100,224 units of the electric SUV, according to data from Norway’s road traffic information council, Opplysningsrådet for veitrafikken (OFV).

Roughly one in every 29 passenger cars on Norwegian roads is now a Model Y, underscoring its rapid rise as a national favorite.

Since the first deliveries in August 2021, the Model Y has transformed from a newcomer to a staple in Norwegian traffic.


Geir Inge Stokke, the Managing Director of OFV, described the achievement as “remarkable,” noting that few single models have gained such traction so quickly. “Tesla Model Y has hit the Norwegian market spot on, and the numbers illustrate how fast the EV market has developed here,” Stokke said.

The Model Y’s success reflects Norway’s aggressive push toward electrification. Nearly nine out of ten units, 87.6 percent to be exact, are privately registered, with the remaining 12.4 percent on company plates. Owners span the country, from major cities to smaller municipalities, proving it is no longer just an urban or niche vehicle but a true “people’s car.”

Who is Buying Tesla Model Ys in Norway?

Typical Model Y drivers are men in their mid-40s. The average registered user age is 44, with 83 percent male and 17 percent female. Stokke noted that household usage often extends beyond the primary registrant, broadening the vehicle’s real-world appeal.

Geographically, adoption concentrates in urban centers with strong charging infrastructure. Oslo leads with 16,861 registrations (16.82 percent of the national total), followed by Bergen (7,450), Bærum (4,313), and Trondheim (4,240).

The top five municipalities—Oslo, Bergen, Bærum, Trondheim, and Asker—account for 35,463 units, or about 35 percent of all Model Ys. Yet the vehicle’s presence outside big cities highlights its broad acceptance.
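The municipal shares above follow directly from the registration counts. The sketch below recomputes them from the article's figures; the Asker count is not reported and is inferred here by subtracting the four named municipalities from the top-five total, so treat it as an estimate.

```python
TOTAL_MODEL_Y = 100_224  # national registrations as of May 20
TOP_FIVE_TOTAL = 35_463  # Oslo, Bergen, Bærum, Trondheim, and Asker combined

# Counts reported in the article for four of the top five municipalities.
reported = {"Oslo": 16_861, "Bergen": 7_450, "Bærum": 4_313, "Trondheim": 4_240}


def share_percent(units: int, total: int = TOTAL_MODEL_Y) -> float:
    """Return units as a percentage of the national total (hypothetical helper)."""
    return 100 * units / total


# Inferred, not reported: Asker is whatever remains of the top-five total.
asker_units = TOP_FIVE_TOTAL - sum(reported.values())

for name, units in reported.items():
    print(f"{name}: {share_percent(units):.2f}%")
print(f"Asker (inferred): {asker_units} units")
print(f"Top five combined: {share_percent(TOP_FIVE_TOTAL):.1f}%")
```

The Oslo share reproduces the 16.82 percent quoted, and the top five come to a little over 35 percent of all registrations.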

Growth Trajectory and Popularity

Tesla built a lot of sales momentum in a short amount of time. Registrations closed out 2021 at 8,267, then more than doubled to over 17,000 units in 2022 and climbed past 23,000 units in 2023. The strongest year so far was 2025, when Tesla recorded 27,621 Model Y registrations.

So far in 2026, the Model Y already has 7,036 registrations.

Tesla’s Global Success with the Model Y

Tesla has tasted plenty of success with the Model Y: it has been the best-selling car in the world three times, it has dominated EV sales in numerous countries, and it has contributed to the mass adoption of electric vehicles across the planet.

As Stokke emphasized, the Model Y’s journey from newcomer to icon mirrors Norway’s broader success story. With robust incentives that push sales, excellent infrastructure, and consumer eagerness to transition to sustainable powertrains, the country continues setting global benchmarks in sustainable mobility.

The Tesla Model Y stands as a shining example of how quickly change can happen when conditions align.