News

SpaceX CEO Elon Musk arrives in Texas for milestone Starship engine test

On Saturday evening, SpaceX CEO Elon Musk landed in Waco, Texas – perhaps along with additional SpaceX propulsion engineers – for the critical static fire debut of the first “radically redesigned” Raptor engine, built to power BFR’s Starship upper stage and Super Heavy booster.

If the first operationalized Raptor’s static fire tests go well, there are several possible routes the test program could take, all of which will end up with this engine and several others being tested and ultimately installed on the Starship hopper (Starhopper) prototype under construction roughly 500 miles (800 km) south of SpaceX’s Raptor test cell.

At @SpaceX Texas with engineering team getting ready to fire new Raptor rocket engine pic.twitter.com/ACFM8AtY8w

— Elon Musk (@elonmusk) February 3, 2019

Shortly after Musk revealed official photos of the first operationalized Raptor preparing for an inaugural static fire test at SpaceX’s McGregor, Texas facilities, the SpaceX and Tesla CEO’s private jet was seen landing at Waco, Texas around sunset. Although all SpaceX technical expertise needed for Raptor’s first ignition was probably already on site several days prior, Musk has been known to offer seats on his private planes to SpaceX and Tesla employees when a critical group is needed away from their normal base of operations. The best-known examples involve Tesla engineers traveling between Fremont and Gigafactory 1 when needed, often to solve production holdups.

Regardless of whether he was traveling with members of the SpaceX propulsion team, Musk’s arrival at McGregor yesterday signified that Raptor Block 1’s first integrated hot-fire was imminent. Assuming no attempt was made on Saturday night or Sunday morning, SpaceX technicians and engineers are presumably still installing what is effectively a new rocket engine and ensuring that Raptor’s test cells – extensively overhauled and upgraded for the occasion – are working as intended. While the development Raptors SpaceX built hovered around 1000 kN (~100t) of thrust, roughly the same as Merlin 1D, the Raptor now on the stand in Texas is reportedly a 200 ton-class engine, more than double the thrust of any single engine SpaceX engineers and technicians have built or test-fired in 15 years of engine development.
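For readers who want to check the thrust figures above, here is a minimal sketch of the unit conversion between kilonewtons and tonnes-force. It assumes "ton" means metric tonne-force under standard gravity; the function names are illustrative and not from SpaceX.

```python
# Convert between kilonewtons and metric tonnes-force.
# Assumption: "ton" in the article means metric tonne-force (1000 kgf).

STANDARD_GRAVITY = 9.80665  # m/s^2, so 1 tonne-force = 9.80665 kN


def kn_to_tonnes_force(kilonewtons: float) -> float:
    """Return the thrust in tonnes-force for a thrust given in kN."""
    return kilonewtons / STANDARD_GRAVITY


def tonnes_force_to_kn(tonnes_force: float) -> float:
    """Return the thrust in kN for a thrust given in tonnes-force."""
    return tonnes_force * STANDARD_GRAVITY


dev_raptor_tf = kn_to_tonnes_force(1000.0)   # development Raptor, ~1000 kN
new_raptor_kn = tonnes_force_to_kn(200.0)    # reported 200 ton-class engine

print(f"1000 kN is about {dev_raptor_tf:.0f} tonnes-force")
print(f"200 tonnes-force is about {new_raptor_kn:.0f} kN")
```

Under that assumption, 1000 kN works out to roughly 102 tonnes-force, which matches the article's "~100t" shorthand, and 200 tonnes-force to roughly 1961 kN.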

- The only official render of Raptor, published by SpaceX in September 2016. The Raptor departing Hawthorne in Jan ’19 looked reasonably similar. (SpaceX)

- Technically speaking, this Raptor is the smaller (sea-level) version of the engine. (SpaceX)

- SpaceX’s current Texas facilities feature a test stand for Raptor, the engine intended to power BFR and BFS to Mars. (SpaceX)

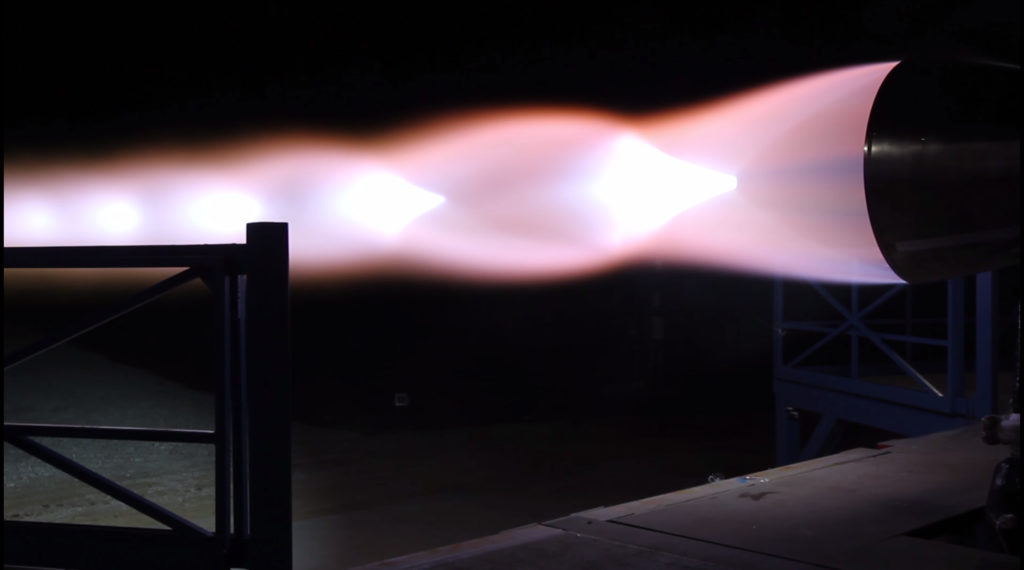

- A Raptor prototype is seen here during its first-ever ignition test. (SpaceX)

- A 2017 test-firing of the mature development Raptor, roughly 50% less powerful than the full-scale system. (SpaceX)

A fork in the R&D road

Prior to completing Raptor Block 1 (unofficial designation), SpaceX cumulatively test-fired dev Raptors for far more than 1200 seconds over the course of more than 24 months. It’s unclear how extensively the company’s engineers will be able to test the pathfinder hardware built on the back of that extensive test program. Nominally, one would expect hundreds or thousands of seconds of additional testing to properly characterize the design and production of a brand-new, optimized engine like Raptor while primarily ensuring that it performs within engineering specifications.

Knowing CEO Elon Musk’s self-admitted tendency to push for impractical deadlines and schedules that often appear rushed for the sake of rushing, it’s not impossible that the first Raptors could find themselves installed on the Boca Chica-based Starhopper test article after Merlin-esque acceptance testing and nothing more. For M1D and MVac, acceptance testing usually takes the shape of a full-duration burn with throttle and gimbal activity to closely simulate a true Falcon 9 or Heavy launch. For the 200-ton Raptor now in Texas, comparable acceptance testing could take a variety of forms, ranging from short Starhopper-relevant burns (10-60 seconds for small hops) to simulating conditions during a Super Heavy launch and landing or even a 6 or 7-minute orbital insertion burn indicative of the performance needed for Starship.

Depending on the interplay between the route SpaceX engineers would likely prefer and the Starhopper test schedule executives and managers might want, this first Raptor engine (and two more soon to follow) could be installed on Starhopper anywhere from a few weeks to several months from now. Elon Musk indicated in early January that he expected hop tests to occur 4-8 weeks later. Shortly afterward, unplanned damage to the craft’s nose cone pushed the debut back “a few weeks”.

Aiming for 4 weeks, which probably means 8 weeks, due to unforeseen issues

— Elon Musk (@elonmusk) January 5, 2019

I just heard. 50 mph winds broke the mooring blocks late last night & fairing was blown over. Will take a few weeks to repair.

— Elon Musk (@elonmusk) January 23, 2019

Realistically, hop tests should thus be expected to begin no earlier than (NET) 8-12 weeks from the first week of January, translating to NET March or April. This would give SpaceX propulsion engineers a decent amount of time to gain at least a few hundred (or maybe 1000+) seconds of experience operating the newest and most advanced iteration of Raptor.
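The schedule arithmetic above can be sketched in a few lines. This is a rough illustration only: it assumes the window is counted from January 5, 2019 (the date of Musk's "4 weeks, which probably means 8 weeks" tweet), and the function name is hypothetical.

```python
from datetime import date, timedelta

# Assumption: count from the January 5, 2019 tweet; the article only says
# "the first week of January", so the exact anchor day is a guess.
ANCHOR = date(2019, 1, 5)


def net_window(anchor: date, min_weeks: int, max_weeks: int) -> tuple[date, date]:
    """Return the earliest and latest dates of a no-earlier-than window."""
    return anchor + timedelta(weeks=min_weeks), anchor + timedelta(weeks=max_weeks)


earliest, latest = net_window(ANCHOR, 8, 12)
print(f"Hop tests NET {earliest.isoformat()} to {latest.isoformat()}")
```

With this anchor the window runs from March 2 to March 30, 2019. Counting from the end of that first week (January 7) instead moves the late end to April 1, which is consistent with the "March or April" estimate.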

News

Tesla stuns with another FSD approval in Europe, its second in two days

Tesla has gained yet another approval for its Full Self-Driving suite in Europe, its second in two days and its fifth overall.

Belgium will be the latest country to allow Tesla owners to utilize FSD on public roads in Europe, joining a quickly growing list that started with the Netherlands, Lithuania, and Estonia.

On Tuesday, Denmark announced its approval of the FSD suite, which has now been followed by Belgium just one day later.

The country’s Minister of Mobility, Annick De Ridder, announced the approval on her X account, stating that she had just signed the approval of Tesla FSD. It now goes to the country’s homologation department for the last step of the approval process.

The @Tesla community has long been keeping a finger on the pulse here regarding the approval of FSD technology on our Flemish and Belgian roads.

Out of appreciation for your unflagging interest (and encouragement 😉), you get the scoop here: the approval I have just… pic.twitter.com/Yrps4OHTj8

— Annick De Ridder (@AnnickDeRidder) June 10, 2026

The Belgian approval matters because it shows how quickly European countries could greenlight the FSD suite one after another. Approvals are already arriving at a relatively quick pace, which is a great sign.

Perhaps the next big development that could come from FSD approvals in Europe is an approval from a country like England, Italy, France, Spain, or Germany. It would be something to see how FSD would perform in a major European metro, such as London, Barcelona, Madrid, Paris, Rome, or Berlin.

Getting Full Self-Driving in Spain and England will be such huge milestones for Tesla. I am so excited to see how FSD performs in Madrid, Barcelona, and London, specifically.

The ultimate test will always be Mumbai or New Delhi. Excited for India’s eventual approval! https://t.co/paw9Ch1qmL pic.twitter.com/9RdDERVSSJ

— TESLARATI (@Teslarati) June 9, 2026

Full Self-Driving does an excellent job of navigating major U.S. cities like New York and Los Angeles, but approval in other high-profile international cities would be a true milestone for Tesla, which can simply enable any vehicle in its customer-owned fleet to run FSD once it has the correct approvals.

Elon Musk

SpaceX’s Elon Musk relieves worries about orbital data centers

SpaceX CEO Elon Musk recently addressed worries about orbital data centers and launching large numbers of satellites into space, as some voiced concerns about crowding.

Musk’s SpaceX plans to meet the growing need for data centers by launching them into space instead of taking up valuable real estate on Earth. The plan has been a major point of SpaceX’s future, including its looming IPO, which could be the largest ever.

In a recent interview filmed at SpaceX’s Starlink terminal factory in Bastrop, Texas, Elon Musk directly addressed concerns that deploying large numbers of AI satellites for orbital data centers could crowd Earth’s orbit. His message was straightforward and reassuring: space is vast beyond human intuition.

“Space is really big,” Musk said. “It’s not like space is gonna get crowded. Space is enormous. If you actually look at it relative to the Earth, the satellites are so tiny you can’t even see them.” He emphasized that a satellite only appears large when zoomed in on; from a planetary perspective, satellites are minuscule specks.

Elon on concerns that AI satellites will crowd space:

“Space is really big. It’s not like space is gonna get crowded. Space is enormous. If you actually look at it relative to the earth, the satellites are so tiny you can’t even see them.” https://t.co/Mvr7NpL25Q pic.twitter.com/5Fi629Rii7

— Sawyer Merritt (@SawyerMerritt) June 8, 2026

Musk pointed to SpaceX’s real-world experience operating roughly 10,000 Starlink satellites as evidence that large constellations can be managed safely. “We’ve got a pretty good idea of how to operate just really large constellations and do it safely,” he noted. SpaceX remains the only operator with meaningful experience at this scale, giving the company unique insight into tight orbital packing without compromising safety.

The discussion highlighted SpaceX’s plans for “AI1” satellites—essentially orbiting racks of AI compute powered by massive solar arrays and cooled via radiative panels in space’s vacuum.

These satellites leverage proven Starlink V3 technology, making them simpler to design than communications satellites. A first-generation unit targets around 150 kW peak power, with a 70-meter wingspan for solar panels and radiators. Laser links will connect them to each other and the Starlink network, delivering low-latency access (on the order of a few milliseconds from low-Earth orbit).
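As a rough sanity check on the "few milliseconds" latency figure, the light travel time from low-Earth orbit can be estimated directly. This is a back-of-the-envelope sketch: the 550 km altitude is an illustrative assumption (the article gives no orbit for these satellites), and the estimate covers only straight-down propagation in vacuum, ignoring routing, processing, and slant paths.

```python
# Estimate signal propagation delay between the ground and a satellite
# directly overhead.
# Assumption: 550 km altitude, chosen only for illustration.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
ASSUMED_ALTITUDE_KM = 550.0


def one_way_delay_ms(altitude_km: float) -> float:
    """Return the one-way light travel time in milliseconds."""
    distance_m = altitude_km * 1000.0
    return distance_m / SPEED_OF_LIGHT_M_PER_S * 1000.0


one_way = one_way_delay_ms(ASSUMED_ALTITUDE_KM)
round_trip = 2.0 * one_way

print(f"One-way delay: {one_way:.2f} ms, round trip: {round_trip:.2f} ms")
```

Under these assumptions the one-way delay is a little under 2 ms and the round trip a little under 4 ms, in line with the order of magnitude quoted above. Real-world latency would be higher once network hops are included.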

Musk framed orbital data centers as a practical solution to Earth’s constraints on AI growth. Ground-based facilities face power shortages, water demands for cooling, and grid limitations. In space, constant sunlight (no day-night cycle), vacuum radiative cooling, and abundant solar energy offer clear advantages.

Production will ramp up at an expanded “Gigasat” factory in Bastrop, with solar manufacturing already underway and full AI satellite output expected at reasonable volume by the end of 2027. Starship’s rapid, high-volume launch capability, aiming for multiple flights per hour, will make massive deployment feasible.

Critics sometimes raise risks like space debris or Kessler syndrome, but Musk’s response underscores scale: even a million satellites would represent an imperceptible fraction of available orbital volume when viewed against Earth’s size. SpaceX’s automated collision avoidance and deorbiting designs for Starlink further mitigate concerns.

This vision ties into broader ambitions. Musk sees orbital AI compute as a step toward harnessing more of the Sun’s energy, advancing humanity on the Kardashev scale from a Type 0 civilization toward Type 1 and eventually Type 2. By moving power-hungry data centers off-planet, SpaceX aims to unlock orders-of-magnitude more compute while preserving Earth’s resources.

Musk’s comments should ease public anxiety. With proven operational expertise, incremental engineering, and the immensity of space itself, orbital data centers represent not overcrowding, but smart expansion into the final frontier.

Investor's Corner

Tesla Full Self-Driving hits Level 4? One analyst says yes

Tesla Full Self-Driving (Supervised) is currently classified as a Level 2 suite in the company’s passenger cars. Its fast-moving Robotaxi platform, meanwhile, has been recognized as a Level 4 ride-sharing program by the State of Texas following Tesla’s recent self-certification.

However, a Wall Street analyst is arguing that Tesla (NASDAQ: TSLA) has effectively achieved Level 4 autonomy in most conditions in all of its vehicles, drawing on personal experience and data released by the company.

Alex Potter of Piper Sandler said in a note to investors on Wednesday that “Tesla has solved the self-driving puzzle,” pointing to decisions to offer insurance discounts for FSD-enabled policies as a signal of confidence, which is backed up by stellar safety records compared to human driving.

Investing.com initially reported on Potter’s new note.

Additionally, Potter looks at the recent start of Cybercab production at Giga Texas as a potential indication that Tesla is ready to offer some level of unsupervised driving at least in the near future. The Cybercab has no steering wheel or pedals, completely eliminating the ability for human input.

He also sees Tesla’s allocation of “several hundred million USD (if not $1B+)” as a sign of internal confidence, since it would be tough to set aside that much capital for a project the company does not see as relatively near-term.

Looking ahead, and especially because Cybercab has no human controls, it would make sense that Tesla is at least close to self-driving. How close is another question.

Tesla has routinely teased that unsupervised FSD is close, but it still feels as if the company has more capability to roll out, including unsupervised parking features known as “Banish,” more consistent self-driving performance across regions, and other improvements.

That is not to say that Tesla FSD is not already impressive. It has completed coast-to-coast drives across the United States and Canada, it routinely takes the stress out of driving for most people, and Tesla Safety Reports indicate that it is involved in accidents less frequently than human drivers.

🚨 These are the first-ever FSD safety statistics out of the Netherlands, showing it was over 3.5x safer than human driving on Dutch roads.

The most recent numbers out of Tesla for North America show:

-Over 5.5 million miles between accidents for Teslas using FSD

-660k miles… https://t.co/XKlRzgSGEh pic.twitter.com/HX6kzh0ZKc

— TESLARATI (@Teslarati) June 9, 2026

Even Potter believes it is capable, as he used it to go from Missoula, Montana, to Minneapolis, Minnesota, back in April.

“There’s no substitute for personal experience,” he wrote.