News

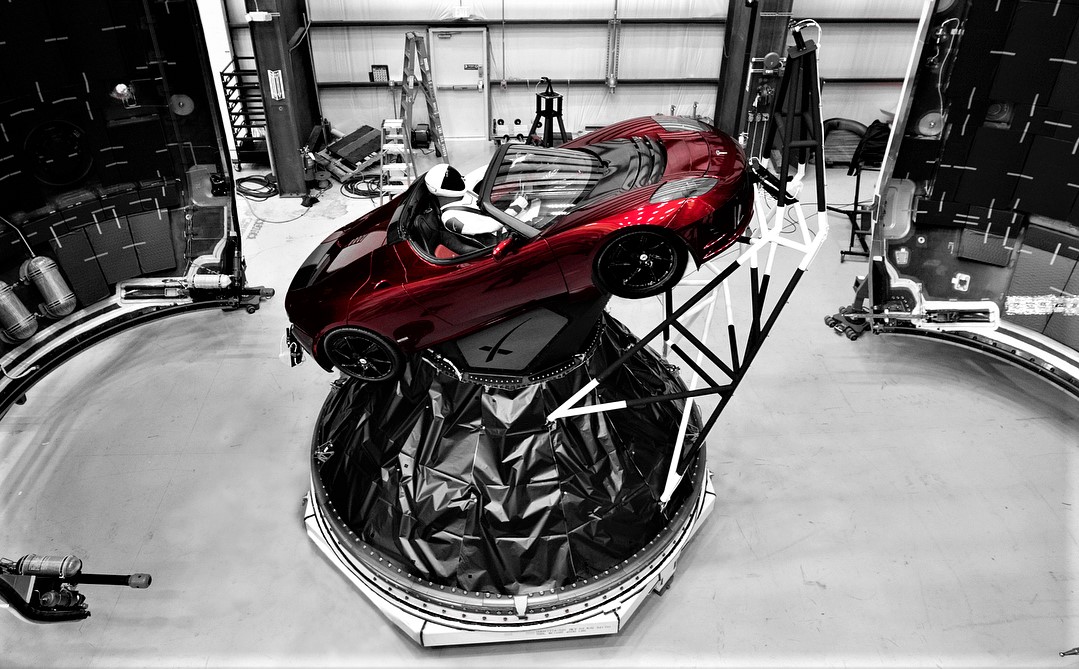

Tesla Roadster and ‘friends’ make history in newly-published log of 57k+ human objects in space

When the Tesla Roadster and its Starman occupant entered space aboard Falcon Heavy’s maiden voyage in 2018, it joined the ranks of one astronomer’s impressive database of human-made objects that have left Earth: The General Catalog of Artificial Space Objects (GCAT). It’s the most comprehensive collection of space object data available to the public, and its author recently published it in full for open-source use.

Jonathan McDowell, currently with the Harvard-Smithsonian Center for Astrophysics, began the project that became GCAT about 40 years ago, during his Apollo-inspired childhood.

“It was hard for me growing up in England to get details about space because the media there weren’t as interested in it as the U.S. media, so in a slightly obsessive way I started making a list of rocket launches… Now I have the best list,” McDowell told VICE in recently published comments. The scarcity of information in his younger days was only the first of the challenges the astronomer took on for the project. As he told VICE, McDowell also traveled to space agency sites abroad to obtain their old launch lists and even learned Russian to translate that country’s space object data.

Although McDowell has been collecting his catalog data for decades, the push to finally put all of his work online came from more recent events. The risks posed by COVID-19, and the prospect of “imminent death,” threatened the database’s purpose. “There’s no point if it dies with me,” he told VICE. Publishing GCAT had always been part of his plans; the pandemic pushed it to the top of McDowell’s personal bucket list.

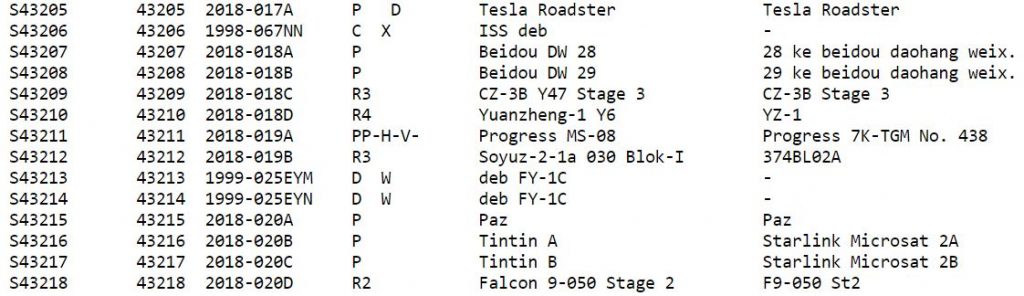

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

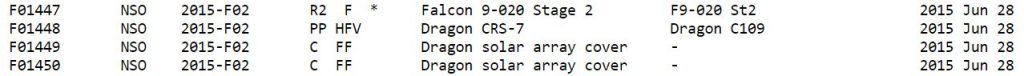

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

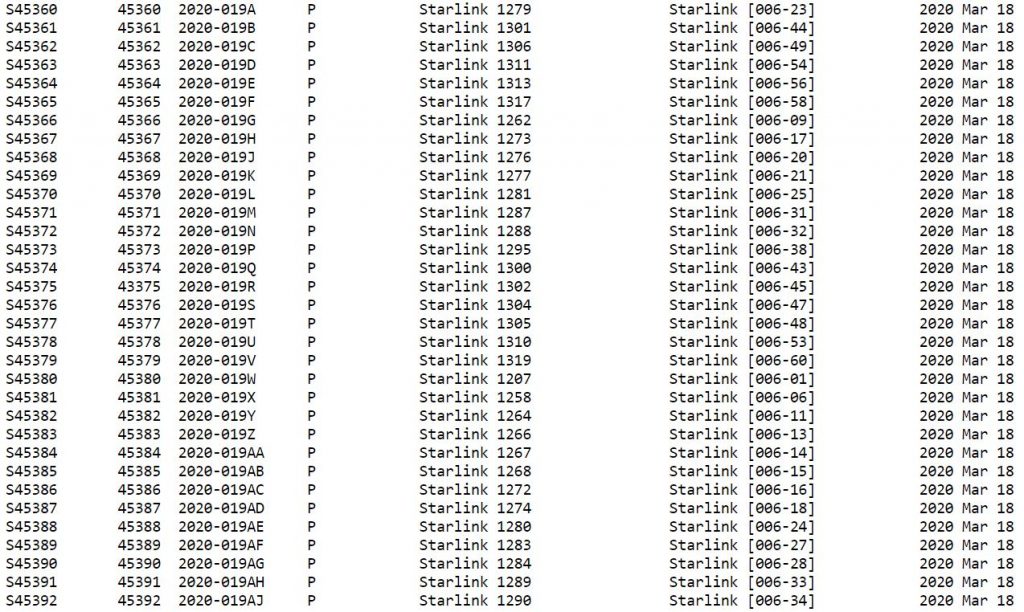

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

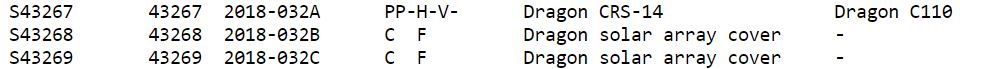

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

So, what exactly might one use GCAT for? McDowell offered his own suggestions, including determining how many working satellites are currently in space. Since the data comes as tab-delimited files that are easy to load into sorting software, one could also look at the amount of debris produced over the years to get a general picture of how active spaceflight operations were in the past and how they are progressing. Each entry includes plenty of information about the object’s origin and owner for this kind of research.
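As a rough illustration of that kind of sorting, here is a minimal Python sketch that tallies the rows of a tab-delimited file by any column, using only the standard library. The column names (`ID`, `Name`, `LaunchYear`, `Status`), the status labels, and the two “Example” rows are hypothetical placeholders, not GCAT’s actual schema or data; the catalog’s own documentation at planet4589.org describes the real file layout.

```python
import csv
import io
from collections import Counter

# Hypothetical excerpt in the style of a tab-delimited catalog file.
# Column names, status labels, and the "Example" rows are illustrative
# assumptions, not GCAT's documented schema or data.
SAMPLE_TSV = (
    "ID\tName\tLaunchYear\tStatus\n"
    "00001\tSputnik 1\t1957\tReentered\n"
    "43205\tTesla Roadster\t2018\tHeliocentric\n"
    "99998\tExample satellite A\t2020\tOperational\n"
    "99999\tExample debris B\t2015\tReentered\n"
)


def count_by_column(tsv_text: str, column: str) -> Counter:
    """Tally rows of tab-delimited text by the value in one column."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    return Counter(row[column] for row in reader)


if __name__ == "__main__":
    # How many objects are marked operational in this toy sample?
    status_counts = count_by_column(SAMPLE_TSV, "Status")
    print(status_counts["Operational"])

    # Objects per launch year, e.g. for charting activity over time.
    print(sorted(count_by_column(SAMPLE_TSV, "LaunchYear").items()))
```

Swapping `"Status"` for `"LaunchYear"` turns the same function into a per-year tally, the kind of count one might chart to see how debris or failed launches changed over time.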

One of the GCAT data sets tracks objects from failed launches that would otherwise have reached orbit. For example, the 52 items from failed launch attempts in 1958 hint at how intense the space race between the US and the Soviet Union was at the time. The data can also support general historical inquiry. For instance, Sputnik 1, launched by the Soviet Union on October 4, 1957, is object #00001; the Eagle lander still on the Moon from Apollo 11’s mission is object #04041; and the Tesla Roadster is object #43205.

Some of the data adds historical color, such as the listing of tools lost during on-orbit construction of the Soviet Union’s Mir space station in 1986. Reminders of major spaceflight misfortunes are also included, such as the 1986 Challenger Space Shuttle explosion and the failure of SpaceX’s CRS-7 ISS resupply mission in 2015.

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

- Data from GCAT (J. McDowell, planet4589.org/space/gcat)

Because GCAT includes both functional hardware and notorious bits of space junk, logged over decades of data digging, the Tesla Roadster and its 57,000+ “friends” are poised to support serious research now and far into the future.

“My audience is the historian 1,000 years from now,” McDowell explained. “I’m imagining that 1,000 years from now there will be more people living off Earth than on, and that they will look back to this moment in history as critically important.” Star Trek fans will recognize how often this kind of record keeping proves relevant to future humans (away mission, anyone?). Perhaps that kind of science fiction storyline will become reality, as so many of SpaceX’s achievements already have.

McDowell is also working on a separate project to track deep-space objects beyond Earth orbit. Will space debris take center stage around Mars and beyond as it does around our own planet? Following that progress in one comprehensive database will be an interesting way to show just how far humans have come since object #00001.

Investor's Corner

SpaceX IPO set to provide massive $11.6B windfall for teacher pension plan

The Ontario Teachers’ Pension Plan (OTPP) stands to reap one of the most extraordinary returns in pension fund history thanks to a bold 2019 investment in SpaceX.

According to a recent report from The Globe and Mail, the Toronto-based fund invested roughly $300 million CAD (~$220 million USD at the time) in Elon Musk’s space company as its inaugural deal through the Teachers’ Innovation Platform.

At the $1.75 trillion valuation SpaceX is targeting for its IPO, expected to debut on Nasdaq in mid-June under the ticker $SPCX, that stake could now be worth up to $11.6 billion USD. This would represent a roughly 50x return and easily become OTPP’s most successful single investment ever.

The fund manages $279 billion in assets for approximately 346,000 working and retired teachers in Ontario, potentially delivering an average boost of around $33,500 per member if fully realized.
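The headline figures can be checked with simple division, using only the numbers quoted above (the roughly $220 million USD cost basis, the $11.6 billion upper-end stake value, and about 346,000 members). The per-member figure assumes the stake’s value were spread evenly across members, an illustrative simplification rather than a description of how a pension plan actually distributes gains:

$$
\text{return multiple} \approx \frac{\$11.6\ \text{billion}}{\$0.22\ \text{billion}} \approx 52.7
$$

$$
\text{stake value per member} \approx \frac{\$11{,}600{,}000{,}000}{346{,}000} \approx \$33{,}526
$$

Both results line up with the “roughly 50x” and “around $33,500” figures.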

SpaceX has filed its S-1 and plans to price shares at $135 each, aiming to raise a record $75 billion in what would be the largest IPO in history, surpassing Saudi Aramco’s. The company reported $18.67 billion in revenue for 2025, driven primarily by Starlink satellite internet growth and NASA contracts, though it continues to post significant losses tied to ambitious R&D in Starship and AI initiatives.

Key factors to watch going forward include:

- Starlink Expansion: The satellite broadband service is scaling rapidly, targeting global connectivity, especially in underserved rural and remote areas. This segment offers massive recurring revenue potential as subscriber numbers climb.

- Starship and Reusability Leadership: SpaceX’s fully reusable Starship aims to slash launch costs dramatically, enabling frequent missions, Mars ambitions, and lucrative government/defense contracts. Success here could unlock exponential growth.

- AI and Diversification: Recent moves, including ties to xAI, position SpaceX in high-growth AI infrastructure, broadening beyond traditional aerospace.

- Valuation Scrutiny: While the $1.75 trillion target excites investors, analysts such as Morningstar value the company closer to $780 billion, citing high multiples (around 90x trailing revenue) and execution risks. A 180-day lockup period will prevent early investors like OTPP from selling immediately after the IPO.

The irony has not been lost on observers. Ontario’s government previously canceled a Starlink rural internet contract amid political tensions involving Musk, yet the pension fund’s savvy investment, made when SpaceX was valued at around $33 billion to $36 billion and Starlink was nascent, delivers outsized gains independent of politics.

For OTPP, this windfall strengthens its already solid 111 percent funding ratio and underscores the value of patient, innovation-focused capital allocation.

For SpaceX, the IPO marks a new chapter: greater transparency, access to public markets for talent retention and growth capital, and heightened pressure to deliver on its multi-planetary vision.

All eyes are fixed on whether SpaceX can justify its lofty valuation through sustained execution. For Ontario teachers, the returns are already stellar, but SpaceX, like other Musk companies before it, has plenty to prove. Perhaps the ideal person for the job is already at the helm, aiming to take the company to a massive valuation.

News

Tesla skeptics will hate what this new reliability study says

In a notable shift for electric vehicle perceptions, Tesla has emerged as a standout performer in the latest iSeeCars longevity study, which analyzed over 174 million used vehicles.

The data reveals that Tesla models have a 4.6 percent chance of reaching 250,000 miles, nearly matching the industry average of 4.8 percent and tying for sixth place among 32 brands. This places Tesla ahead of many established names, including Subaru (2.3 percent, roughly half of Tesla’s rate), Nissan (2.4 percent), Mazda, BMW, Mercedes-Benz, and Porsche.

Toyota leads with an impressive 17.8 percent likelihood, followed by Lexus (12.8 percent), Honda, and Acura. Yet Tesla’s result stands out for a relatively young EV brand. Experts attribute this to the inherent simplicity of electric powertrains: fewer moving parts mean no oil changes, timing belts, or complex engine components that typically fail in internal combustion vehicles.

Fewer things to maintain means fewer things to break, and ultimately, fewer things to go wrong.

A Tesla is twice as likely to reach 250,000 miles as a Subaru
“No engine, no oil changes, no timing chains, no fuel injectors, and far fewer moving parts overall”
https://t.co/k8iJwbzrrp

— Tesla North America (@tesla_na) June 8, 2026

This design advantage helps Teslas defy unfounded skepticism about battery longevity and overall durability, two concerns that outside observers have long raised about the company without much proof.

The iSeeCars reliability ratings further bolster Tesla’s case. The Tesla Model S earns a strong 7.9/10 reliability score, ranking No. 1 of 35 in iSeeCars’ list of the most reliable electric cars. It boasts a predicted average lifespan of 154,419 miles (about 16.9 years) and a 21.9 percent chance of hitting 200,000 miles.

As a brand, Tesla also scores 7.9/10 overall, taking the top spot among electric vehicle manufacturers in several luxury and vehicle-segment categories.

Real-world examples reinforce the data. High-mileage Teslas, including Model S vehicles exceeding one million miles, demonstrate that EVs can endure when properly maintained. Owners report minimal mechanical issues beyond typical wear items such as tires and brakes, and regenerative braking often extends brake life.


This performance challenges narratives around EV reliability, especially amid mixed reports from other sources like Consumer Reports or regional inspections. iSeeCars’ massive dataset emphasizes long-term durability over short-term defect rates, painting Tesla as a leader in sustainable, high-mileage ownership.

For buyers prioritizing longevity and low maintenance, Tesla’s results signal strong value. No brand is flawless, and factors such as driving habits, climate, and software updates matter, but the numbers suggest Tesla belongs among the elite for those seeking vehicles built to last.

As EV adoption grows, this iSeeCars data underscores Tesla’s engineering edge in creating enduring, future-proof automobiles.

DIY

Tesla owner fixes common feature complaint with crafty DIY retrofit

Tesla owners have long griped about the wireless phone charger in the Model Y and other vehicles. It often turns smartphones into miniature ovens rather than reliably topping them up.

Software engineer and Model Y owner Michał Gapiński tackled this issue head-on with a clever DIY upgrade, swapping in the cooled wireless charging pad from the China-made Model YL in place of the one that came standard in his vehicle.

There are several key differences between the U.S.-built Model Y’s wireless charging pad and the one that Tesla has been installing in the Model YL. The one installed in U.S.-built vehicles lacks active cooling and relies on basic heat dissipation, leading to rapid temperature buildup during charging. In contrast, the Model YL integrates a small fan for active cooling.

Will it fit? Fingers crossed, I want a first YL charger deployed in the regular juniper pic.twitter.com/wWDqSNFVkW

— Michał Gapiński (@mikegapinski) June 2, 2026

This design maintains lower temperatures even in warm ambient conditions, though it does not support faster Qi2 charging on iPhones. The connector matches exactly, making physical swaps feasible on compatible consoles, but coding is required to enable full functionality.

Owners in the U.S. have complained about the wireless charging pad, with many reporting that overheating is fairly common. Many say their phones display overheating warnings within 20 to 30 minutes on the pad, which halts charging and essentially turns the pad into a fancy place to rest your phone.

Many owners have opted to simply plug their phones into a charging cable. Tesla has acknowledged the problem by releasing several workarounds, including a relatively new setting that turns off wireless charging so the pad acts only as a phone holder while driving.

Gapiński said he sourced the cooled pad from China for under $200.

He removed the existing console charger, swapped in the new unit (confirming that the connector fit perfectly), and dealt with the trim differences. Because the relevant configuration parameter is not fully locked down, he was able to enable the pad through custom coding outside Tesla’s official Toolbox software.

Connector is identical, she fits, now time to code it. https://t.co/Y9idgDrpCq pic.twitter.com/uwwgq6blg7

— Michał Gapiński (@mikegapinski) June 2, 2026

The fan runs quietly, and its sound blends in with the air conditioning and seat cooling. He reported that the installation was effective and the pad worked perfectly, even keeping the phone at just 86 degrees Fahrenheit. By contrast, the stock pad often pushes a phone’s temperature well above 100 degrees, sometimes leaving it hot to the touch.

The retrofit worked, no issues. First Model Y with a cooled wireless charger! No QI2/faster charging on the iPhone but it does not boil the phone even when it is 30 degrees outside.

The fan kicks in, it is not audible especially with the air conditioning and seat cooling. The… https://t.co/JOyR8Tb1Yo pic.twitter.com/kJcYhQIlYq

— Michał Gapiński (@mikegapinski) June 2, 2026

This retrofit highlights an elegant, owner-driven solution to a factory shortcoming. Tesla is expected to begin installing the cooled charging pads in new U.S. cars soon, and it will hopefully offer a retrofit service or kit for existing owners who want a charging pad that works effectively.

For those who love to tinker, it’s an accessible upgrade, proving that innovation thrives beyond the production line.