News

SpaceX’s first orbital-class Starship and Super Heavy to return to launch pad next week

CEO Elon Musk says that SpaceX could return the first orbital-class Starship prototype and its Super Heavy booster to the launch site after rolling the rockets back to the factory for finishing steps.

In response to a video of Super Heavy Booster 4 (B4) returning to the build site, Musk rather specifically stated that both Booster 4 and Starship 20 (S20) will return to the orbital launch pad on Monday, August 16th. SpaceX returned Ship 20 to its ‘high bay’ vertical integration facility mere hours after the Starship was stacked atop a Super Heavy booster (B4) for the first time ever on August 6th. For unknown reasons, perhaps high winds, Booster 4 spent another five days at the pad before SpaceX finally lifted it off the orbital launch mount and rolled it back to the high bay, where it took Ship 20’s place on August 11th.

Almost immediately after S20’s August 6th return, its six Raptor engines were removed to make way for an engine-less proof test campaign that Musk has now implied could start as early as next Monday. Mirroring S20, SpaceX also began uninstalling Super Heavy Booster 4’s 29 Raptor engines the same day it returned to the high bay.

Around 12 hours after the process began, SpaceX appeared to have removed 14 (just shy of half) of Super Heavy B4’s Raptor engines – a pace almost as spectacular as their 12-18 hour installation a bit less than two weeks prior. Aside from making engine removal dramatically easier, Musk says that SpaceX moved Ship 20 and Booster 4 back to the build site to expedite some minor final integration work – namely “small plumbing and wiring.”

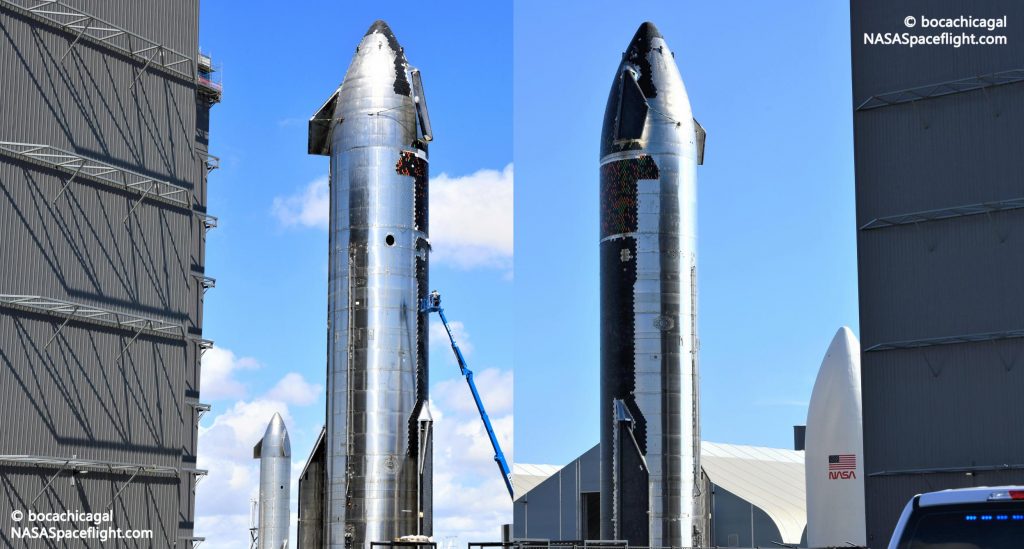

However, aside from Raptor removal, the most obvious and significant work ongoing since the pair’s return to the high bay is the process of inspecting Starship S20’s heat shield and repairing or replacing broken, chipped, and loose tiles. Not long after Ship 20 arrived back at the build site, workers in boom lifts began a seemingly arduous process of inspecting the Starship’s nose heat shield and marking – with colored tape – hundreds of tiles with cracks, chips, or other less visible issues.

After several days of inspections and hundreds of tiles marked, SpaceX finally began removing off-nominal tiles early on August 12th. According to NASASpaceflight.com, that removal process is not particularly easy and can require power tools to cut tiles off their embedded mounting frames. Given the force required, technicians almost certainly also need to take care not to damage adjacent tiles, or a single minor misstep could quickly snowball into a much larger repair. Nevertheless, a small team of SpaceX technicians seemingly managed to remove at least several dozen (and maybe 100+) broken tiles in a few hours.

Next, those removed tiles will need to be replaced. However, it remains to be seen whether SpaceX will fully complete Starship S20’s “98% done” heat shield before sending the ship back to the launch site for proof and static fire testing. To a degree, putting Starship through a gauntlet of ground tests with a full heat shield installed would be an excellent test of how well its thermal protection system withstands major stresses: the frost-covered steel skin expanding and contracting during fueling, as well as violent vibrations during static fires.

On the other hand, Starship S20’s heat shield is already so close to completion that testing the vehicle as soon as possible, rather than waiting for the last few tiles, would likely sacrifice only a marginal amount of test value while saving time.

To an extent, Booster 4 is a much simpler case, as Super Heavy needs no major thermal protection. However, according to Musk, some or all of Super Heavy’s 29 Raptor engines will need their own miniature thermal protection system, perhaps a flexible blanket-like enclosure not unlike what SpaceX uses to partially protect Falcon booster engines during reentry. It remains to be seen whether Booster 4 will return to the launch site without engines for cryogenic proof testing or whether SpaceX will install heat-shielded Raptors before starting the first flightworthy Super Heavy’s first test campaign.

News

Tesla Full Self-Driving is taking over Europe: fourth country gets FSD approval

Tesla has secured regulatory approval for its Full Self-Driving (Supervised) system in Denmark, marking a significant step in the technology’s expansion across Europe.

Announced on June 9, the approval positions Denmark as the fourth European country to greenlight FSD Supervised, following the Netherlands, Lithuania, and Estonia.

Rollout to Danish vehicle owners is expected to begin soon, the company said.

The Danish Road Traffic Authority granted provisional approval after reviewing the original type approval issued by the Dutch vehicle authority (RDW) on April 10, 2026.

FSD Supervised now approved in Denmark 🇩🇰

Rollout will begin soon pic.twitter.com/Xpxwcme10k

— Tesla Europe, Middle East & Africa (@teslaeurope) June 9, 2026

This national recognition approach allows individual countries to bypass slower EU-wide harmonization processes, accelerating deployment. Lithuania activated the system on May 20, with Estonia following on May 29, demonstrating a rapid domino effect across the region.

FSD Supervised enables advanced driver assistance capabilities, including automatic steering, acceleration, braking, lane changes, and navigation through complex urban and rural environments. The system is designed for supervised use, as its name states, meaning drivers must remain attentive and ready to intervene at all times.

It adapts to diverse conditions, such as rain, night driving, and varied road types common in Denmark, but it is important to note that the tech is not fully autonomous.

Only a few months after FSD Supervised launched in Europe, with its first approval coming in the Netherlands, Tesla is now highlighting the program’s successful start.

Early data from the Netherlands points to strong safety performance. Between April 10 and June 5, vehicles using FSD Supervised recorded a collision rate 3.5 times lower than manual driving overall, with zero crashes reported on highways across more than 16.6 million kilometers driven.

These results underscore the potential of the technology to enhance road safety when properly supervised.

Tesla’s European push builds on its global footprint, now reaching 12 countries with FSD Supervised availability. The software receives continuous over-the-air updates, improving performance based on real-world data from millions of miles.

In Denmark, owners with compatible hardware—particularly newer vehicles equipped with Hardware 4 (HW4)—are anticipated to gain access first, though exact timelines and eligibility details will be confirmed during rollout.

This approval reflects growing regulatory confidence in supervised autonomy across Europe. As more nations recognize the Dutch certification, Tesla continues to demonstrate how its AI-driven approach can navigate real-world driving scenarios effectively. Denmark’s addition strengthens Tesla’s position in the region, paving the way for broader adoption on a continent that has been surprisingly slow to embrace the technology.

With FSD Supervised now approved in four European markets in just two months, the technology is steadily advancing toward wider availability. Tesla aims to refine the system further through ongoing data collection and software iterations, supporting its vision for safer and more efficient transportation.

News

Tesla revises FSD transfer policy on new Cybertruck trim, causing cancellations

Tesla has apparently revised the Full Self-Driving transfer policy it previously listed for the newest All-Wheel-Drive Cybertruck, which the company launched earlier this year at a bargain price of just $59,000.

After initially stating that customers who bought the pickup would be able to transfer FSD purchases, Tesla recently changed the language in those terms and conditions to reflect that this would no longer be the case.


The adjustment in terminology has caused a handful of orderers to cancel their reservations due to the loss of FSD transfer:

Just cancelled my 59k CT order today. My screenshot from that day of order (feb 20th) clearly shows that it would be eligible.

Terms were retroactively modified. Our 2020 Y and 2023 S are just fine for now. pic.twitter.com/D9PFnId1B4

— Ryan Scanlan 👥 (@Xenius) June 8, 2026

Tesla had said that orders for the new Cybertruck AWD must be placed by March 31, 2026, to qualify for the FSD transfer. The document from earlier this year states only that buyers “may qualify” for the transfer program, but it does explicitly name the March 31 deadline.

Additionally, Tesla Delivery Advisors reached out to some orderers of the AWD Cybertruck, who were told there was “an update to the eligibility of the Full Self-Driving (Supervised) transfer.” Tesla stated they could:

- proceed without the transfer;

- upgrade to a Premium or Cyberbeast trim and request an FSD transfer; or

- cancel the order and receive a refund of the $250 order fee.

Tesla’s reversal on these terms will undoubtedly lead some of those who ordered this truck to cancel. They could instead pay $99 per month for an FSD subscription, which is now the only option available, but for owners who bought the suite outright on another vehicle and were told they could transfer it, having that promise withdrawn is a tough pill to swallow.

These moves were also made by Tesla just before deliveries were set to begin on the Cybertruck AWD configuration. Reservation holders have started receiving VINs for their trucks, and Tesla is preparing to hand over the first units.

It’s a disappointing move from Tesla that will undoubtedly make some of its fans who have bought the truck frustrated.

Elon Musk

Tesla tipped its hand at where Robotaxi is heading next

In the world of autonomous ride-hailing, there are only a handful of names, and each of those few companies tends to play its strategy close to the vest to keep the competition guessing. Tesla, on the other hand, has already tipped its hand at where it is headed next.

Tesla has signaled its next major push in the autonomous ride-hailing market by filing for an Autonomous Vehicle Network Company permit in Nevada (Docket 26-05015). Through Tesla Robotaxi, LLC, the company seeks approval to operate up to 5,000 robotaxis in Clark County, including high-traffic areas like Las Vegas and Henderson airports, within the first 12 months of launch.

This filing builds on Tesla’s earlier testing approvals from the Nevada DMV in September 2025 and preparations such as maintenance hubs in the Las Vegas area. Nevada represents a strategic expansion into a major tourist destination, where high visitor volumes could drive strong utilization and showcase the reliability of unsupervised autonomy to a broad audience.

We’d have to assume this means Tesla is targeting Las Vegas, and it’s a great move from a business perspective.

Vegas is such a melting pot of people from all around the country and the world. It will expose people from all corners of the globe to Tesla’s autonomy capabilities https://t.co/Qz3fQmhULF pic.twitter.com/Du5pj2RyWC

— TESLARATI (@Teslarati) June 6, 2026

Approval would mark a significant step toward commercial operations in a new state, following progress in Texas.

Tesla’s shareholder decks and earnings calls have clearly outlined these ambitions. In the Q4 2025 shareholder deck, the company listed planned Robotaxi coverage for the first half of 2026, explicitly naming Las Vegas alongside Phoenix, Miami, Orlando, and Tampa, with Dallas and Houston already advancing. Austin was noted as “ramping unsupervised,” while the Bay Area remained in safety-driver mode.

By Q1 2026, the deck updated statuses to reflect launches in Dallas and Houston, with “preparations underway” for the remaining cities, including Las Vegas. Paid Robotaxi miles nearly doubled sequentially in Q1, underscoring momentum even as broader timelines adjusted slightly for regulatory and operational readiness.

On earnings calls, CEO Elon Musk and executives have emphasized a phased rollout prioritizing safety. Unsupervised operations in Texas have shown strong results with no reported accidents or injuries in the program. Tesla continues groundwork in additional major U.S. metros through testing and permitting, positioning it to scale quickly once approvals clear.

This Nevada move aligns with Tesla’s vision of transforming from an EV maker into an AI and robotics leader. The Cybercab, which entered production at Giga Texas in April, is expected to eventually dominate the fleet, replacing many Model Y vehicles and driving down costs to enable affordable rides.

For investors and the industry, this signals Tesla’s intent to dominate key Sun Belt and tourist markets where weather, regulations, and demand favor rapid scaling. Success in Las Vegas could validate the model for denser urban and high-tourism environments, accelerating the shift toward a future where robotaxis generate meaningful revenue.

Las Vegas will also broaden the general public’s awareness of Tesla’s capabilities, letting visitors experience driverless ride-hailing from several companies during their time on The Strip.