Release notes for Tesla’s holiday software update were shared earlier this month, including the addition of the High Fidelity Park Assist feature. Some have since shared footage of the feature in action, showing how it works in parking lots and garages as users evaluate its usefulness when trying to park.

Tesla’s holiday update release notes were shared on X earlier this month, where the company first mentioned the new High Fidelity Park Assist mode. Tesla owner Ryan Hoffman, along with others, has shared videos of the feature on X, including one taken in a Supercharger lot on Saturday.

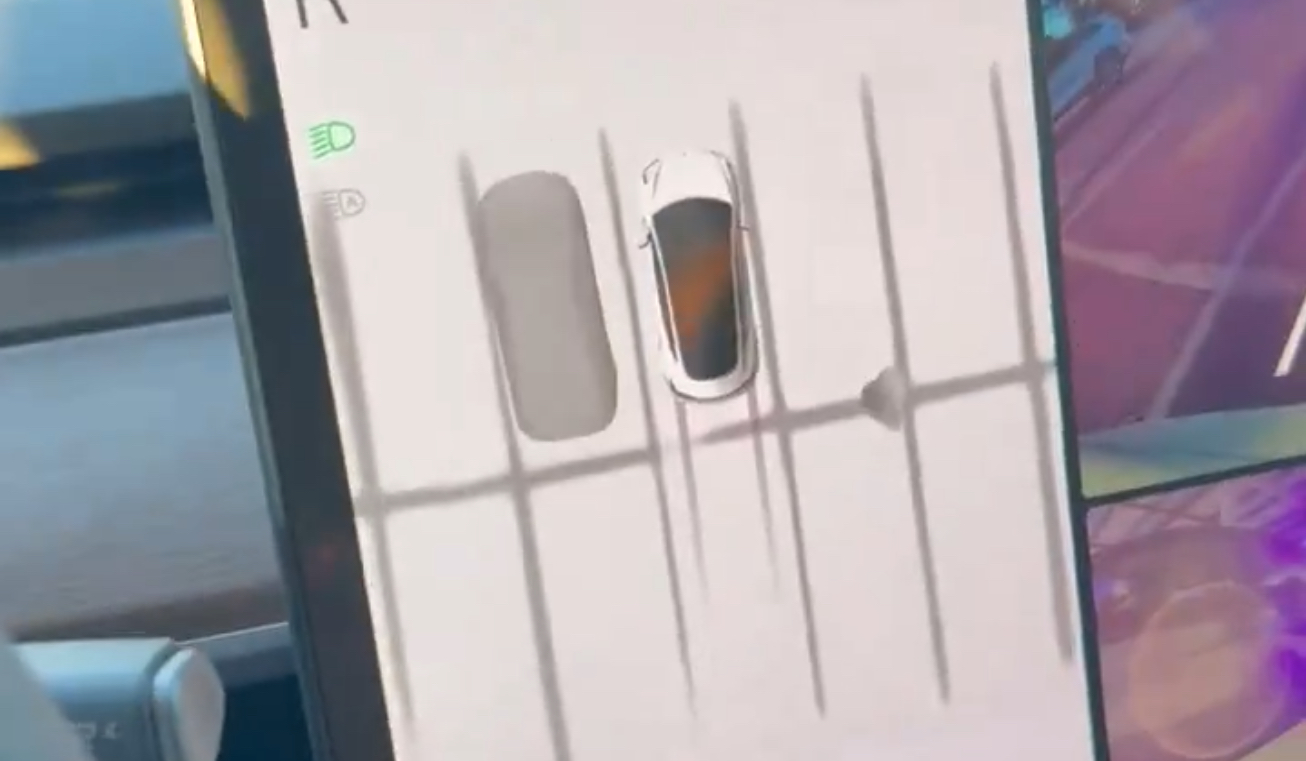

As can be seen in the video, the activated High Fidelity Park Assist mode shows a similar view to the highly requested Birds Eye 360-degree visualization. Hoffman says he drives a 2023 Model 3 RWD with HW3 and the Ryzen chip, meaning the car doesn’t have Ultrasonic Sensors (USS) and utilizes just Tesla Vision.

The video is taken at a Supercharger station, where Hoffman backs into a charging spot. Behind the visualization of the car, you can see an orange and yellow zone, signifying the vehicle’s close proximity to the charging pile. The top-down visualization shows that it recognizes the charging stalls as well as the parking lines on the ground, making it easy to back into the spot without the car ending up crooked.

He also shared a short video of what it looks like to back into the spot, including the actual rear camera’s video footage and the High Fidelity Park Assist view, calling the feature a “game changer” for parking.

Here’s a short video of what it looked like to reverse into the spot. The lines for each parking spot are very handy… it’s a game changer for parking. pic.twitter.com/47BDBbXw7z

— Ryan Hoffman (@tekmaven) December 16, 2023

Others have shared similar footage of High Fidelity Park Assist, and many have wondered exactly how the feature is activated in a parking lot. According to X user EVBaymax, the feature appears to engage when there is a clearly defined object in front of or around the car, and when users shift into reverse in a parking lot. Still, the current version seems to lack the ability to engage when driving forward, although it probably should have this ability.

In his videos of the feature, you can see the visualization switch on from the regular Autopilot view when reversing in the parking lot, and he also says that speeding up to around 15-20 mph makes the visualization disappear again. He goes on to call the feature “surprisingly accurate” and “definitely helpful,” and he also includes footage using it in a darker, in-garage environment.

Here’s what the switch from Autopilot visualizations to High-Fidelity Park Assist looks like in a parking lot pic.twitter.com/xtx8BylI0W

— kEV (@EVBaymax) December 16, 2023

You can see in the above videos that the feature still requires some prodding to work as desired, though once it’s engaged, it looks to be pretty useful. It also appears to fill the need behind the many requests for a Birds Eye 360-degree view, as the top-down visualizer makes it especially easy to see where the vehicle is in relation to other cars, parking lines, and more when parking.

Tesla CEO Elon Musk recently said that the company’s cars will eventually offer a convenient “Tap to Park” feature, in which the vehicle will identify open parking spots and let drivers select one on-screen, then get out while the car parks itself in the selected space. Still, many are awaiting updates like Tesla’s Actually Smart Summon, and the automaker only reintroduced its Vision-based Park Assist earlier this year.

What are your thoughts? Let me know at zach@teslarati.com, find me on X at @zacharyvisconti, or send your tips to us at tips@teslarati.com.

Tesla’s Q1 delivery figures show Elon Musk was right

Tesla reported its Q1 delivery figures on Thursday, and the figures, solid but unspectacular, show that CEO Elon Musk was right about what the company’s most important product and division would be.

We are seeing that shift occur in real time.

Tesla delivered 358,023 vehicles in the first quarter of 2026, according to the company’s official report released April 2.

The figure represents modest year-over-year growth of roughly 6 percent from Q1 2025’s 336,681 deliveries but a sharp sequential drop from Q4 2025’s 418,227. Production reached 408,386 vehicles, while energy storage deployments hit 8.8 GWh.
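For readers who want to check the math, the percentages above follow from the standard percent-change formula. Here is a minimal sketch using only the delivery counts quoted in this article; the function name `pct_change` and the variable names are illustrative, not anything Tesla publishes.

```python
def pct_change(new: float, old: float) -> float:
    """Return the percent change going from `old` to `new`."""
    return (new - old) / old * 100


# Delivery counts as quoted in the article.
q1_2026 = 358_023
q1_2025 = 336_681
q4_2025 = 418_227

yoy = pct_change(q1_2026, q1_2025)  # year-over-year change
qoq = pct_change(q1_2026, q4_2025)  # sequential (quarter-over-quarter) change

print(f"Year over year: {yoy:+.1f}%")
print(f"Quarter over quarter: {qoq:+.1f}%")
```

Run as written, this works out to about a 6.3 percent gain year over year and about a 14.4 percent drop from Q4 2025.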

On the surface, the numbers reflect a mature EV market facing competition, softening demand, and the loss of certain incentives. Yet they also quietly validate a prediction Elon Musk has repeated for years: Tesla’s traditional auto business is becoming far less central to the company’s future.

Musk has long argued that vehicles alone will not define Tesla’s value.

Optimus Will Be Tesla’s Big Thing

In September 2025, Musk stated bluntly on X that “~80% of Tesla’s value will be Optimus,” the company’s humanoid robot.

He has described Optimus as potentially “more significant than the vehicle business over time.” Those comments were not abstract futurism. In January 2026, during the Q4 2025 earnings call, Musk announced the end of Model S and X production, calling it an “honorable discharge.”

Those are the biggest factors.

~80% of Tesla’s value will be Optimus.

— Elon Musk (@elonmusk) September 1, 2025

The Fremont factory space, once dedicated to those flagship sedans, is being converted into an Optimus manufacturing line, with a long-term target of one million robots per year from that single facility alone.

The Q1 2026 numbers arrive at precisely the moment this strategic pivot is accelerating. Model 3 and Y deliveries totaled 341,893 units, while “other models” (including Cybertruck, Semi, and the final wave of S/X) added 16,130.

Growth is no longer explosive because Tesla is no longer chasing volume at all costs. Instead, the company is reallocating capital and factory floor space toward autonomy, energy storage, and robotics, businesses Musk believes will command far higher margins and enterprise value than incremental car sales.

Delivery Hits and Misses are Becoming Less Important

Wall Street’s pre-release consensus had pegged deliveries near 365,000. Coming in below that estimate might have rattled investors focused solely on automotive metrics. Yet Musk’s thesis has never been about maximizing quarterly vehicle shipments.

Tesla, he has insisted, “has never been valued strictly as a car company.”

The modest Q1 auto performance, paired with the deliberate wind-down of legacy programs and the ramp of Optimus, underscores that point. While EV demand stabilizes, Tesla is building the infrastructure for Robotaxis and humanoid robots that could dwarf today’s car business.

The future is here, and it is happening. It’s funny to think about how quickly Tesla was able to disrupt the traditional automotive business and force many car companies to show their hand. But just as fast as Tesla disrupted that, it is now moving to disrupt its own operation.

Cars, once the only recognizable and widely known division of Tesla, are now becoming a background effort, slowly being overtaken by the company’s ambitions to dominate AI, autonomy, and robotics for years to come.

Critics may still view the shift as risky or premature. But the Q1 figures, solid but unspectacular in the auto segment, illustrate exactly what Musk has been signaling: the era when Tesla’s valuation rose and fell with every Model Y delivery is ending.

The company’s long-term bet is on AI-driven products that turn vehicles into high-margin robotaxis and factories into robot foundries. Thursday’s delivery report came in slightly below Wall Street’s consensus, but it still showed that Elon Musk was right all along.

The car business, once everything, is quietly becoming an important piece of a much larger puzzle.

Tesla reports Q1 deliveries, missing expectations slightly

Tesla reported first-quarter 2026 deliveries today, slightly missing Wall Street analysts’ expectations as the company aims for a massive year in sales and in its other projects.

Tesla delivered 358,023 vehicles in the first quarter of 2026, marking a 6.3 percent increase from 336,681 vehicles in Q1 2025.

The figure, however, fell short of Wall Street’s consensus estimate of 365,645 units, reflecting ongoing headwinds in the global EV market. Production reached approximately 362,000 vehicles, with Model 3 and Model Y accounting for the vast majority. The results come as Tesla navigates softening demand, intensifying competition in China and Europe, and the expiration of key U.S. federal tax incentives.
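To put the miss in perspective, the gap between reported deliveries and the consensus estimate can be worked out directly. This is a rough sketch based only on the two figures quoted above; the variable names are illustrative.

```python
# Figures as quoted in the article.
deliveries = 358_023
consensus = 365_645

shortfall = consensus - deliveries           # vehicles below the estimate
shortfall_pct = shortfall / consensus * 100  # miss as a share of the estimate

print(f"Shortfall: {shortfall:,} vehicles ({shortfall_pct:.1f}% below consensus)")
```

That comes to 7,622 vehicles, or roughly 2.1 percent below the consensus figure, consistent with describing the miss as slight.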

🚨 BREAKING: Tesla delivered 358,023 vehicles in Q1 2026

Tesla also reported record energy deployments of 8.8 GWh

Wall Street had delivery consensus estimates of 365,645 pic.twitter.com/EVNAu5L3UT

— TESLARATI (@Teslarati) April 2, 2026

Energy storage deployments provided a bright spot, hitting a record 8.8 GWh in Q1. This underscores the accelerating momentum in Tesla’s energy segment, which has become a critical growth driver even as automotive volumes stabilize.

Year-over-year, the energy business continues to outpace vehicle sales, with analysts noting strong backlog demand for Megapack systems amid rising grid-scale needs for renewables and AI data centers.

Looking ahead, analysts project full-year 2026 vehicle deliveries of around 1.69 million units, a modest 3-5% rise from roughly 1.64 million in 2025.

Growth is expected to accelerate in the second half as production ramps and new incentives emerge in select markets. However, risks remain: persistent high interest rates, price competition from legacy automakers and Chinese EV makers, and potential margin pressure could cap upside.

Tesla has not issued official full-year guidance, but executives have signaled confidence in sequential quarterly improvements driven by cost reductions and refreshed lineups.

By the end of 2026, Tesla plans several major product launches to reignite momentum. The refreshed Model Y, including a new 7-seater variant already rolling out in select markets, is expected to boost family-oriented sales with updated styling, efficiency gains, and interior enhancements.

Autonomous ambitions remain central to Tesla’s mission, and that’s where the vast majority of the attention has gone. Volume production of the Cybercab (Robotaxi) is targeted to begin ramping in 2026, potentially unlocking new revenue streams through unsupervised Full Self-Driving (FSD) deployment.

A next-generation affordable EV platform, possibly under $30,000, is also in advanced planning stages for 2026 or 2027 introduction. On the energy front, the Megapack 3 and larger Megablock systems will drive further deployment scale.

While Q1 highlights transitional challenges in autos, Tesla’s diversified roadmap, spanning refreshed consumer vehicles, commercial trucks, Robotaxis, and explosive energy growth, positions the company for a stronger second half and beyond. Investors will watch Q2 closely for signs of sustained recovery, especially with new vehicles potentially on the horizon.

NASA sends humans to the Moon for the first time since 1972 – Here’s what’s next

NASA’s Artemis II launched four astronauts toward the Moon on the first crewed lunar mission since 1972.

NASA’s Space Launch System rocket, carrying the Orion spacecraft, launches on the Artemis II mission on Wednesday, April 1, 2026, as seen from Operations and Support Building II at NASA’s Kennedy Space Center in Florida. Aboard are NASA astronauts Reid Wiseman, commander; Victor Glover, pilot; and Christina Koch, mission specialist; along with CSA (Canadian Space Agency) astronaut Jeremy Hansen, mission specialist. The mission will take the crew on a 10-day journey around the Moon and back. The SLS rocket and Orion spacecraft lifted off at 6:35 p.m. EDT from Launch Complex 39B. (NASA/Bill Ingalls)

NASA launched four astronauts toward the Moon on April 1, 2026, marking the first crewed lunar mission since Apollo 17 in December 1972. The Artemis II mission lifted off from Kennedy Space Center aboard the Space Launch System rocket at 6:35 p.m. EDT, sending commander Reid Wiseman, pilot Victor Glover, mission specialist Christina Koch, and Canadian astronaut Jeremy Hansen on a 10-day journey around the far side of the Moon and back.

The mission does not include a lunar landing. It is a test flight designed to validate the Orion spacecraft’s life support systems, navigation, and communications in deep space with a crew aboard for the first time. If the crew reaches the planned distance of 252,000 miles from Earth, they will set a new record for the farthest any human has ever traveled, surpassing even the Apollo 13 distance record.

As Teslarati reported, SpaceX holds a central role in what comes next. The Starship Human Landing System is under contract to carry astronauts to the lunar surface for Artemis IV, now targeting 2028, after NASA restructured its mission sequence due to delays in Starship’s orbital refueling demonstration. Before any Moon landing happens, SpaceX must prove it can transfer propellant between two Starships in orbit, something no rocket program has done at this scale.

The last time humans left Earth’s orbit was 53 years ago. Gene Cernan and Harrison Schmitt of Apollo 17 were the final people to walk on the Moon, a record that stands to this day. Elon Musk has long argued that returning is not optional. “It’s been now almost half a century since humans were last on the Moon,” Musk said. “That’s too long, we need to get back there and have a permanent base on the Moon.”

The Artemis program involves 60 countries signed onto the Artemis Accords, and this mission sets several firsts beyond distance. Glover becomes the first person of color to travel beyond low Earth orbit, Koch the first woman, and Hansen the first non-American astronaut to reach the Moon’s vicinity. According to NASA’s live mission updates, the spacecraft’s solar arrays deployed successfully after liftoff and the crew completed a proximity operations demonstration within the first hours of flight.

Artemis II is step one. The Moon landing and the permanent lunar base come later. But after more than five decades, humans are heading back.