News

Elon Musk’s Twitter is working on removing child sexual abuse material at scale with “no mercy” for abusers

Elon Musk’s Twitter is working on removing child sexual abuse material (CSAM) at scale with “no mercy for those who are involved in these illegal activities.” Andrea Stroppa shared a thread on Twitter with updates on how the platform has moved from being lenient toward the child abuse problem to tackling it head-on.

Stroppa led the research team at Ghost Data that found over 500 accounts openly sharing the illegal material over a 20-day period in September. You can view the full report here. In his thread, Stroppa noted that he had worked as an independent researcher alongside Twitter’s Trust and Safety team, led by Ella Irwin, during the past few weeks. “Twitter achieved some relevant results I want to share with you,” Stroppa tweeted.

Stroppa noted that Twitter updated its mechanism to detect content related to CSAM and that it is faster, more efficient, and more aggressive. “No mercy for those who are involved in these illegal activities.”

THANK YOU! I’m so grateful.

— 𝔈𝔩𝔦𝔷𝔞 (@elizableu) December 3, 2022

Over the past few days, Twitter’s daily suspension rate has almost doubled, which Stroppa said means the platform is carrying out a thorough, fine-grained analysis of content. “It doesn’t matter when illicit content has been published. Twitter will find it and act accordingly.”

Stroppa pointed out that within the past 24 hours, Twitter began increasing its efforts and took down 44,000 suspicious accounts, and over 1,300 of those profiles tried to bypass detection using codewords and text in images to communicate.

He added that Twitter is aware of strategies, keywords, external URLs, and communication methods used by these accounts. “To increase its ability to protect children’s safety, Twitter involved independent and expert third parties.”

Stroppa added that Twitter is focusing its efforts on networks of Spanish-speaking and Portuguese-speaking users that share CSAM. “Twitter continues to have teams in place dedicated to investigating and taking action on these types of violations daily. Teams are more determined than ever and composed of passionate experts. Furthermore, Twitter simplified the process of users reporting illicit content.”

In a statement to Teslarati, Stroppa said, “If these good things are happening, it’s because Elon really cares about children’s safety. With Elon, we share the idea of the light of consciousness. This light goes through millions of people and improves a bit of the world.”

Eliza Bleu, who has been pushing Twitter to protect children since before Elon Musk purchased the platform, previously emphasized that the content needed to be removed “at scale.” In August, The Verge found that Twitter was unable to detect CSAM at scale.

“Twitter cannot accurately detect child sexual exploitation and non-consensual nudity at scale,” concluded the Red Team, which had been formed “to pressure-test the decision to allow adult creators to monetize on the platform by specifically focusing on what it would look like for Twitter to do this safely and responsibly.”

In her own thread, Eliza Bleu said that she never thought she would be able to tweet this, but “Twitter is currently working on detecting, removing, and reporting child sexual abuse material at scale.”

She added that the issue will take time to clean up, but the rapid changes are “just beautiful to see.”

On Saturday, Bleu told Teslarati, “While the corporate media was fear-mongering and spreading baseless conspiracy theories about Musk’s inability to tackle child sexual exploitation on Twitter with an alleged ‘skeleton crew,’ the platform was actually busy making amazing progress towards protecting sexually exploited children.”

“I’m extremely grateful to see the progress and the changes made under Elon Musk. He has accomplished in a month what the platform could not seem to do over the past decade about the issue of child sexual abuse material. The only time the platform previously made this much progress is when they implemented PhotoDNA.”

The technology Bleu is referring to was created when Microsoft partnered with Dartmouth College in 2009. PhotoDNA aids organizations in finding and removing known images of child exploitation. Bleu also called out Twitter’s advertisers that left the platform, citing Elon Musk as the reason, yet were silent on Twitter’s slowness and, at times, refusal to remove CSAM from its platform.

Your feedback is welcome. If you have any comments or concerns or see a typo, you can email me at johnna@teslarati.com. You can also reach me on Twitter at @JohnnaCrider1.

Teslarati is now on TikTok. Follow us for interactive news & more. You can also follow Teslarati on LinkedIn, Twitter, Instagram, and Facebook.

News

Tesla cleared in Canada EV rebate investigation

Tesla has been cleared in an investigation into the company’s staggering number of EV rebate claims in Canada in January.

Canadian officials have cleared Tesla following an investigation into a large number of claims submitted to the country’s electric vehicle (EV) rebate program earlier this year.

Transport Canada has ruled that there was no evidence of fraud after Tesla submitted 8,653 EV rebate claims for the country’s Incentives for Zero-Emission Vehicles (iZEV) program, as detailed in a report on Friday from The Globe and Mail. Despite the huge number of claims, Canadian authorities have found that the figure represented vehicles that had been delivered prior to the submission deadline for the program.

According to Transport Minister Chrystia Freeland, the claims “were determined to legitimately represent cars sold before January 12,” which was the final day for OEMs to submit these claims before the government suspended the program.

When the January claims were first reported, they were estimated to be worth around $43 million. In March, Freeland and Transport Canada opened the investigation into Tesla, noting that the rebate payments would be frozen until the claims were found to be valid.


Huw Williams, Canadian Automobile Dealers Association Public Affairs Director, accepted the results of the investigation, while also questioning how Tesla knew to submit the claims that weekend, just before the program ran out.

“I think there’s a larger question as to how Tesla knew to run those through on that weekend,” Williams said. “It doesn’t appear to me that we have an investigation into any communication between Transport Canada and Tesla, between officials who may have shared information inappropriately.”

Tesla sales were down in Canada for the first half of this year, amid tensions between the country and the Trump administration over tariffs. Although Elon Musk has since stepped back from his role with the administration, a number of companies and officials in Canada called for a boycott of Tesla’s vehicles earlier this year, due in part to his association with Trump.

News

Tesla Semis to get 18 new Megachargers at this PepsiCo plant

PepsiCo is set to add more Tesla Semi Megachargers, this time at a facility in North Carolina.

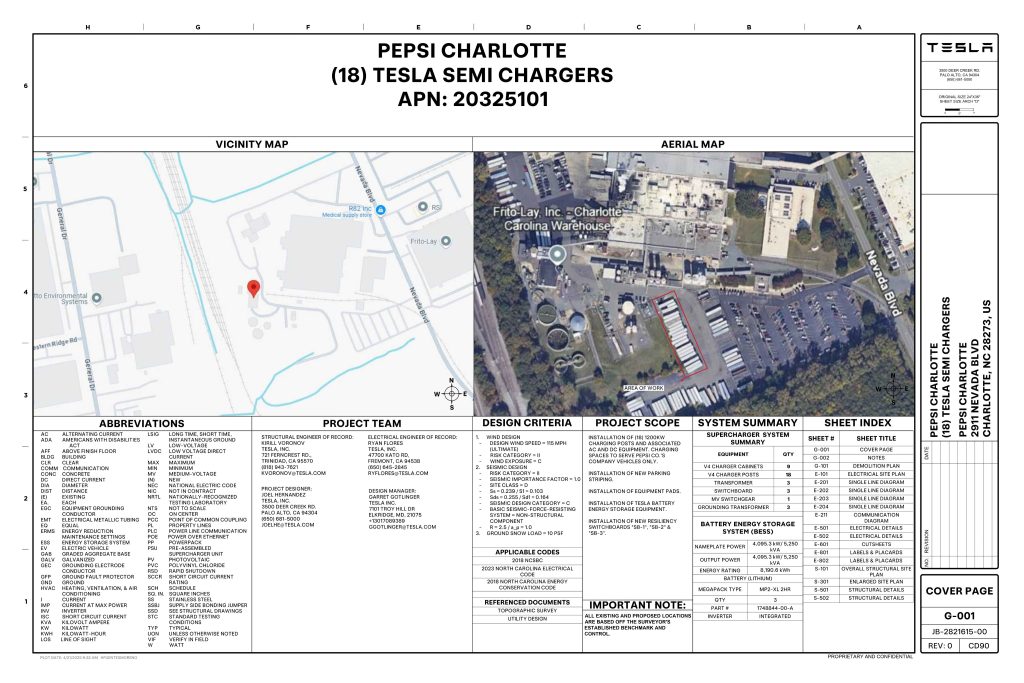

Tesla partner PepsiCo is set to build new Semi charging stations at one of its manufacturing sites, as revealed in new permitting plans shared this week.

On Friday, Tesla charging station scout MarcoRP shared plans on X for 18 Semi Megacharging stalls at PepsiCo’s facility in Charlotte, North Carolina, the latest update to the company’s plans for its increasingly electrified fleet. The stalls are set to be built side by side, along with three Tesla Megapack grid-scale battery systems.

The plans also note the chargers’ high output: they can charge the Class 8 Semi at up to 1 MW. Tesla says that rate can bring the Semi back to roughly 70 percent in around 30 minutes.

You can see the site plans for the PepsiCo North Carolina Megacharger below.

Credit: PepsiCo (via MarcoRPi1 on X)



PepsiCo’s Tesla Semi fleet, other Megachargers, and initial tests and deliveries

PepsiCo was the first external customer to take delivery of Tesla’s Semis back in 2023, starting with just an initial order of 15. Since then, the company has continued to expand the fleet, recently taking delivery of an additional 50 units in California. The PepsiCo fleet was up to around 86 units as of last year, according to statements from Semi Senior Manager Dan Priestley.

Additionally, the company has similar Megachargers at its facilities in Modesto, Sacramento, and Fresno, California, and Tesla also submitted plans for approval to build 12 new Megacharging stalls in Los Angeles County.

Over the past couple of years, Tesla has also been delivering the electric Class 8 units to a number of other companies for pilot programs, and Priestley shared some results from PepsiCo’s initial Semi tests last year. Notably, the executive spoke with a handful of PepsiCo workers who said they really liked the Semi and wouldn’t plan on going back to diesel trucks.

Tesla is also nearing completion of a higher-volume Semi plant at its Gigafactory in Nevada, which is expected to eventually have an annual production capacity of 50,000 Semi units.


News

Tesla sales soar in Norway with new Model Y leading the charge

Tesla recorded a 54% year-over-year jump in new vehicle registrations in June.

Tesla is seeing strong momentum in Norway, with sales of the new Model Y helping the company maintain dominance in one of the world’s most electric vehicle-friendly markets.

Model Y upgrades and consumer preferences

According to the Norwegian Road Federation (OFV), Tesla recorded a 54% year-over-year jump in new vehicle registrations in June. The Model Y led the charge, posting a 115% increase compared to the same period last year. Tesla Norway’s growth was even more notable in May, with sales surging a whopping 213%, as noted in a CNBC report.

Christina Bu, secretary general of the Norwegian EV Association (NEVA), stated that Tesla’s strong market performance was partly due to the updated Model Y, which is really just a good car, period.

“I think it just has to do with the fact that they deliver a car which has quite a lot of value for money and is what Norwegians need. What Norwegians need, a large luggage space, all wheel drive, and a tow hitch, high ground clearance as well. In addition, quite good digital solutions which people have gotten used to, and also a charging network,” she said.

Tesla in Europe

Tesla’s success in Norway is supported by long-standing government incentives for EV adoption, including exemptions from VAT, road toll discounts, and access to bus lanes. Public and home charging infrastructure is also widely available, making the EV ownership experience in the country very convenient.

Tesla’s performance in Europe remains a mixed bag, with markets like Germany and France seeing declines in recent months. In Norway, Spain, and Portugal, however, Tesla’s new car registrations are rising: sales rose 61% in Spain and 7% in Portugal last month. This suggests that regional demand may be stabilizing or rebounding in pockets of Europe.
