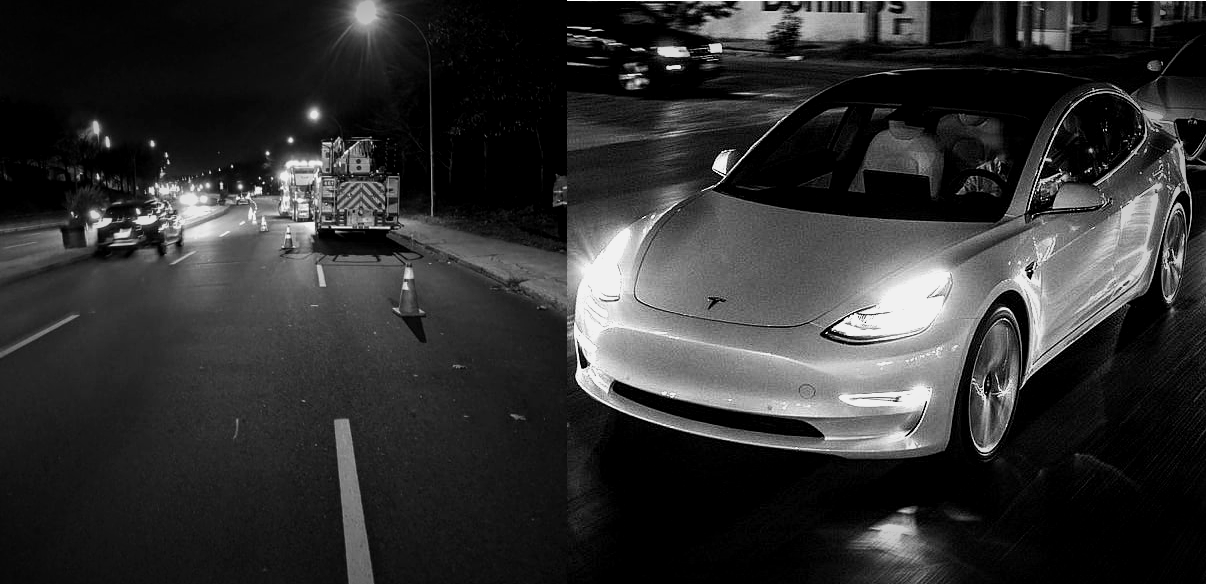

Tesla is currently being investigated by the National Highway Traffic Safety Administration (NHTSA) after several of its electric cars crashed into stationary emergency vehicles while Autopilot was engaged. The premise of the investigation itself is enough to whet the appetite of every Tesla skeptic since the idea of Autopilot crashing consistently into parked emergency vehicles makes for a compelling narrative. Tesla later released an update, enabling Autopilot to detect and slow down for stationary emergency vehicles. The NHTSA responded by calling out the company for not issuing a recall when it released its proactive over-the-air software update.

What was lost amidst the spread of the Tesla NHTSA investigation story was the fact that the relatively minor Autopilot update, which simply allows vehicles to slow down when they detect things such as a police car or a firetruck parked on the side of the road, is already saving numerous lives. This is because there is a deadly problem on America’s roads, and it is something that very few seem to be acknowledging. Emergency personnel are dying on the job with frightening frequency. They are dying because cars crash into them while they’re parked on the side of the road. And disturbingly enough, very little is being done about it.

The Flaws of HumanPilot

*Author’s Note and Trigger Warning: The succeeding sections of this article contain links to footage and other online references that may cause distress to readers. Discretion is advised.

One thing that truly stuck out while writing this piece was the sheer frequency of the accidents that happen to emergency personnel while they are responding to someone in need. This was despite the fact that all 50 states in the USA have a “Slow Down Move Over (SDMO)” Law in place. The premise of the SDMO law is simple: Upon noticing an emergency vehicle’s sirens or flashing lights on the side of the road, drivers are required to move away from the emergency vehicle by going into the next lane. If that is not possible, drivers must slow down to reduce the chances of an accident happening. The SDMO law is based on a very simple premise, but it is one that gets violated on a consistent basis.

This is partly due to states interpreting the law differently, with some adopting a “Slow Down and Move Over” model while others follow a “Slow Down or Move Over” system. But ultimately, there have been zero fatalities involving a vehicle that actually slowed down and moved over when it spotted a stationary emergency vehicle. This suggests that the law works, provided that it is followed.

But when the Move Over Law gets violated, the human toll becomes disturbingly real. A report from the Government Accountability Office (GAO) indicates that about 8,000 injuries involving a stationary emergency vehicle have been reported in one year. This year alone, 57 emergency responders have been killed while addressing a roadside issue. Posts from the National Struck-By Heroes Facebook group, which highlight the aftermath of struck-by injuries (SBIs), are heartbreaking, and videos and posts shared by companies whose staff are killed while on the job are harrowing. This is something that was highlighted by James D. Garcia, the creator of the Move Over Law and an SBI survivor, who shared some of his insights with Teslarati.

“This year is the 25th anniversary of the first Slow Down Move Over Law, passed in South Carolina in 1996. Every state in the US has had an SDMO Law since 2012, and yet this year, we have already reached a record 56 responder deaths (This number has since risen to 57 as of this writing). Since 2018, there have been over 45,000 collisions with stationary roadside objects. Every seven seconds, an object is struck. Every other day, a responder is struck and injured. Every five days, a responder is killed.”

“If you ask the general public the most dangerous risk to a police officer, most would say the chance of being shot in pursuit. If you ask the biggest danger to a firefighter, most envision being trapped in a burning or collapsing building. But statistics prove the real story. Across all agencies, responders are twice more likely to die in an SBI than any other category of work-related injury. It is by far the most dangerous aspect of our job,” Garcia noted.

A DIY Solution

Perhaps the most heart-wrenching thing about the whole situation is the fact that data on SBIs are not formally collected, considered, or analyzed by any official government agency, even though SBIs are the leading cause of death and permanent injury for public safety and roadway responders. The problem is so prevalent that James W. Law, a 32-year veteran of the emergency roadside response industry and a specialist researcher in the Move Over Law, opted to develop a light sequence he fondly calls “E-Modes” to help drivers alert other vehicles that a parked emergency vehicle is nearby. Simply put, the problem of drivers not following SDMO laws is so real and deadly that emergency responders are DIY-ing a solution themselves, because they cannot count on anyone else.

Responding to roadside problems on America’s roads for the past 32 years is no joke, and over this time, Law has encountered the worst drivers possible. Law shared with Teslarati that over the course of his career, he has been personally involved in an accident four times, the first of which happened when he was just 18 years old. In what could very well prove the point that humans are bad drivers, one of Law’s experiences actually involved a driver intentionally crashing into him because he felt upset that traffic was disrupted due to an incident. Law’s legs broke the irate driver’s headlights because of the crash, and the driver wanted to accuse the roadside responder of damaging his car. The police were fortunately reasonable, and Law was not charged. The irate driver, on the other hand, received a $500 ticket for using his vehicle as a weapon.

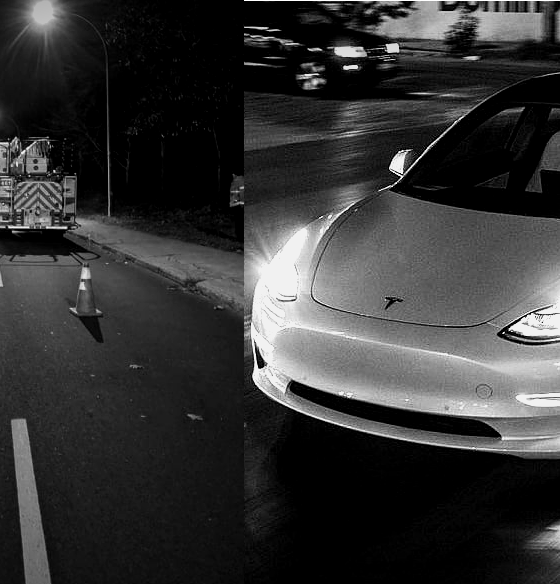

Speaking with Teslarati, Law admitted that he is a notable Tesla supporter, and he tried his best to emulate CEO Elon Musk’s first principles thinking when he developed the E-Modes custom light sequence. He aims to donate the light sequence protocols he developed to Tesla, partly because the company seems to be the only carmaker actively doing something to address the deadly issue plaguing emergency roadside personnel today. This became quite evident when the company updated its vehicles to detect and respond to traffic cones on the road. This small update, Law noted, may seem minor, even marginal, to the layman, but for roadside personnel, it was a godsend.

“Tesla’s traffic cone recognition is a crucial safety feature that I take full advantage of on any and all incidents. Properly setting up cones to define the ‘Kill Zone’ offers a quick way to communicate directly to any Tesla vehicle. Unlike humans, Tesla Vision is always aware. It’s one of the ways I communicate with oncoming Teslas. If Elon adopts E-Modes, a Tesla could communicate back to me that it is situation-aware. As a safety advocate, I strongly insist that every emergency responders use cones on every scene every time because it’s the right thing to do to protect everyone,” Law said.

The Lone Problem Solver

As substantial as the mainstream media coverage of the NHTSA’s probe on Autopilot’s incidents with emergency vehicles has been, the fact is that Tesla only accounted for nine crash injuries with first responder vehicles in the past 12 months. That’s a tiny fraction of the ~8,000 injuries the GAO indicated in its report. The company has also steadily rolled out features to make its vehicles safer. With every update of Autopilot and FSD, features like traffic cone recognition get more refined, and the more refined they get, the more emergency responders they protect. Tesla’s recent Autopilot update, which allows vehicles to slow down when they detect a parked emergency vehicle, is further proof of this.
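For a rough sense of scale, the comparison between the two injury figures can be worked out in a few lines of Python. This is only a back-of-the-envelope sketch: the variable names are illustrative, the GAO figure is approximate, and the two numbers may not cover exactly the same 12-month window.

```python
# Figures quoted in the article; the variable names are illustrative only.
tesla_injuries = 9      # Tesla crash injuries with first responder vehicles, past 12 months
gao_injuries = 8_000    # approximate one-year injury count cited from the GAO report

# Tesla's share of the GAO total, expressed as a percentage.
share_percent = tesla_injuries / gao_injuries * 100

print(f"Tesla share of reported injuries: about {share_percent:.2f}%")
```

Under those assumptions, the nine Tesla-related injuries work out to roughly 0.11 percent of the GAO’s total.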

Law noted that he had been involved in thousands of close calls in his 32-year career, but the one that truly stuck out to him involved a Tesla driver from late 2019, just after the company rolled out Autopilot’s capability to recognize and avoid traffic cones. While he was defining a “Kill Zone” on the road after responding to an incident, he saw an approaching Tesla whose driver appeared to be looking down and not paying attention to the road. Law was unsure if the Tesla was on Autopilot, but the vehicle moved over to the other lane seemingly as soon as it detected the traffic cones that he set up. The veteran emergency responder noted that the Tesla driver seemed surprised as the electric vehicle avoided the cones on its own.

Such an incident, ultimately, is what makes Tesla stand apart, at least for now. It may be an inconvenient truth, especially to those who salivate at the thought of FSD or Autopilot going berserk and hunting down emergency responders, but the fact remains that Tesla is doing far more to protect both its drivers and other people on the road than any other carmaker out there. Emergency responder deaths are preventable, and as the creator of the Move Over Law noted, the lion’s share of these incidents is due to human error. It is this human error that technologies such as Autopilot and FSD are trying to solve, NHTSA probe notwithstanding.

“Ninety percent of all struck-by deaths are a direct result of poor driver behavior. That means that nine out of ten responder deaths could have been prevented if the driver had maintained control of their vehicle at a reasonable speed and reacted in a considerate and attentive manner. Twenty-three percent of lethal struck-by violators were impaired. Five percent were distracted, and another three percent were drowsy. It is important we continue to support efforts to reduce drunk driving and speak out about the rapid rise of distracted driving resulting in responder deaths. Multiple agencies have ongoing PR campaigns to address these aspects, but none are taking on the most dominant category — angry, aggressive, entitled, and selfish drivers.

“The remaining 69% of drivers that crashed into and killed a responder were completely sober. They saw the lights, they recognized the situation, yet they still felt the need to speed up and pass just a few more cars before they moved over. They were in too big of a hurry to slow down to a controllable speed and killed a responder. These drivers consciously made an intentional personal decision to carelessly disregard the life of a responder. Self-absorbed drivers have become the norm. Stronger laws, higher fines, bigger signs, and brighter lights have no effect once they get behind the wheel. We need to face this reality and develop a strategy that confronts this disregard. We must reinforce the value of a responder’s life over whatever current personal priorities are influencing these drivers’ behavior,” Garcia noted.
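The percentages in Garcia’s breakdown can be checked with simple arithmetic. The short Python sketch below only uses the figures from the quote; the variable names are illustrative, and it assumes the three categories do not overlap.

```python
# Percentages of lethal struck-by violators, as quoted by Garcia.
impaired = 23
distracted = 5
drowsy = 3

# Share accounted for by the three named categories (assumed non-overlapping).
accounted_for = impaired + distracted + drowsy

# What is left over is the share Garcia attributes to sober, aware drivers.
remaining = 100 - accounted_for

print(f"Impaired, distracted, or drowsy: {accounted_for}%")
print(f"Remaining drivers: {remaining}%")
```

The three named categories add up to 31 percent, which leaves the 69 percent that Garcia describes.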

A (Potentially) Safer Future

One can only hope that agencies such as the NHTSA will see the bigger picture with regard to vehicles and the advantages of technologies such as Autopilot and Full Self-Driving. It takes an immense amount of short-sightedness, after all, to remain fixated on whether a recall was filed for a proactive Autopilot update, or on 11 incidents that involved a Tesla crashing into a stationary emergency vehicle, all while one emergency responder is killed every five days. Focusing on Tesla and ignoring the larger problem at hand seems counter-productive at best.

In an ideal scenario, technologies such as Autopilot’s capability to identify, slow down, and potentially even move over to another lane when an emergency vehicle is detected would become mandatory for all cars on the road. As noted by esteemed auto teardown expert Sandy Munro, advanced driver-assist systems such as Autopilot and FSD have the potential to save lives on the same level as seatbelts, perhaps even more. And in this light, John Gardella, a shareholder at CMBG3 Law in Boston, MA, told Teslarati that if the NHTSA really wishes to help roll out new safety features, it would actually be a lot easier than one might imagine.

“Implementing the safety feature in Tesla’s vehicles will be easier than one might imagine. The National Highway Traffic Safety Administration (NHTSA) showed earlier in 2021 through its final rule for safety features for automated driving systems that it does not wish to set onerous standards prior to many features for automated driving system (ADS) vehicles coming to market. In fact, the desire of the NHTSA was to reduce barriers to having ADS safety features come to market more rapidly, and thereby accelerate autonomous vehicles coming to mass markets. The NHTSA received some criticism for its approach. However, the NHTSA does still have the authority to interpret the Federal Motor Vehicle Safety Standards (FMVSS), investigate perceived defects or unreasonably safe vehicle features, and carry out its enforcement authority, including recall power,” Gardella said.


News

Tesla Full Self-Driving is taking over Europe: fourth country gets FSD approval

Tesla has secured regulatory approval for its Full Self-Driving (Supervised) system in Denmark, marking a significant step in the technology’s expansion across Europe.

Announced on June 9, the approval positions Denmark as the fourth European country to greenlight FSD Supervised, following the Netherlands, Lithuania, and Estonia.

Rollout to Danish vehicle owners is expected to begin soon, the company said.

The Danish Road Traffic Authority granted provisional approval after reviewing the original type approval issued by the Dutch vehicle authority (RDW) on April 10, 2026.

FSD Supervised now approved in Denmark 🇩🇰

Rollout will begin soon pic.twitter.com/Xpxwcme10k

— Tesla Europe, Middle East & Africa (@teslaeurope) June 9, 2026

This national recognition approach allows individual countries to bypass slower EU-wide harmonization processes, accelerating deployment. Lithuania activated the system on May 20, with Estonia following on May 29, demonstrating a rapid domino effect across the region.

FSD Supervised enables advanced driver assistance capabilities, including automatic steering, acceleration, braking, lane changes, and navigation through complex urban and rural environments. The system is designed for supervised use, as its name states, meaning drivers must remain attentive and ready to intervene at all times.

It adapts to diverse conditions, such as rain, night driving, and varied road types common in Denmark, but it is important to note that the tech is not fully autonomous.

Following a launch in Europe just a few months ago, with its first approval coming in the Netherlands, Tesla is just now highlighting the successful start.

Early data from the Netherlands highlights strong safety performance. Between April 10 and June 5, vehicles using FSD Supervised recorded 3.5 times fewer collisions than manual driving overall, with zero crashes reported on highways across more than 16.6 million kilometers driven.

These results underscore the potential of the technology to enhance road safety when properly supervised.

Tesla’s European push builds on its global footprint, now reaching 12 countries with FSD Supervised availability. The software receives continuous over-the-air updates, improving performance based on real-world data from millions of miles.

In Denmark, owners with compatible hardware—particularly newer vehicles equipped with Hardware 4 (HW4)—are anticipated to gain access first, though exact timelines and eligibility details will be confirmed during rollout.

This approval reflects growing regulatory confidence in supervised autonomy across Europe. As more nations recognize the Dutch certification, Tesla continues to demonstrate how its AI-driven approach can navigate real-world driving scenarios effectively. Denmark’s addition strengthens Tesla’s position in the region, paving the way for broader adoption on a continent that has been surprisingly slow to adopt the technology.

With FSD Supervised now approved in four European markets in just two months, the technology is steadily advancing toward wider availability. Tesla aims to refine the system further through ongoing data collection and software iterations, supporting its vision for safer and more efficient transportation.

News

Tesla revises FSD transfer policy on new Cybertruck trim, causing cancellations

Tesla has apparently revised its previously listed policy on Full Self-Driving transfers for the newest All-Wheel-Drive Cybertruck, which the company sold at a bargain price of just $59,000 earlier this year.

After initially stating that customers who bought the pickup would be able to transfer FSD purchases, Tesla recently changed the language in those terms and conditions to reflect that this would no longer be the case.


The change in terms has caused a handful of customers to cancel their orders due to the loss of FSD transfer:

Just cancelled my 59k CT order today. My screenshot from that day of order (feb 20th) clearly shows that it would be eligible.

Terms were retroactively modified. Our 2020 Y and 2023 S are just fine for now. pic.twitter.com/D9PFnId1B4

— Ryan Scanlan 👥 (@Xenius) June 8, 2026

Tesla said orders for the new Cybertruck AWD must be placed by March 31, 2026, to qualify for the FSD transfer. The language in the document from earlier this year explicitly states that they “may qualify” for the transfer program, but the date of March 31 is explicitly mentioned.

Additionally, Tesla Delivery Advisors reached out to some customers who had ordered the AWD Cybertruck, telling them there was “an update to the eligibility of the Full Self-Driving (Supervised) transfer.” Tesla stated they could:

- proceed without the transfer,

- upgrade to a Premium or Cyberbeast trim and request an FSD transfer, or

- cancel the order and be refunded the $250 order fee.

Tesla turning around and changing these terms will undoubtedly result in a handful of cancellations on the part of those who have placed an order for this truck. They could pay $99 per month for an FSD subscription, which is now the only option available, but having purchased the suite outright on another vehicle and being told the transfer policy would be upheld, only to have it cancelled, is a tough pill to swallow.

These moves were also made by Tesla just before deliveries were set to begin on the Cybertruck AWD configuration. Reservation holders have started receiving VINs for their trucks, and Tesla is preparing to hand over the first units.

It’s a disappointing move from Tesla that will undoubtedly frustrate some of the fans who ordered the truck.

Elon Musk

Tesla tipped its hand at where Robotaxi is heading next

In the world of autonomous ride-hailing, there are only a handful of names. Each of those few companies makes strategic plays to keep the competition on its toes. Tesla, on the other hand, has already tipped its hand about where it is headed next.

Tesla has signaled its next major push in the autonomous ride-hailing market by filing for an Autonomous Vehicle Network Company permit in Nevada (Docket 26-05015). Through Tesla Robotaxi, LLC, the company seeks approval to operate up to 5,000 robotaxis in Clark County, including high-traffic areas like Las Vegas and Henderson airports, within the first 12 months of launch.

This filing builds on Tesla’s earlier testing approvals from the Nevada DMV in September 2025 and preparations such as maintenance hubs in the Las Vegas area. Nevada represents a strategic expansion into a major tourist destination, where high visitor volumes could drive strong utilization and showcase the reliability of unsupervised autonomy to a broad audience.

We’d have to assume this means Tesla is targeting Las Vegas, and it’s a great move from a business perspective.

Vegas is such a melting pot of people from all around the country and the world. It will expose people from all corners of the globe to Tesla’s autonomy capabilities https://t.co/Qz3fQmhULF pic.twitter.com/Du5pj2RyWC

— TESLARATI (@Teslarati) June 6, 2026

Approval would mark a significant step toward commercial operations in a new state, following progress in Texas.

Tesla’s shareholder decks and earnings calls have clearly outlined these ambitions. In the Q4 2025 shareholder deck, the company listed planned Robotaxi coverage for the first half of 2026, explicitly naming Las Vegas alongside Phoenix, Miami, Orlando, and Tampa, with Dallas and Houston already advancing. Austin was noted as “ramping unsupervised,” while the Bay Area remained in safety-driver mode.

By Q1 2026, the deck updated statuses to reflect launches in Dallas and Houston, with “preparations underway” for the remaining cities, including Las Vegas. Paid Robotaxi miles nearly doubled sequentially in Q1, underscoring momentum even as broader timelines adjusted slightly for regulatory and operational readiness.

On earnings calls, CEO Elon Musk and executives have emphasized a phased rollout prioritizing safety. Unsupervised operations in Texas have shown strong results with no reported accidents or injuries in the program. Tesla continues groundwork in additional major U.S. metros through testing and permitting, positioning it to scale quickly once approvals clear.

This Nevada move aligns with Tesla’s vision of transforming from an EV maker into an AI and robotics leader. The forthcoming Cybercab, which started production at Giga Texas in April, is expected to eventually dominate the fleet, replacing many Model Y vehicles and driving down costs to enable affordable rides.

For investors and the industry, this signals Tesla’s intent to dominate key Sun Belt and tourist markets where weather, regulations, and demand favor rapid scaling. Success in Las Vegas could validate the model for denser urban and high-tourism environments, accelerating the shift toward a future where robotaxis generate meaningful revenue.

Las Vegas will also expand the general public’s knowledge of Tesla’s capabilities, helping people experience driverless ride-hailing from several companies during their time on The Strip.