Firmware

Federal Regulators Racing To Catch Up With Tesla Autopilot

Tesla’s Autopilot has caught federal regulators by surprise. But while they rush to catch up, the company is readying for more features and upgrades soon.

![Tesla Autopilot Version 7.0 Dashboard Display [Source: Tesla Motors]](https://www.teslarati.com/wp-content/uploads/2015/10/Tesla-Version-7-Autopilot-Dash.jpg)

During the Q3 earnings call on Tuesday, Elon Musk acknowledged that some drivers are misusing the Autosteer functions introduced in the latest Version 7.0. Despite Tesla’s warning that drivers must keep at least one hand on the steering wheel at all times, videos of Autopilot-enabled drivers tell a different story, showing drivers hopping into the back seat, shaving, reading the newspaper, or eating breakfast without ever touching the wheel.

Musk says his company is looking into putting “some additional constraints” on the Autopilot system in order to “minimize the possibility of people doing crazy things with it.” We know that Autopilot 1.01 will have improved lane holding, but Musk didn’t say what additional driver constraints would be introduced. It’s easy to imagine the software might be amended to require drivers to actually maintain contact with the wheel.

Those Autopilot videos are troubling to more than just Elon Musk and Tesla management. They have also captured the attention of federal regulators who are now on notice that Tesla automobiles are capable of doing things that are not subject to any federal oversight. Speaking to The Verge on Wednesday, Jeffrey Miller, associate professor of engineering practice at the University of Southern California and a member of the Institute of Electrical and Electronics Engineers, said Tesla’s beta software raises a host of questions for regulators.

“Beta software typically means that a company is not fully releasing this to the public,” Miller said. “They are releasing it to people who are willing to test it with the understanding there will be bugs in it.” Which is why Musk and company are so insistent that drivers remain actively engaged in controlling the car at all times. In its most recent monthly report on self-driving cars, Google told readers that it is pursuing fully autonomous systems precisely because it thinks human drivers will react too slowly to situations that require them to retake control of their cars.

For its part, the National Highway Traffic Safety Administration (NHTSA) says its mission is to “save lives, prevent injuries, [and] reduce vehicle-related crashes.” The agency “applies performance requirements to the regulated system as a whole, and typically does not develop requirements for specific elements within the regulated system such as its software,” a spokesman told The Verge. “As with any new vehicle feature, manufacturers are free to offer it. If defective however, [the] agency can pursue a recall.”

The NHTSA is not blind to the availability of new computer-aided systems that help reduce the risk of injury or death. A year ago, it updated its 5-Star vehicle safety ratings to include automatic emergency braking as a recommended safety technology. Since 2011, it has also added electronic stability control, forward collision warning, lane departure warning, and rearview camera systems to its list of “recommended” technologies. An NHTSA spokesperson told The Verge, “We are currently assessing the need for additional standards as it relates to software and vehicle electronics in general.”

Miller argues that regulators should lead the way in developing safety standards for beta updates and fully self-driving cars as they start to come online. But he says they probably won’t. “Very rarely do we get proactive laws. They’re always reactive. Right now we have an opportunity to get in front of the technology. We’ve had these small, incremental releases to the public, and we’ll continue to see small, incremental releases until we see a completely driverless vehicle around 2019, 2020.” He adds, “But this is the time that we need these regulatory agencies to say, ‘We’ve four years before we’re projecting one of these vehicles are released to the consumer market. Let’s come up with the laws first before that happens.’”

In the meantime, Tesla is not waiting around for regulators to act. It currently has 40,000 cars with Autopilot that interact with one another via its ‘fleet learning’ technology. When one car learns something, all of them learn the same thing. With almost a million miles a day being recorded by Autopilot-enabled Teslas, keeping up with the rate of feedback and improvement through machine learning will be an ongoing uphill challenge for regulators.


Firmware

Tesla mobile app shows signs of upcoming FSD subscriptions

It appears that Tesla may be preparing to roll out some subscription-based services soon. Based on the observations of a Wales-based Model 3 owner who performed some reverse-engineering on the Tesla mobile app, it seems that the electric car maker has added a new “Subscribe” option beside the “Buy” option within the “Upgrades” tab, at least behind the scenes.

A screenshot of the new option was posted in the r/TeslaMotors subreddit, and while the Tesla owner in question, u/Callump01, admitted that the screenshot looks like something that could be easily fabricated, he did submit proof of his reverse-engineering to the community’s moderators. The moderators of the r/TeslaMotors subreddit confirmed the legitimacy of the Model 3 owner’s work, further suggesting that subscription options may indeed be coming to Tesla owners soon.


Tesla’s Full Self-Driving suite has been heavily speculated to be offered as a subscription option, similar to the company’s Premium Connectivity feature. And back in April, noted Tesla hacker @greentheonly stated that the company’s vehicles already had the source code for a pay-as-you-go subscription model. The Tesla hacker suggested then that Tesla would likely release such a feature by the end of the year, something that Elon Musk also suggested in the first-quarter earnings call. “I think we will offer Full Self-Driving as a subscription service, but it will be probably towards the end of this year,” Musk stated.

While the signs for an upcoming FSD subscription option seem to be getting more and more prominent as the year approaches its final quarter, the details for such a feature are still quite slim. Pricing for FSD subscriptions, for example, has not been teased by Elon Musk yet, though he has stated on Twitter that purchasing the suite upfront would be the better value in the long term. Mentions of the feature in the vehicles’ source code, and now in the Tesla mobile app, also included no pricing information.

The idea of FSD subscriptions could prove quite popular among electric car owners, especially since it would allow budget-conscious customers to make the most out of the company’s driver-assist and self-driving systems without committing to the features’ full price. The current price of the Full Self-Driving suite is no joke, after all, being listed at $8,000 on top of a vehicle’s cost. By offering subscriptions to features like Navigate on Autopilot with automatic lane changes, owners could gain access to advanced functions only as they are needed.

Elon Musk, for his part, has explained that, ultimately, he still believes that purchasing the Full Self-Driving suite outright provides the most value to customers, as it is an investment that would pay off in the future. “I should say, it will still make sense to buy FSD as an option as in our view, buying FSD is an investment in the future. And we are confident that it is an investment that will pay off to the consumer – to the benefit of the consumer,” Musk said.

Firmware

Tesla rolls out speed limit sign recognition and green traffic light alert in new update

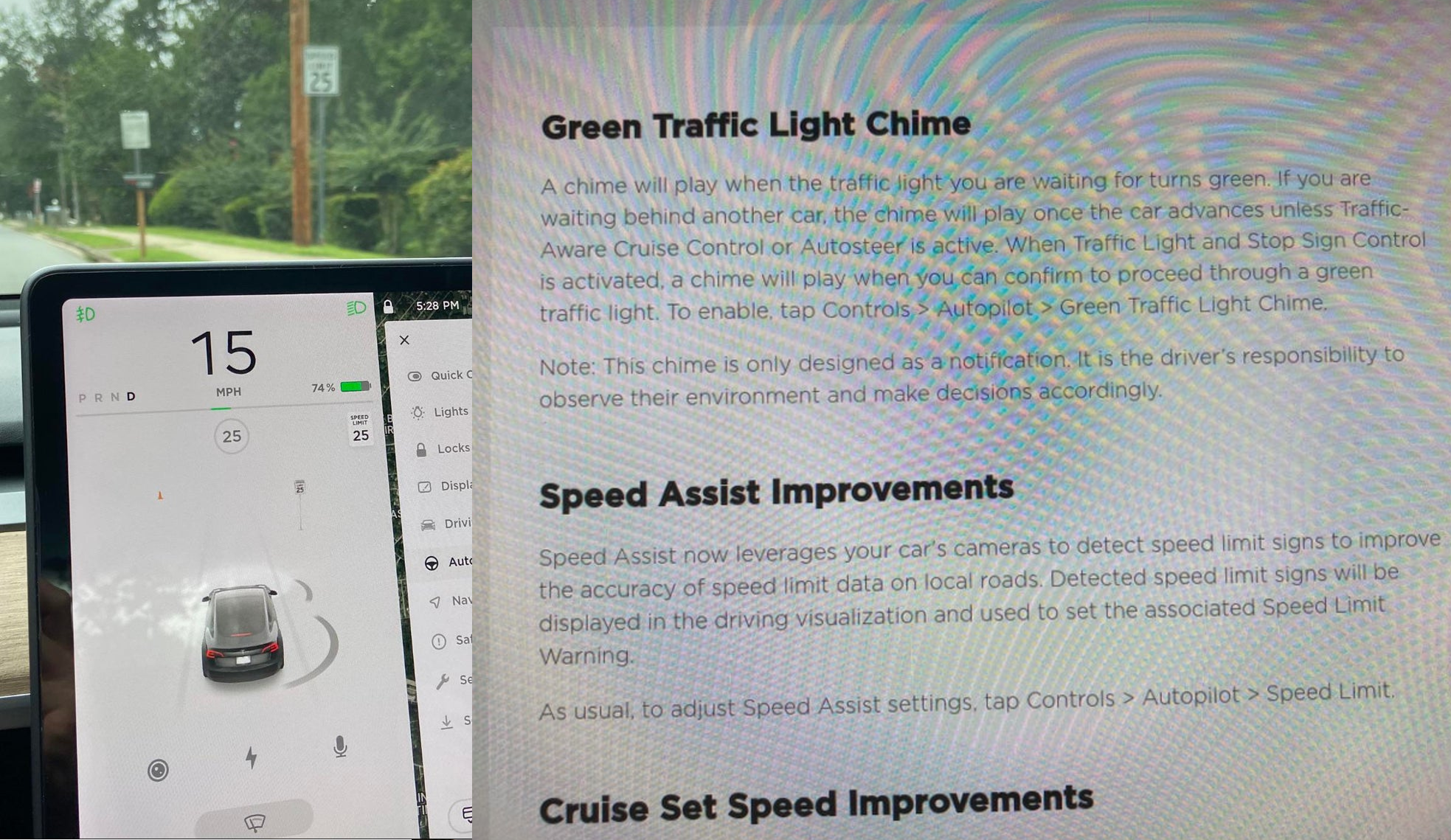

Tesla has started rolling out update 2020.36 this weekend, introducing a couple of notable new features for its vehicles. While only a handful of vehicles have reportedly received the update so far, 2020.36 makes it evident that the electric car maker has made some strides in its efforts to refine its driver-assist systems for inner-city driving.

Tesla is currently hard at work developing key features for its Full Self-Driving suite, which should allow vehicles to navigate through inner-city streets without driver input. Tesla’s FSD suite is still a work in progress, though the company has released the initial iterations of key features such as Traffic Light and Stop Sign Control, which was introduced last April. Similar to the first release of Navigate on Autopilot, however, the capabilities of Traffic Light and Stop Sign Control were pretty basic during their initial rollout.


With the release of update 2020.36, Tesla has rolled out some improvements that should allow its vehicles to handle traffic lights better. What’s more, the update also includes a particularly useful feature that enables better recognition of speed limit signs, which should make Autopilot’s speed adjustments better during use. Following are the Release Notes for these two new features.

Green Traffic Light Chime

“A chime will play when the traffic light you are waiting for turns green. If you are waiting behind another car, the chime will play once the car advances unless Traffic-Aware Cruise Control or Autosteer is active. When Traffic Light and Stop Sign Control is activated, a chime will play when you can confirm to proceed through a green traffic light. To enable, tap Controls > Autopilot > Green Traffic Light Chime.

“Note: This chime is only designed as a notification. It is the driver’s responsibility to observe their environment and make decisions accordingly.”

Speed Assist Improvements

“Speed Assist now leverages your car’s cameras to detect speed limit signs to improve the accuracy of speed limit data on local roads. Detected speed limit signs will be displayed in the driving visualization and used to set the associated Speed Limit Warning.

“As usual, to adjust Speed Assist settings, tap Controls > Autopilot > Speed Limit.”


Amid the rollout of 2020.36’s new features, speculation abounded among Tesla community members that this update might include the first pieces of the company’s highly anticipated Autopilot rewrite. As exciting as the idea is, however, Tesla CEO Elon Musk has stated that this is not the case. Responding to a Tesla owner who asked if the Autopilot rewrite is in “shadow mode” in 2020.36, Musk replied, “Not yet.”

Firmware

Tesla rolls out Sirius XM free three-month subscription

Tesla has rolled out a free three-month trial subscription to Sirius XM, in what appears to be the company’s latest push to make its vehicles’ entertainment systems more feature-rich. The new Sirius XM offer will likely be appreciated by owners of the company’s vehicles, especially considering that the service is among the most popular satellite radio services in the country today.

Tesla announced its new offer in an email sent on Monday. An image that accompanied the communication also teased Tesla’s updated and optimized Sirius XM UI for its vehicles. Following is the email’s text.

“Beginning now, enjoy a free, All Access three-month trial subscription to Sirius XM, plus a completely new look and improved functionality. Our latest over-the-air software update includes significant improvements to overall Sirius XM navigation, organization, and search features, including access to more than 150 satellite channels.

“To access simply tap the Sirius XM app from the ‘Music’ section of your in-car center touchscreen—or enjoy your subscription online, on your phone, or at home on connected devices. If you can’t hear SiriusXM channels in your car, select the Sirius XM ‘Subscription’ tab for instruction on how to refresh your audio.”

Tesla has actually been working on Sirius XM improvements for some time now. Back in June, for example, Tesla rolled out its 2020.24.6.4 update, which included some optimizations to its Model S and Model X’s Sirius XM interface. As observed by noted Tesla owner and hacker @greentheonly, the source code of this update revealed that the Sirius XM optimizations were also intended to be released to other areas such as Canada.

Interestingly enough, Sirius XM is a popular feature that has been exclusive to the Model S and X. Tesla’s most popular vehicle to date, the Model 3, has yet to receive the feature. One can only hope that Sirius XM integration will eventually come to the Model 3. Such an update would most definitely be appreciated by the EV community, especially since some Model 3 owners have resorted to using their smartphones or third-party solutions to gain access to the satellite radio service.

The fact that Tesla seems to be pushing Sirius XM rather assertively to its customers suggests that the company may be poised to roll out more entertainment-based apps in the coming months. Apps such as Sirius XM, Spotify, Netflix, and YouTube may seem quite minor when compared to key functions like Autopilot, but they do help round out the ownership experience. In a way, Sirius XM does make sense for Tesla’s next generation of vehicles, especially the Cybertruck and the Semi, both of which would likely be driven in areas that lack LTE connectivity.
