News

Mars travelers can use ‘Star Trek’ Tricorder-like features using smartphone biotech: study

Plans to take humans to the Moon and Mars come with numerous challenges, and the health of space travelers is no exception. One of the ways any ill-effects can be prevented or mitigated is by detecting relevant changes in the body and the body’s surroundings, something that biosensor technology is specifically designed to address on Earth. However, the small size and weight requirements for tech used in the limited habitats of astronauts have impeded its development to date.

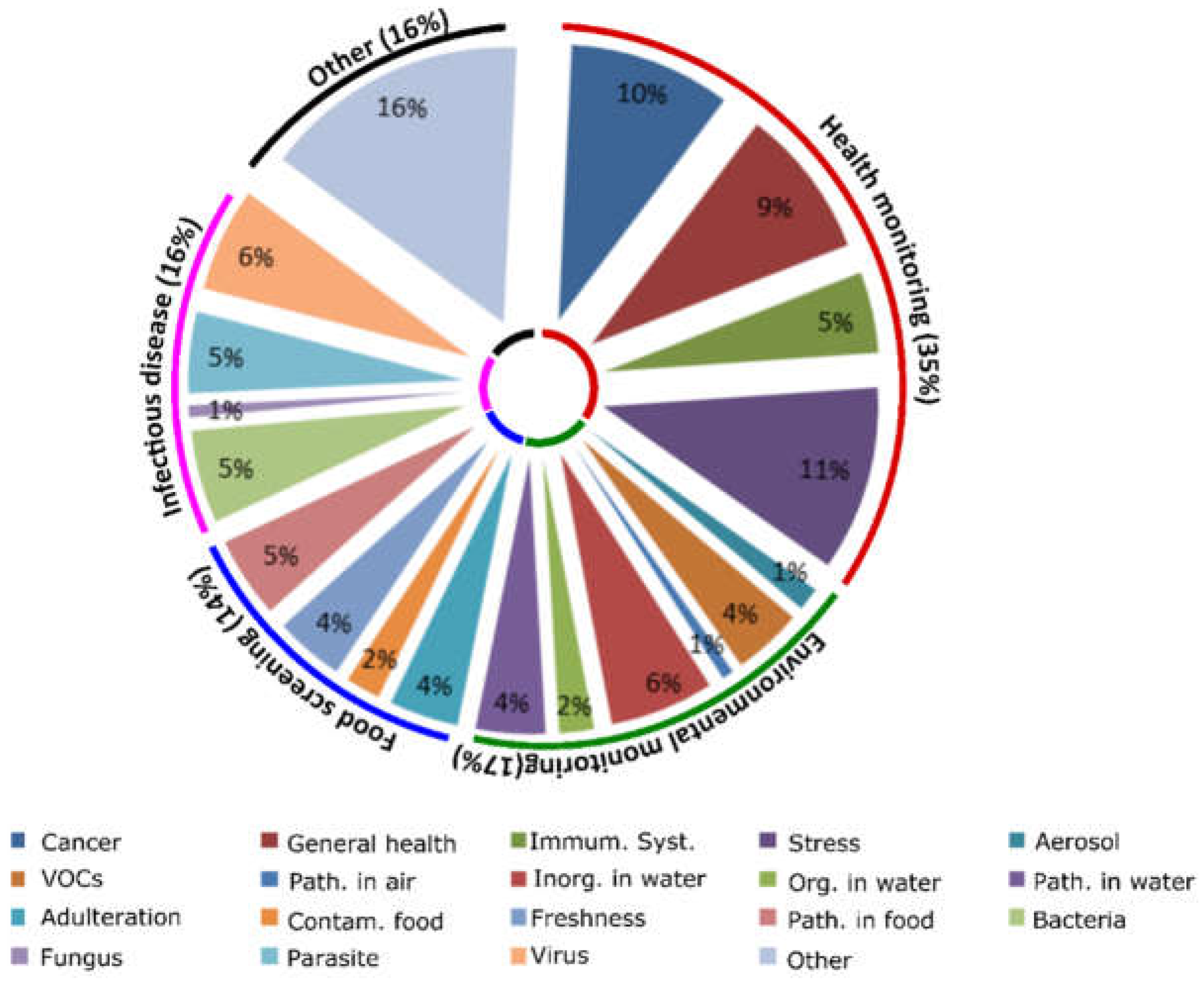

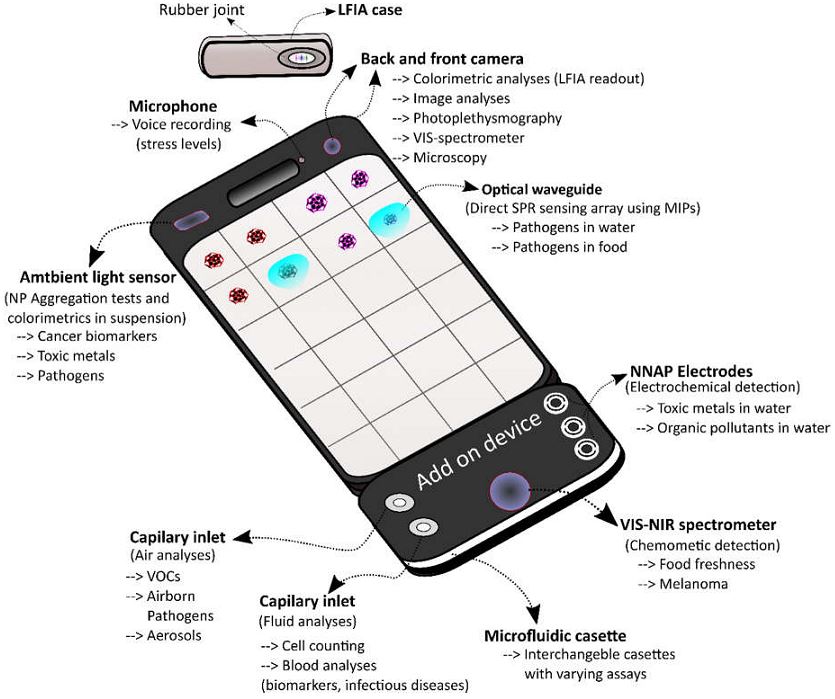

A recent study of existing smartphone-based biosensors by scientists from Queen’s University Belfast (QUB) in the UK identified several candidates currently in use or under development that could also be used in a space or Martian environment. When combined, the technology could provide functionality reminiscent of the “Tricorder” devices used for medical assessments in the Star Trek television and movie franchises, providing on-site information about the health of human space travelers and biological risks present in their habitats.

Biosensors focus on studying biomarkers, i.e., the body’s response to environmental conditions. For example, changes in blood composition, elevations of certain molecules in urine, heart rate increases or decreases, and so forth, are all considered biomarkers. Health and fitness apps tracking general health biomarkers have become common in the marketplace, with brands like Fitbit leading the charge for overall wellness sensing by tracking sleep patterns, heart rate, and activity levels using wearable biosensors. Astronauts and other future space travelers could likely use this kind of tech for basic health monitoring, but there are other challenges that need to be addressed in a compact way.

The projected human health needs during spaceflight have been detailed by NASA on its Human Research Program website, specifically in its web-based Human Research Roadmap (HRR), where the agency publishes its scientific data for public review. Several hazards of human spaceflight are identified, such as environmental and mental health concerns, and the QUB scientists used that information to organize their study. Their research produced a 20-page document reviewing the specific inner workings of the relevant devices found in their searches, complete with tables summarizing each device’s methods and suitability for use in space missions. Here are some of the highlights.

Risks in the Spacecraft Environment

During spaceflight, the environment is a closed system that has a two-fold effect. First, the immune system has been shown to function less effectively on long-duration missions, specifically through lower white blood cell counts. Second, the weightless and non-competitive environment makes it easier for microbes to transfer between humans and increases their growth rates. In one space shuttle era study, the number of microbial cells in the vehicle able to reproduce increased by 300% within 12 days of being in orbit. Also, certain herpes viruses, such as those responsible for chickenpox and mononucleosis, have been reactivated under microgravity, although the astronauts typically didn’t show symptoms despite the presence of active viral shedding (the virus had surfaced and was able to spread).

Frequent monitoring of the spacecraft environment and the crew’s biomarkers is the best way to mitigate these challenges. NASA is addressing these issues to an extent with traditional instruments and equipment to collect data, although oftentimes the data cannot be processed until the experiments are returned to Earth. An attempt has also been made to rapidly quantify microorganisms aboard the International Space Station (ISS) via a handheld device called the Lab-on-a-Chip Application Development-Portable Test System (LOCAD-PTS). However, this device cannot yet distinguish between microorganism species, meaning it can’t tell the difference between pathogens and harmless species. The QUB study found several existing smartphone-based technologies, generally developed for use in remote medical care facilities, that could achieve better identification results.

One of the devices described was a spectrometer (used to identify substances based on the light frequency emitted) which used the smartphone’s flashlight and camera to generate data that was at least as accurate as traditional instruments. Another was able to identify concentrations of an artificial growth hormone injected into cows called recombinant bovine somatotropin (rBST) in test samples, and other systems were able to accurately detect syphilis and HIV as well as the Zika, chikungunya, and dengue viruses. All of the devices used smartphone attachments, some of them with 3D-printed parts. Of course, the types of pathogens detected are not likely to be common in a closed space habitat, but the technology driving them could be modified to meet specific detection needs.

The Stress of Spaceflight

A group of people crammed together in a small space for long periods of time will be impacted by the situation despite any amount of careful selection or training due to the isolation and confinement. Declines in mood, cognition, morale, or interpersonal interaction can impact team functioning or transition into a sleep disorder. On Earth, these stress responses may seem common, or perhaps an expected part of being human, but missions in deep space and on Mars will be demanding and need fully alert, well-communicating teams to succeed. NASA already uses devices to monitor these risks while also addressing the stress factor by managing habitat lighting, crew movement and sleep amounts, and recommending astronauts keep journals to vent as needed. However, an all-encompassing tool may be needed for longer-duration space travels.

As recognized by the QUB study, several “mindfulness” and self-help apps already exist in the market and could be utilized to address the stress factor in future astronauts when combined with general health monitors. For example, the popular Fitbit app and similar products collect data on sleep patterns, activity levels, and heart rates, which could potentially be linked to other mental health apps that use algorithms to recommend self-help programs. The more recent “BeWell” app monitors physical activity, sleep patterns, and social interactions to analyze stress levels and recommend self-help treatments. Other apps, such as “StressSense” and “MoodSense”, use voice patterns and general phone communication data to assess stress levels.

Advances in smartphone technology such as high resolution cameras, microphones, fast processing speed, wireless connectivity, and the ability to attach external devices provide tools that can be used for an expanding number of “portable lab” type functionalities. Unfortunately, despite the possibilities these biosensors could offer for human spaceflight needs, some of the devices have notable limitations that would need to be overcome. In particular, any device utilizing antibodies or enzymes in its testing would risk the stability of its instruments because of radiation from galactic cosmic rays and solar particle events. Biosensor electronics might also be damaged by that radiation. Development of new types of shielding may be necessary to ensure their functionality outside of Earth and Earth orbit or, alternatively, synthetic biology could be a source of testing elements genetically engineered to withstand the space and Martian environments.

Interest in smartphone-based solutions for space travelers has grown over the years as tech-centric societies have moved in the “app” direction overall. NASA itself has hosted a “Space Apps Challenge” for the last 8 years, drawing thousands of participants to submit programs that interpret and visualize data for greater understanding of designated space and science topics. Some of the challenges could be directly relevant to the biosensor field. For example, in the 2018 event, contestants are asked to develop a sensor to be used by humans on Mars to observe and measure variables in their environments; in 2017, contestants created visualizations of potential radiation exposure during polar or near-polar flight.

While the QUB study implied that the combination of existing biosensor technology could be equivalent to a Tricorder, the direct development of such a device has been the subject of its own specific challenge. In 2012, the Qualcomm Tricorder XPRIZE competition was launched, asking competitors to develop a user-friendly device that could accurately diagnose 13 health conditions and capture 5 real-time health vital signs. The winner of the prize, awarded in 2017, was a Pennsylvania-based family team called Final Frontier Medical Devices, now Basil Leaf Technologies, for their DxtER device. According to their website, the sensors inside DxtER can be used independently, one of which is in a Phase 1 Clinical Trial. The second place winner of the competition used a smartphone app to connect its health testing modules and generate a diagnosis from the data acquired from the user.

The march continues to develop the technology humans will need to safely explore regions beyond Earth orbit. Space is hard, but it was hard before we went there the first time, and it was hard before we put humans on the Moon. There may be plenty of challenges to overcome, but as the Queen’s University Belfast study demonstrates, we may already be solving them. It’s just a matter of realizing it and expanding on it.

DIY

Tesla owner fixes common feature complaint with crafty DIY retrofit

Tesla owners have long griped about the wireless phone charger in the Model Y and other vehicles. It often turns smartphones into miniature ovens rather than reliably topping them up.

Software engineer and Model Y owner Michał Gapiński tackled this issue head-on with a clever DIY upgrade, replacing the pad that came standard in his vehicle with the cooled wireless charger pad from the China-made Model YL.

There are several key differences between the U.S.-built Model Y’s wireless charging pad and the one that Tesla has been installing in the Model YL. The one installed in U.S.-built vehicles lacks active cooling and relies on basic heat dissipation, leading to rapid temperature buildup during charging. In contrast, the Model YL integrates a small fan for active cooling.

Will it fit? Fingers crossed, I want a first YL charger deployed in the regular juniper pic.twitter.com/wWDqSNFVkW

— Michał Gapiński (@mikegapinski) June 2, 2026

This design maintains lower temperatures even in warm ambient conditions, though it does not support faster Qi2 charging on iPhones. The connector matches exactly, making physical swaps feasible on compatible consoles, but coding is required to enable full functionality.

Owners in the U.S. have complained about the wireless charging pad, with many reporting that overheating is fairly common. Within 20 or 30 minutes of placing a phone on the wireless charging pad, many have reported overheating messages on their phones, which halt charging and essentially turn the pad into a fancy place to rest your phone.

Many owners have opted to simply plug their phones into a charging cord. Tesla has acknowledged the problem by releasing several solutions for owners, including a relatively new feature that lets owners turn off charging so the pad simply acts as a holder for the phone while driving.

Gapiński said that he sourced the cooled pad from China for under $200.

He removed the existing console charger, swapped in the new unit, confirming a perfect connector fit, and handled the trim differences. Since the parameter isn’t fully secured, he enabled it through custom coding outside official Toolbox.

Connector is identical, she fits, now time to code it. https://t.co/Y9idgDrpCq pic.twitter.com/uwwgq6blg7

— Michał Gapiński (@mikegapinski) June 2, 2026

The fan activates quietly, blending with AC and seat cooling. He reported the installation was effective and the wireless charging pad worked perfectly; it even kept the phone cool, as it stayed at just 86 degrees Fahrenheit. The standard wireless charging pad often brings a phone’s temperature well above 100 degrees, sometimes leaving it hot to the touch.

The retrofit worked, no issues. First Model Y with a cooled wireless charger! No QI2/faster charging on the iPhone but it does not boil the phone even when it is 30 degrees outside.

The fan kicks in, it is not audible especially with the air conditioning and seat cooling. The… https://t.co/JOyR8Tb1Yo pic.twitter.com/kJcYhQIlYq

— Michał Gapiński (@mikegapinski) June 2, 2026

This retrofit highlighted an elegant, owner-driven solution to a factory shortcoming. It is expected that Tesla will begin installing the cooled charging pads into new cars in the U.S. soon, and hopefully, it will offer some sort of retrofit service or kit to owners here who want to use the charging pad effectively.

For those who love to tinker, it’s an accessible upgrade, proving that innovation thrives beyond the production line.

News

Tesla exec says Roadster unveil is soon — for real this time

The Tesla Roadster unveiling could be coming “in a few weeks,” according to the company’s Chief Designer Franz von Holzhausen, who said at the Tesla Takeover Europe Event in Austria that the all-electric hypercar could finally make its way to the production line after years of anticipation.

Von Holzhausen delivered the news just days after The Information reported that Tesla planned to push the Roadster unveiling to August. At different points, it had been slated for both April and May of this year, but now it seems the company is leaning toward a late-summer event to cap off the heat with perhaps its most anticipated vehicle of all time.

🚨 Tesla Chief Designer Franz Von Holzhausen, speaking to the crowd at Tesla Takeover Europe, said at the event that the Roadster is coming “in a few weeks,”

Multiple attendees have confirmed this pic.twitter.com/B1v6yb2Geq

— TESLARATI (@Teslarati) June 6, 2026

Von Holzhausen has been with Tesla since 2008, and has played a pivotal role in the iconic design language the company has utilized with its vehicles. He spoke to the crowd in Austria virtually, and his comments injected fresh excitement into a project that has been plagued by delays for nine years.

The second-generation Roadster promises to redefine supercar standards. Tesla’s website still highlights ambitious targets: 0-60 mph in under 1.9 seconds (with optional SpaceX thruster pack potentially achieving 1.1 seconds or less), a top speed exceeding 250 mph, and a range of about 620 miles.

Equipped with a tri-motor all-wheel-drive setup delivering over 1,000 horsepower, the four-seater aims to blend blistering acceleration, everyday usability, and innovative features like cold gas thrusters for short-hop capabilities, technology that ties the project to SpaceX.

But years after the company promised to start production, which was slated for 2020, the timeline for the Roadster has continued to shift.

Tesla has strung along those who have put $50,000 deposits down, as well as fans and enthusiasts who have long waited for the company to deliver a car truly designed for the human driver, and not autonomy. The Roadster is more than just a halo vehicle for Tesla; it showcases the company’s ability to push the boundaries while incorporating synergies from other Musk companies.

However, it has to make it to production, which is something Musk and Co. have pushed back repeatedly.

As Tesla navigates Robotaxi development and broader autonomy goals, the Roadster serves as a reminder of its performance roots. If von Holzhausen’s timeline holds, fans could witness this engineering marvel by late June or early July 2026. Whether a full unveiling, demo, or initial deliveries, it marks a milestone for electric supercars.

News

Tesla Roadster unveiling gets pushed again, but new event details emerge

Tesla has reportedly pushed the unveiling of the Roadster once again, but new details have also emerged about the event the company is planning.

The Information reported this morning that Tesla will now unveil, for the second time, the next-generation Roadster in August, a further delay from the multiple timelines that the company had previously stated.

The report has not been confirmed or denied by Tesla in any capacity.

It also states the unveiling event will take place in Texas, the same place that Tesla executives revealed in May would be the place of manufacture for the company’s highly anticipated supercar, which boasts a top speed of over 250 mph and 620 miles of range, according to its website.

Tesla is also expected to showcase the SpaceX package, which will be used for faster acceleration and potentially hovering capabilities, at the unveiling event, the report states. Musk has always planned for this to happen, but now it seems it is more realistic than ever.

The report also states the Roadster unveiling is planned for August pic.twitter.com/By26XZIJzU

— TESLARATI (@Teslarati) June 5, 2026

The Roadster has had its unveiling date and manufacturing date pushed back on many occasions. It was set to start production in 2020, but the COVID-19 pandemic crippled supply chain operations, forcing Tesla to push its timeline back considerably.

However, COVID has been over for some time, and Tesla has still not managed to successfully schedule and execute an unveiling event, which is something fans and enthusiasts, as well as those who have put down a $50,000 deposit, have been waiting for.

The vehicle was close to completion last year, but Musk truly wanted Lars Moravy and Franz von Holzhausen to push the limits of the Roadster. In July of last year, Moravy said:

“Roadster is definitely in development. We did talk about it last Sunday night. We are gearing up for a super cool demo. It’s going to be mind-blowing; We showed Elon some cool demos last week of the tech we’ve been working on, and he got a little excited.”

It is important to note two things: Tesla has not confirmed these details, and the company has regularly pushed these dates back. Until Tesla sends out formal invitations with a concrete date, taking any unveiling event reports with a grain of salt is a good idea.