Tesla’s AI Day is here. In a few minutes, Tesla watchers will see executives like Elon Musk provide an in-depth discussion of the company’s AI efforts, covering not just its automotive business but its energy business and beyond. AI Day promises to be yet another tour de force of technical information from the electric car manufacturer. Thus, it is no surprise that there is a lot of excitement in the EV community heading into the event.

Tesla has kept the details of AI Day behind closed doors, so the specifics of the actual event are scarce. That being said, an AI Day agenda sent to attendees indicated that they could expect to hear Elon Musk speak during a live keynote, speak with Andrej Karpathy and the rest of Tesla’s AI engineers, and participate in breakout sessions with the teams behind Tesla’s AI development.

Similar to Autonomy Day and Battery Day, Teslarati will be following along with AI Day’s discussions to provide you with an updated account of the highly anticipated event. Please refresh this page from time to time, as notes, details, and quotes from Elon Musk’s keynote and the discussions that follow will be posted here.

Simon 19:40 PT – A question about the use cases for the Tesla Bot was asked. Musk notes that the Tesla Bot would start with boring, repetitive work, or work that people would least like to do.

Simon 19:25 PT – A question is asked about AI in manufacturing and how it potentially relates to the “Alien Dreadnaught” concept. Musk notes that most of Tesla’s manufacturing today is already automated. He also notes that humanoid robots will be built either way, so it would be great for Tesla to take on this project and do it safely. “We’re making the pieces that would be useful for a humanoid robot, so we should probably make it. If we don’t, someone else will, and we want to make sure it’s safe,” Musk said.

Simon 19:15 PT – And the Q&A starts. First question involves open-sourcing Tesla’s innovations. Musk notes that it’s pretty expensive to develop all this tech, so he’s not sure how things could be open-sourced. But if other car companies would like to license the system, that could be done.

Simon 19:11 PT – There will really be a “Tesla Bot.” It would be built by humans, for humans. It would be friendly, and it would eliminate dangerous, repetitive, boring tasks. This is still pretty darn unreal. It uses the systems that are currently being developed for the company’s vehicles. “There will be profound applications for the economy,” Musk said.

Simon 19:06 PT – New products! A whole Tesla suit?! After a fun skit, Elon says the “Tesla Bot” would eventually be real.

Simon 19:00 PT – What is crazy is that Dojo is not even done. This is just what it is today. Dojo is still evolving, and it is going to be way more powerful in the future. Now, it’s Elon Musk’s turn. What’s next for Tesla beyond vehicles?

Simon 19:00 PT – Venkataramanan teases the ExaPOD. Yet another revolutionary solution from Tesla. With all this, it is evident that Tesla’s approach to autonomy is on a whole other level. It would not be surprising if it takes Wall Street and the market a few days to fully absorb what is happening here.

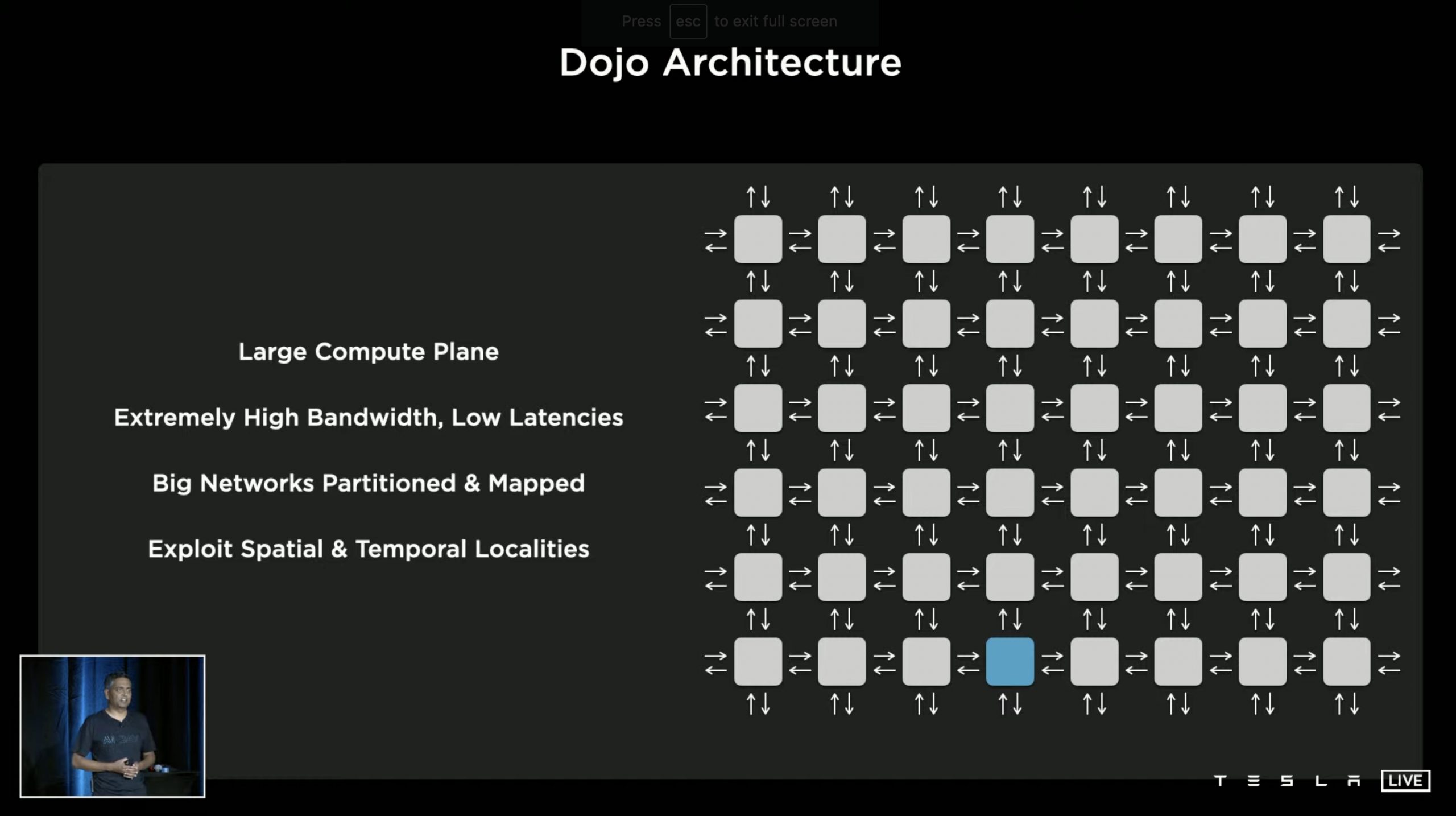

Simon 18:55 PT – The specs of Dojo are insane. Behind its beastly specs, it seems that Dojo’s full potential lies in the fact that all this power is being used to do one thing: to make autonomous cars possible. Dojo is a pure learning machine, with more than 500,000 training nodes being built together. Nine petaflops of compute per tile, 36 terabytes per second of off-tile bandwidth. But this is just the tip of the iceberg for Dojo.

Simon 18:50 PT – Ganesh Venkataramanan, Project Dojo’s lead, takes the stage. He states that Elon Musk wanted a super-fast training computer to train Autopilot. And thus Project Dojo was born. Dojo is a distributed compute architecture connected by network fabric. It also has a large compute plane, extremely high bandwidth with low latencies, and big networks that are partitioned and mapped, to name a few.

Simon 18:45 PT – Milan Kovac, Tesla’s Director of Autopilot Engineering, takes the stage. He notes that he will discuss how neural networks are run in the company’s cars. He notes that Tesla’s systems require supercomputers.

Simon 18:40 PT – Ashok notes that simulations have helped Tesla a lot already. They have, for example, helped the company improve pedestrian, bicycle, and vehicle detection and kinematics. The networks in the vehicles were trained on 371 million simulated images and 480 million cuboids.

Simon 18:35 PT – Ashok notes that these strategies ultimately helped Tesla retire radar from its FSD and Autopilot suite and adopt a pure vision model. A comparison between a radar+camera system and pure vision shows just how much more refined the company’s current strategy is. The executive also touched on how simulations help Tesla develop its self-driving systems. He states that simulations help when data is difficult to source, difficult to label, or in a closed loop.

Simon 18:30 PT – Ashok returns to discuss Auto Labeling. Simply put, there is so much labeling that needs to be done that it’s impossible to do manually. He shows how roads and other items on the road are “reconstructed” from a single car that’s driving. This effectively allowed Tesla to label data much faster, while allowing vehicles to navigate safely and accurately even when occlusions are present.

Simon 18:25 PT – Karpathy returns to talk about manual labeling. He notes that manual labeling that’s outsourced to third-party firms is not optimal. Thus, in the spirit of vertical integration, Tesla opted to establish its own labeling team. Karpathy notes that in the beginning, Tesla was using 2D image labeling. Eventually, Tesla transitioned to 4D labeling, where the company could label in vector space. But even this was not enough, and thus, auto labeling was developed.

Simon 18:23 PT – The executive states that traffic behavior is extremely complicated, especially in several parts of the world. Ashok notes that this is partly illustrated by parking lots and how complex they actually are. Summoning a car from a parking lot, for example, used to take 400k nodes to navigate, resulting in a system whose performance left much to be desired.

Simon 18:18 PT – Ashok notes that when driving alongside other cars, Autopilot must not only think about how it would drive, it must also think about how other cars would operate. He shows a video of a Tesla navigating a road and dealing with multiple vehicles to demonstrate this point.

Simon 18:15 PT – Director of Autopilot Software Ashok Elluswamy takes the stage. He starts off by discussing some key problems in planning in both non-convex and high-dimensional action spaces. He also shows Tesla’s solution to these issues, a “Hybrid Planning System.” He demonstrates this by showing how Autopilot performs a lane change.

Simon 18:10 PT – Karpathy’s discussion notes that today, Tesla’s FSD strategy is a lot more cohesive. This is demonstrated by the fact that the company’s vehicles can effectively draw a map in real time as they drive. This is a massive difference compared to the pre-mapped strategies employed by rivals in both the automotive and software fields, like Super Cruise and Waymo.

To solve several problems encountered over the last few years with the previous suite, Tesla re-engineered their NN learning from the ground up and utilized a multi-head route, camera calibrations, caching, queues, and optimizations to streamline all tasks.

(heavily simplified) pic.twitter.com/LG2TRgjxip

— Teslascope (@teslascope) August 20, 2021

Simon 18:05 PT – The AI Director discusses how Tesla practically re-engineered its neural network learning from the ground up and utilized a multi-head route. This includes camera calibrations, caching, queues, and optimizations to streamline all tasks. Do note that this is an extremely simplified version of Karpathy’s discussion so far.

Simon 18:00 PT – Karpathy covers more challenges that are involved in even the basics of perception. Needless to say, AI Day is quickly proving to be Tesla’s most technical event right off the bat. That said, multi-camera networks are amazing. They’re just a ton of work, but they may very well be a silver bullet for Tesla’s predictive efforts.

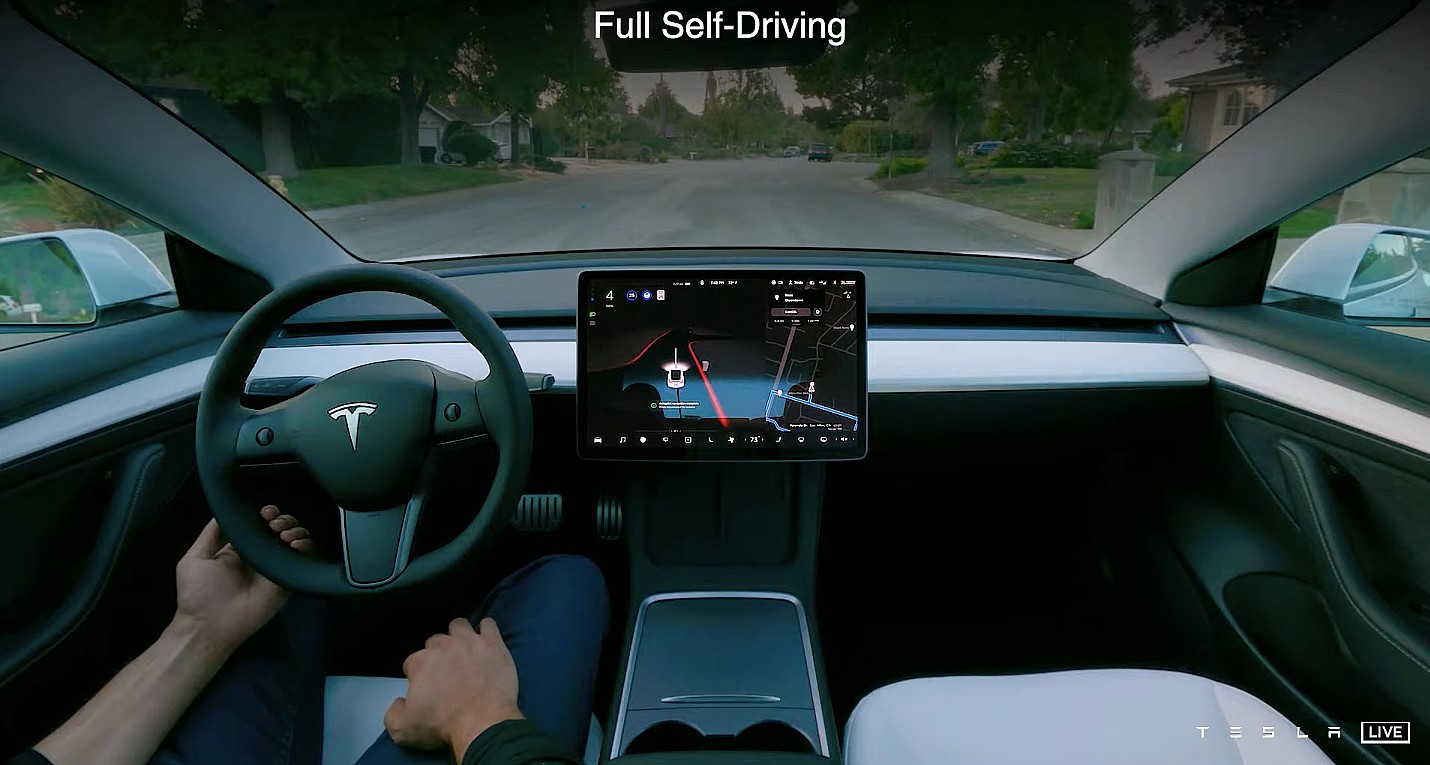

Simon 17:56 PT – Karpathy showcases a video of how Tesla used to process its image data in the past. He shows a popular video for FSD that has been shared in the past. He notes that while great, such a system proved to be inadequate, and this is something that Tesla learned when it launched Smart Summon. While per-camera detection is great, the vector space proves inadequate.

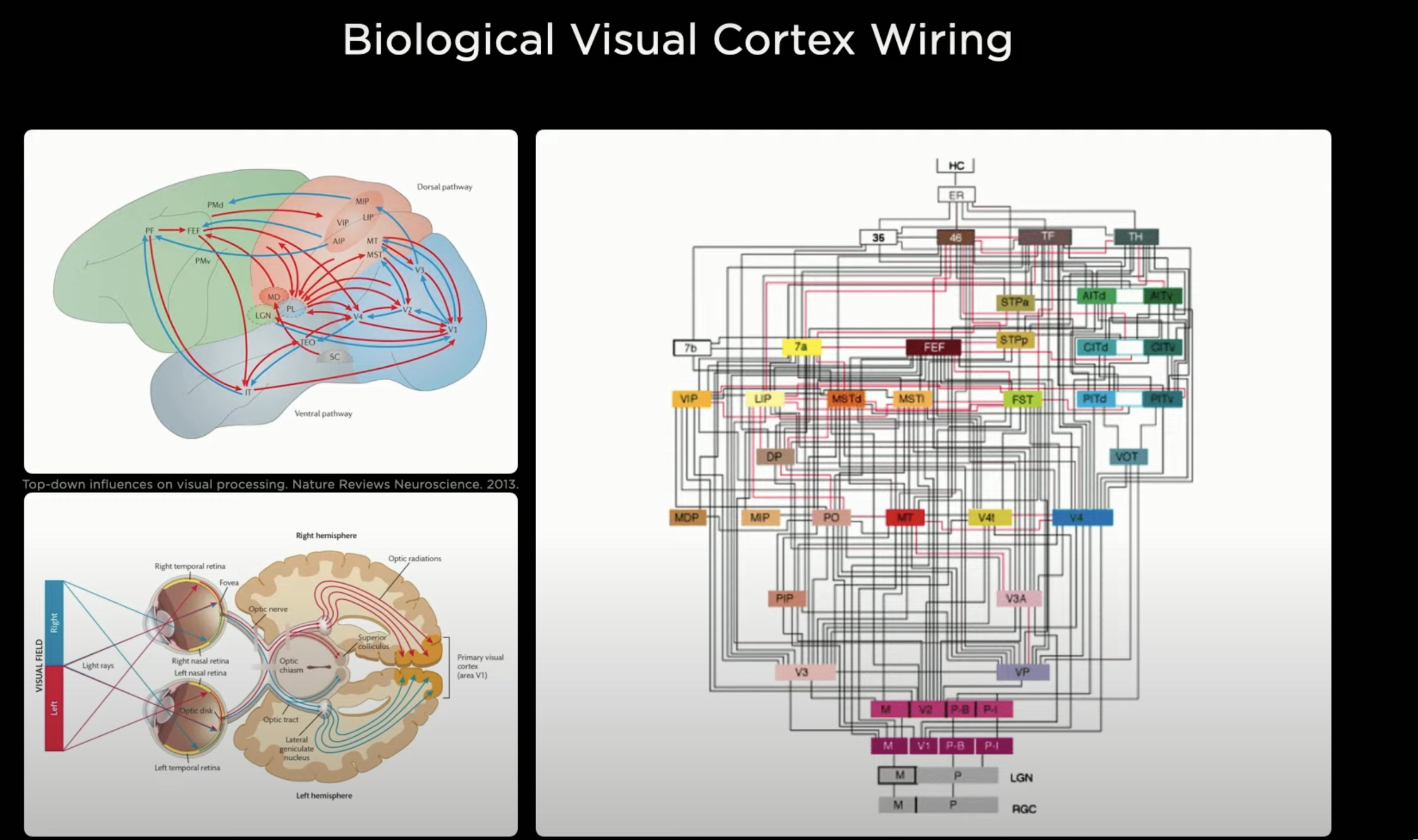

Simon 17:55 PT – Karpathy noted that when Tesla designs the visual cortex in its car, the company models it after how biological vision works in the eyes. He also touches on how Tesla’s visual processing strategies have evolved over the years, and how it is done today. The AI Director also touches on Tesla’s “HydraNets,” which are named for their multi-task learning capabilities.
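For readers unfamiliar with multi-task learning, here is a minimal sketch of the general idea: one shared feature extractor feeding several task-specific heads. This is not Tesla’s code. The task names, weights, and the `hydra_forward` function are all hypothetical and exist only to illustrate the pattern.

```python
# Hypothetical multi-task layout: one shared backbone, several task heads.
# Nothing here comes from Tesla; names and numbers are illustrative only.

def backbone(pixels):
    """Shared feature extractor, computed once and reused by every head."""
    mean = sum(pixels) / len(pixels)
    spread = max(pixels) - min(pixels)
    return [mean, spread]

def make_head(weights, bias):
    """Build a small linear head that reads the shared features."""
    def head(features):
        return sum(w * f for w, f in zip(weights, features)) + bias
    return head

# Assumed task names; each head has its own weights but shares the backbone.
heads = {
    "object_detection": make_head([0.5, 0.1], 0.0),
    "traffic_lights": make_head([-0.2, 0.3], 0.1),
    "lane_prediction": make_head([0.05, -0.4], 0.2),
}

def hydra_forward(pixels):
    features = backbone(pixels)  # single pass through the shared trunk
    return {task: head(features) for task, head in heads.items()}

outputs = hydra_forward([0.1, 0.4, 0.9, 0.6])
for task, value in outputs.items():
    print(f"{task}: {value:.3f}")
```

The point of the layout is that the expensive shared computation runs once per input, while each task only adds a small head on top.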

Simon 17:51 PT – Karpathy starts off by discussing the visual component of Tesla’s AI, as characterized by the eight cameras used in the company’s vehicles. The AI Director notes that the AI can be thought of as a biological being, and it’s built from the ground up, including its synthetic visual cortex.

Simon 17:48 PT – Elon Musk takes the stage. He apologizes for the event’s delay. He jokes that Tesla probably needs AI to solve these “technical difficulties.” The CEO highlights that AI Day is a recruitment event. He then calls up Tesla’s head of AI, Andrej Karpathy. There’s no better person to discuss AI.

Simon 17:45 PT – We’re here watching the AI Day FSD preview video and we can’t help but notice that… are those Waypoints?!

Simon 17:38 PT – Looks like we’ve got an Elon sighting! And a preview video too! Here we go, folks!

We’ve got an Elon sighting

— Rob Maurer (@TeslaPodcast) August 20, 2021

Simon 17:30 PT – A 30-minute delay. We haven’t seen this much delay in quite a bit.

Simon 17:20 PT – It’s a good thing that Tesla has great taste in music. Did Grimes mix this track?

Simon 17:15 PT – We’re 15 minutes in. “Elon Time” is going strong on AI Day. To be honest, though, this music would fit the “Rave Cave” in Giga Berlin this coming October.

Simon 17:10 PT – A good thing to keep in mind is that AI Day is a recruitment event. Some food for thought just in case the discussions take a turn for the extremely technical. AI Day is designed to attract individuals who speak Tesla’s language in its rawest form. We’re just fortunate enough to come along for the ride.

Tesla Board Member Hiro Mizuno sums it up in this tweet pretty well.

Anybody passionate about real world AI !! https://t.co/ydaWQlkE4O

— HIRO MIZUNO (@hiromichimizuno) August 20, 2021

Simon 17:05 PT – I guess AI Day is starting on “Elon Time?” We’re on to the next track of chill music.

Simon 17:00 PT – And with 5 p.m. PT here, the music is officially live on the AI Day live stream. Looks like we’re in for a bit of a wait. Wonder how many minutes it will take before it starts? Gotta love this chill music though.

Simon 16:58 PT – While waiting, I can’t help but think that a ton of TSLA bears and Wall Street would likely not understand the nuances of what Tesla would be discussing today. Will Tesla go three-for-three? It was certainly the case with Battery Day and Autonomy Day.

Made it pic.twitter.com/aAWqxgf0bP

— Johnna (@JohnnaCrider1) August 19, 2021

Simon 16:55 PT – T-minus 5 minutes. Some attendees of AI Day are now posting some photos on Twitter, but it seems like photos and videos are not allowed at the actual venue of the event. Pretty much expected, I guess.

Simon 16:50 PT – Greetings, everyone, and welcome to another Live Blog. This is Tesla’s most technical event yet, so I expect this one to go extremely in-depth on the company’s AI efforts and the technology behind it. We’re pretty excited.

Elon Musk

Tesla tipped its hand at where Robotaxi is heading next

In the world of autonomous ride-hailing, there are only a handful of names. Each of those few companies keeps its strategy close to the vest to keep the competition guessing. Tesla, on the other hand, has already tipped its hand about where it is headed next.

Tesla has signaled its next major push in the autonomous ride-hailing market by filing for an Autonomous Vehicle Network Company permit in Nevada (Docket 26-05015). Through Tesla Robotaxi, LLC, the company seeks approval to operate up to 5,000 robotaxis in Clark County, including high-traffic areas like Las Vegas and Henderson airports, within the first 12 months of launch.

This filing builds on Tesla’s earlier testing approvals from the Nevada DMV in September 2025 and preparations such as maintenance hubs in the Las Vegas area. Nevada represents a strategic expansion into a major tourist destination, where high visitor volumes could drive strong utilization and showcase the reliability of unsupervised autonomy to a broad audience.

We’d have to assume this means Tesla is targeting Las Vegas, and it’s a great move from a business perspective.

Vegas is such a melting pot of people from all around the country and the world. It will expose people from all corners of the globe to Tesla’s autonomy capabilities https://t.co/Qz3fQmhULF pic.twitter.com/Du5pj2RyWC

— TESLARATI (@Teslarati) June 6, 2026

Approval would mark a significant step toward commercial operations in a new state, following progress in Texas.

Tesla’s shareholder decks and earnings calls have clearly outlined these ambitions. In the Q4 2025 shareholder deck, the company listed planned Robotaxi coverage for the first half of 2026, explicitly naming Las Vegas alongside Phoenix, Miami, Orlando, and Tampa, with Dallas and Houston already advancing. Austin was noted as “ramping unsupervised,” while the Bay Area remained in safety-driver mode.

By Q1 2026, the deck updated statuses to reflect launches in Dallas and Houston, with “preparations underway” for the remaining cities, including Las Vegas. Paid Robotaxi miles nearly doubled sequentially in Q1, underscoring momentum even as broader timelines adjusted slightly for regulatory and operational readiness.

On earnings calls, CEO Elon Musk and executives have emphasized a phased rollout prioritizing safety. Unsupervised operations in Texas have shown strong results with no reported accidents or injuries in the program. Tesla continues groundwork in additional major U.S. metros through testing and permitting, positioning it to scale quickly once approvals clear.

This Nevada move aligns with Tesla’s vision of transforming from an EV maker into an AI and robotics leader. The forthcoming Cybercab, which started production at Giga Texas in April, is expected to eventually dominate the fleet, replacing many Model Y vehicles and driving down costs to enable affordable rides.

For investors and the industry, this signals Tesla’s intent to dominate key Sun Belt and tourist markets where weather, regulations, and demand favor rapid scaling. Success in Las Vegas could validate the model for denser urban and high-tourism environments, accelerating the shift toward a future where robotaxis generate meaningful revenue.

Las Vegas will also expand the general public’s knowledge of Tesla’s capabilities, helping people experience driverless ride-hailing from several companies during their time on The Strip.

News

Tesla Model 3’s cheapest trim just got a major accolade

The Tesla Model 3’s cheapest trim level just got a major accolade, as Edmunds just revealed the Rear-Wheel-Drive trim of the all-electric sedan is the most efficient EV that is currently in production.

The 2026 Tesla Model 3 Rear-Wheel-Drive not only beat its EPA-estimated range by 30 miles, but it also bested its efficiency mark by 13.2 percent. The Model 3 tested by Edmunds traveled 393 miles, beating its EPA rating by 8.3 percent, while it returned 21.7 kWh per 100 miles, or 4.61 mi/kWh.
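The figures above can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted from the Edmunds result. It assumes the 13.2 percent figure refers to a reduction in energy use per 100 miles relative to the EPA estimate, which is an interpretation rather than something the report is quoted as saying here.

```python
# Figures quoted from the Edmunds test above.
tested_range_mi = 393
range_gain_mi = 30             # miles beyond the EPA-estimated range
tested_kwh_per_100mi = 21.7

# EPA range implied by the quoted gain.
epa_range_mi = tested_range_mi - range_gain_mi

# Percentage by which the tested range beat the EPA range (about 8.3).
range_gain_pct = 100 * range_gain_mi / epa_range_mi

# Convert consumption to miles per kWh (about 4.61).
mi_per_kwh = 100 / tested_kwh_per_100mi

# Assumption: 13.2% is a reduction in kWh per 100 miles versus the EPA figure.
implied_epa_kwh_per_100mi = tested_kwh_per_100mi / (1 - 0.132)

print(f"Implied EPA range: {epa_range_mi} mi")
print(f"Range gain: {range_gain_pct:.1f}%")
print(f"Efficiency: {mi_per_kwh:.2f} mi/kWh")
print(f"Implied EPA consumption: {implied_epa_kwh_per_100mi:.1f} kWh/100 mi")
```

Under that assumption, the quoted range, percentage, and efficiency numbers are consistent with one another.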

Beating those two metrics is especially pertinent when it comes to EV ownership and lowering the cost of ownership relative to ICE counterparts across the board. The real savings come from reducing the cost per mile, especially for high-mileage drivers.

Edmunds stated in its report and review that the process it uses to test EV efficiency is aimed at giving “the most accurate representation of a car’s real-world range.” The assessment uses a strict route that features 60 percent city and 40 percent highway driving, and an average speed of 40 MPH across the trip.

Edmunds also drives each car within 5 MPH of all posted speed limits, and the climate control is set on Auto at 72 degrees to ensure even testing. In other words, Edmunds does not use methods to maximize efficiency, and instead tries to make it reasonable to achieve the same ratings yourself.

In comparison to other EVs, the Model 3 beat the 2026 Mercedes-Benz CLA 350, which went 385 miles, as well as the 2026 Audi A6 Sportback E-tron Prestige AWD, which traveled 392 miles. Only the Mercedes-Benz CLA 250+ traveled farther, making it an impressive 434 miles on a charge.

However, the Tesla Model 3 RWD’s efficiency is “unmatched” because of its incredibly low energy usage per mile.

🚨 Tesla Model 3 RWD:

-At $36,990, it is $9,000 cheaper than the average transaction price for a new car ($46,023 via KBB)

-Was 13.2% more efficient than its EPA estimate

-Traveled 393 miles on a charge despite its 363-mile EPA range https://t.co/Grov2hXqpa pic.twitter.com/Zl8rnZZLIB

— TESLARATI (@Teslarati) June 8, 2026

The Model 3 Rear-Wheel-Drive might be the best bang-for-your-buck EV if you’re looking to buy new and want access to features like Full Self-Driving, while also being mindful of efficiency. This trim of the Model 3 is also priced over $9,000 cheaper than what Kelley Blue Book says the average transaction price for a new car was in May 2026, which sits at $46,023.

If you’re looking for something with more speed, an All-Wheel-Drive drivetrain, or more premium features, the Premium trims of the Model 3 currently come with one year of Free Supercharging.

Investor's Corner

SpaceX IPO set to provide massive $11.6B windfall for teacher pension plan

The Ontario Teachers’ Pension Plan (OTPP) stands to reap one of the most extraordinary returns in pension fund history thanks to a bold 2019 investment in SpaceX.

According to a recent report from The Globe and Mail, the Toronto-based fund invested roughly $300 million CAD (~$220 million USD at the time) in Elon Musk’s space company as its inaugural deal through the Teachers’ Innovation Platform.

At SpaceX’s anticipated $1.75 trillion IPO valuation, set for a mid-June debut on Nasdaq under ticker $SPCX, that stake could now be worth up to $11.6 billion USD. This would represent a roughly 50x return and easily become OTPP’s most successful single investment ever.

The fund manages $279 billion in assets for approximately 346,000 working and retired teachers in Ontario, potentially delivering an average boost of around $33,500 per member if fully realized.
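As a quick sanity check on the figures above, the return multiple and the per-member amount can be reproduced directly from the quoted numbers. This sketch assumes the roughly $220 million USD conversion of the original investment is the right basis for comparison with the $11.6 billion USD stake value, and it treats the per-member figure as a simple average.

```python
# Figures quoted in the article above (USD unless noted).
initial_investment_usd = 220e6   # ~$300M CAD at the time, per the report
stake_value_usd = 11.6e9         # at the anticipated IPO valuation
members = 346_000                # working and retired teachers

# Return multiple on the original investment (roughly 50x).
return_multiple = stake_value_usd / initial_investment_usd

# Simple average if the full stake value were spread across all members.
value_per_member = stake_value_usd / members

print(f"Return multiple: {return_multiple:.1f}x")
print(f"Average per member: ${value_per_member:,.0f}")
```

The result is a little above the rounded 50x figure and lines up with the quoted average of around $33,500 per member.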

SpaceX has filed its S-1 and plans to price shares at $135 each, aiming to raise a record $75 billion in what would be the largest IPO in history, surpassing Saudi Aramco. The company reported $18.67 billion in revenue for 2025, driven primarily by Starlink satellite internet growth and NASA contracts, though it continues to post significant losses tied to ambitious R&D in Starship and AI initiatives.

Important pieces moving forward include:

- Starlink Expansion: The satellite broadband service is scaling rapidly, targeting global connectivity, especially in underserved rural and remote areas. This segment offers massive recurring revenue potential as numbers climb.

- Starship and Reusability Leadership: SpaceX’s fully reusable Starship aims to slash launch costs dramatically, enabling frequent missions, Mars ambitions, and lucrative government/defense contracts. Success here could unlock exponential growth.

- AI and Diversification: Recent moves, including ties to xAI, position SpaceX in high-growth AI infrastructure, broadening beyond traditional aerospace.

- Valuation Scrutiny: While the $1.75 trillion target excites investors, analysts like Morningstar value the company closer to $780 billion, citing high multiples (around 90x trailing revenue) and execution risks. A 180-day lockup period will prevent early investors like OTPP from selling immediately post-IPO.
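The valuation figures quoted in this article can be put side by side with a short calculation. The sketch below assumes that “trailing revenue” means the reported 2025 revenue and that the full raise is priced at $135 per share. Both are simplifying assumptions for illustration.

```python
# Figures quoted in the article (USD).
target_valuation = 1.75e12
morningstar_valuation = 780e9
revenue_2025 = 18.67e9          # assumed to be the "trailing revenue"
raise_target = 75e9
share_price = 135               # assumed to apply to the whole raise

# Price-to-revenue multiples at each valuation.
target_multiple = target_valuation / revenue_2025
morningstar_multiple = morningstar_valuation / revenue_2025

# Implied number of shares sold and the slice of the company they represent.
shares_offered = raise_target / share_price
offered_fraction = raise_target / target_valuation

print(f"Multiple at IPO target: {target_multiple:.1f}x revenue")
print(f"Multiple at Morningstar value: {morningstar_multiple:.1f}x revenue")
print(f"Implied shares offered: {shares_offered / 1e6:.1f} million")
print(f"Share of company offered: {offered_fraction:.1%}")
```

On these assumptions, the IPO target works out to a little over 90 times 2025 revenue, while the Morningstar figure implies less than half that multiple.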

The irony has not been lost on observers. Ontario’s government previously canceled a Starlink rural internet contract amid political tensions involving Musk, yet the pension fund’s savvy investment, made when SpaceX was valued around $33-36 billion and Starlink was nascent, delivers outsized gains independent of politics.

For OTPP, this windfall strengthens its already solid 111 percent funding ratio and underscores the value of patient, innovation-focused capital allocation.

For SpaceX, the IPO marks a new chapter: greater transparency, access to public markets for talent retention and growth capital, and heightened pressure to deliver on its multi-planetary vision.

All eyes are fixed on whether SpaceX can justify its lofty valuation through sustained execution. For Ontario teachers, the returns are already stellar, but SpaceX, like other Musk companies in the past, has plenty of things to prove. Perhaps the most ideal person for the job is at the helm, hoping to bring the company to a massive valuation.