News

Tesla announces AI Day will be held in Palo Alto

Tesla announced that its second AI Day will be held in Palo Alto. Many had speculated that it would be held in Austin because of all the hard work employees have put into building Gigafactory Texas. The 10-million-square-foot building is a sight to behold for those of us who have seen it in person, and even more so if you’ve been inside it.

While most of the U.S. (the East and Gulf Coasts, at least) was sleeping, Tesla posted a late-night tweet with the caption “AI Day 2022 on Sept 30 🤖”. The image included Palo Alto, and the little robot emoji hints that Tesla’s plans to reveal a working Optimus prototype are on track.

When I interviewed Tesla CEO Elon Musk, he told me that I would be able to attend in person again this year. Last year, Tesla invited me to attend at the last minute, and I scrambled to get there. To be honest, I am not an AI expert, and the Tesla staff I met were very accommodating and kind, and they tried their best to explain things to me on a level I could understand.

I learned a lot while I was there. For those who doubt Tesla’s progress with Full Self-Driving, I think you need not only to watch the live streams of this year’s and last year’s presentations but also to take notes.

Last year, Tesla had its beautiful Cybertruck on display and provided snacks for all of the attendees.

Last year, I learned more about AI and its impact at Tesla than I’ve ever learned in my entire life. There, Tesla showed that it’s much more than an electric car company. It has deep AI work at the hardware level, the inference level, and the training level. That day, Tesla established itself as a leader in real-world AI, and its FSD Beta software is just one application of real-world AI.

One key takeaway from last year’s AI Day, overshadowed by Tesla’s announcement that it was making a robot, was something that then-Director of AI Andrej Karpathy said.

“What I find kind of fascinating about this is we are effectively building a synthetic animal from the ground up. So the car can be thought of as an animal. It moves around, it senses the environment, acts autonomously and intelligently, and we are building all of the components from scratch and in-house.”

“When we designed the visual cortex of the car, we also wanted to design the neural architecture of how the information flows in the system.”

Not only will we learn more about Tesla’s new Optimus Bot, but I think we will also learn quite a bit more about the Occupancy Networks that enable the car to perceive the space around it as a human or animal would. Tesla’s Autopilot Software Director, Ashok Elluswamy, recently shared a deep dive into Occupancy Networks, and you can read more about that here.

Note: Johnna is a Tesla shareholder and supports its mission.

Your feedback is important. If you have any comments, concerns, or see a typo, you can email me at johnna@teslarati.com. You can also reach me on Twitter @JohnnaCrider1

Elon Musk

Elon Musk confirms Grok 4 launch on July 9 with livestream event

The rollout will be accompanied by a livestream at 8 p.m. Pacific Time.

Elon Musk has officially confirmed that Grok 4, the latest version of xAI’s large language model, will launch on July 9. The rollout will be accompanied by a livestream at 8 p.m. Pacific Time, hosted on xAI’s official account on X.

xAI goes straight to Grok 4

Back in May, leaks indicated that xAI was getting ready to ship Grok 3.5. Considering Musk’s recent comments, however, it appears that the artificial intelligence startup is focusing on the large language model’s fourth iteration instead. As noted in a Financial Express report, users on X have spotted references to Grok 4 in the lead-up to the update’s launch, such as “grok-4-prod-mimic” and “Grok 4 Code.”

Musk’s Grok 4 announcement comes as AI competition intensifies between major players including OpenAI, Google, and xAI. With Musk’s Colossus supercomputer fully operational in Memphis, xAI appears to be accelerating its AI product roadmap.

Musk pushes Grok toward political neutrality

Grok 4’s launch also follows a recent controversy involving political bias, as noted in a CNN report. Last week, Grok responded to a user on X stating that political violence in the U.S. since 2016 had come more from the political right than the left. The chatbot noted in a later reply that its answer was based on information from sources like Reuters, the Journal of Democracy, and University of Maryland studies.

Musk stated that Grok’s response was a “major fail.” “Major fail, as this is objectively false. Grok is parroting legacy media. Working on it,” he wrote in a post on X. By the end of June, Musk noted that he was “grinding all night with the xAI team” and that they were making “good progress.” He also stated that the model “Will be called Grok 4. Release just after July 4th. Needs one more big run for a specialized coding model.”

News

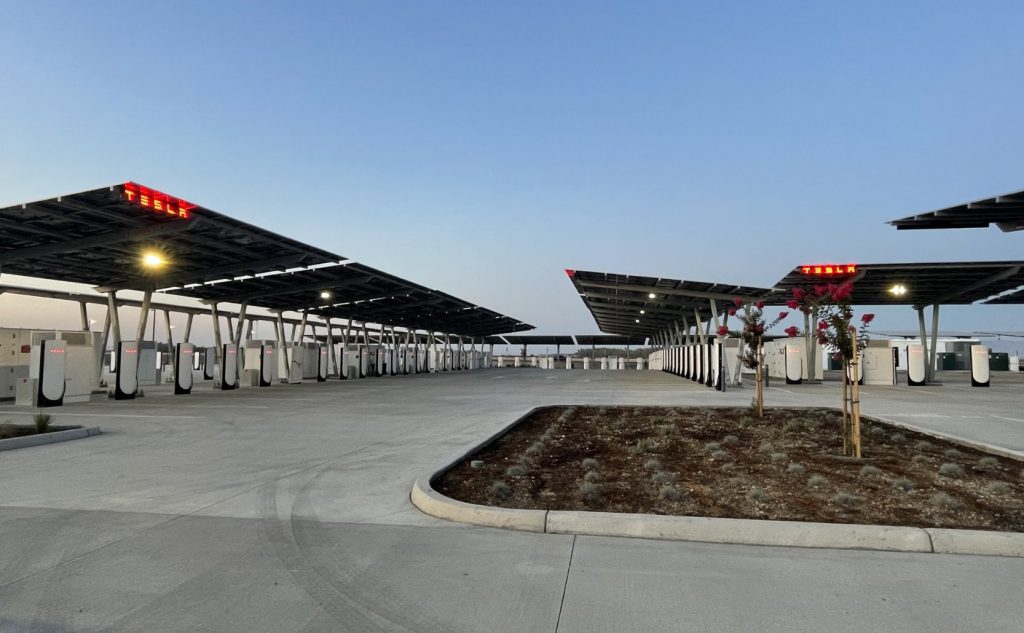

Tesla opens massive solar Supercharger station in California

The Supercharger opened to customers ahead of the Fourth of July weekend, while Tesla continues phase two of construction on the site.

Tesla has officially launched the first several Supercharging posts at a massive station in California, notably including solar canopies and grid-scale batteries to offer completely renewable charging.

Last week, Tesla announced on X that it opened the first 84 Supercharger stalls of a planned 168-stall station in Lost Hills, California. Additionally, the massive Supercharger project features 11MW of solar canopies and 10 Megapack batteries for off-grid charging powered entirely by solar energy.

Tesla completed the first phase of the project just days ahead of the busy Fourth of July holiday weekend and said that initial construction took just eight months. In addition to the remaining charging stalls, Tesla says it’s building a set of lounge areas, renderings of which can be seen below alongside current photos of the site.

Notably, the site also includes V4 charging posts for the company’s latest available charging speeds, and it’s located near the busy junction between I-5 and Highway 46 in Kern County.

“Thank you [Kern County] and [PG&E] for collaboration and approvals,” Tesla wrote in a follow-up post.

Credit: Tesla Charging | X


Tesla Supercharger Maps for North America, Europe, and Asia pic.twitter.com/0U5r0XRPyo

— TESLARATI (@Teslarati) July 2, 2025

READ MORE ON TESLA SUPERCHARGERS: Tesla launches ultra-fast V4 Superchargers in China for the first time

Testing at the LA Diner, plus Musk update on potential Tesla solar Gigafactory

The huge Lost Hills Supercharger station completed phase one of construction fairly quickly, especially compared with how long Tesla has been working on its unique Los Angeles diner, drive-in, and Supercharger location. Still, the company was seen performing some testing at the nearly completed diner site earlier this month, and it will reportedly be holding a job fair.

Elon Musk also responded on Monday morning to a post on X, suggesting that Tesla is “thinking about” building a U.S.-based solar Gigafactory in order to help support increased power needs with AI growth, and to bolster domestic solar production.


News

Tesla driver walks away from major accident with minor injuries

The driver sustained only minor injuries, and the exact cause of the crash remains under investigation.

The driver of a Tesla Model Y survived and walked away from a harrowing accident on Monday in California, sustaining only minor injuries despite the vehicle being impaled by a guardrail.

At around 4:34 a.m. on Monday, the Los Banos division of the California Highway Patrol (CHP) responded to the accident on I-5 near Panoche Road, involving a 23-year-old in a Tesla Model Y. According to a post on social media, the driver veered off the road from the northbound lanes for unknown reasons before crashing directly into the guardrail, which impaled the vehicle.

You can read the full message and see the photos from Los Banos CHP below, as shared in a Facebook post on Monday afternoon.

This morning a Tesla model y was traveling in the #1 northbound lane of I-5 north of Panoche Rd. For unknown reasons driver allowed V-1 to veer off the roadway, travel through a dirt center divide, and crashed into the fixed metal guardrail. Lucky for the driver he only sustained minor injuries and was able to walk away. Driving a vehicle requires 100% attention to the road. Avoid distractions and focus on driving.

Credit: CHP Los Banos (via Facebook)


In a statement to SFGate, CHP officer Myles Anderson said that the driver sustained only minor injuries, that no arrests were made, and that drugs and alcohol are not suspected to have been involved. According to Anderson, the report also notes that Tesla’s “cruise control and lane assistance features” were activated. However, it’s not entirely clear whether this refers to Supervised Full Self-Driving (FSD) or to the cruise control and lane assist features built into Autopilot.

At the time of writing, CHP has not yet responded to Teslarati’s request for clarification and additional details on the matter.

Tesla Crash Safety Ratings across its lineup: pic.twitter.com/ny30R7ceji

— TESLARATI (@Teslarati) July 1, 2025

READ MORE ON TESLA SAFETY: Tesla rolls out crucial new safety feature aimed at saving children

The news comes as Tesla has touted its vehicles as incredibly safe for many years. In December, for example, the company highlighted receiving top safety scores from regulators on four continents, including from the National Highway Traffic Safety Administration (NHTSA) and the Insurance Institute for Highway Safety (IIHS) in the U.S.

Over the years, Tesla has also stated its goal of making its vehicles the safest on the road, both in their overall design and in its Autopilot and Full Self-Driving (FSD) programs.
